Revolutionizing Drug Delivery: How AI Optimizes Polymerization for Advanced Therapeutics

Abstract

This article explores the transformative role of artificial intelligence (AI) in optimizing polymerization process parameters for drug delivery systems. Aimed at researchers and development professionals, it covers foundational concepts, practical AI methodologies for parameter prediction and control, advanced troubleshooting and optimization strategies, and rigorous validation against traditional methods. By synthesizing current research and applications, it provides a comprehensive guide to leveraging AI for developing more efficient, consistent, and innovative polymeric biomaterials, ultimately accelerating the path to clinical translation.

The AI-Polymer Synergy: Foundational Concepts for Smarter Drug Delivery Systems

Polymerization is a cornerstone of modern pharmaceutical development, enabling the synthesis of polymers for drug delivery systems, excipients, medical devices, and novel therapeutic agents. The precise control of polymerization parameters—such as monomer concentration, initiator type and amount, temperature, solvent, and reaction time—is critical for defining polymer properties like molecular weight, polydispersity (PDI), composition, and architecture. These properties, in turn, directly influence the safety, efficacy, stability, and manufacturability of the final pharmaceutical product. Within the broader thesis on AI-driven optimization, these parameters become the critical features for machine learning models to predict, optimize, and control polymerization processes, moving from empirical batch-to-batch adjustments to precise, first-time-right synthesis.

Key Parameters & Their Impact on Pharmaceutical Polymer Properties

The following table summarizes the primary polymerization parameters and their effects on critical quality attributes (CQAs) of pharmaceutical polymers.

Table 1: Key Polymerization Parameters and Their Impact on Polymer CQAs

| Parameter | Typical Range (Example) | Primary Impact on Polymer CQAs | Pharmaceutical Relevance |

|---|---|---|---|

| Initiator to Monomer Ratio (I:M) | 1:50 to 1:500 (ATRP) | Molecular Weight (MW), PDI. Lower I:M increases MW. | Controls drug loading capacity & release kinetics in nanoparticles. |

| Reaction Temperature | 60°C - 110°C (FRP) | Polymerization rate, MW, end-group fidelity. | High temperatures may degrade heat-labile monomers or conjugated payloads (e.g., some biologics). |

| Monomer Concentration | 10-50% w/v (RAFT) | Solution viscosity, MW, reaction kinetics. | Affects manufacturability and scale-up feasibility. |

| Solvent Polarity | Toluene to DMSO | Polymer chain conformation, copolymer composition. | Influences compatibility with API and final formulation stability. |

| Reaction Time | 2 - 24 hrs | Monomer conversion, MW evolution, side reactions. | Determines batch cycle time and potential for degradation. |

| Target Degree of Polymerization (DP) | 20 - 500 | Directly sets theoretical MW. | Tailors hydrogel mesh size for controlled drug diffusion. |

Application Note: AI-Optimized Synthesis of pH-Responsive Nanoparticle Copolymers

Objective: To synthesize a poly(D,L-lactide-co-glycolide)-b-poly(ethylene glycol) (PLGA-PEG) copolymer with optimized parameters for nanoparticle formation and a defined acid-labile drug release profile, using a design of experiments (DoE) guided by an AI model.

Background: PLGA-PEG block copolymers self-assemble into nanoparticles for drug delivery. The lactide:glycolide (L:G) ratio in the PLGA block dictates degradation rate, while the PEG block length controls stealth properties. AI models can predict the optimal parameter combination to achieve a target nanoparticle size (80-120 nm) and drug release half-life (~24 hours at pH 5.0).

Experimental Protocol: Ring-Opening Polymerization (ROP) of PLGA-PEG

Materials (The Scientist's Toolkit)

| Reagent/Material | Function | Supplier Example (for information) |

|---|---|---|

| D,L-Lactide | Hydrophobic, crystalline monomer. Degradation rate modulator. | Sigma-Aldrich, Corbion |

| Glycolide | Hydrophobic monomer. Increases degradation rate. | Sigma-Aldrich, Corbion |

| Monomethoxy PEG-OH (mPEG, 5kDa) | Macro-initiator & hydrophilic block. Provides "stealth" properties. | JenKem Technology |

| Stannous 2-ethylhexanoate (Sn(Oct)₂) | Catalyst for ROP. | Sigma-Aldrich |

| Toluene, anhydrous | Reaction solvent. Must be dry to prevent chain transfer. | Sigma-Aldrich |

| Dichloromethane (DCM) | Polymer purification (precipitation solvent). | Fisher Scientific |

| Cold Diethyl Ether / Methanol | Non-solvent for polymer precipitation and washing. | Fisher Scientific |

Procedure:

- Charge & Dry: In a flame-dried, nitrogen-purged round-bottom flask, charge mPEG (1.0 g, 0.2 mmol), D,L-lactide (L) and glycolide (G) at the AI-predicted molar ratio (e.g., L:G = 75:25, total 5.0 mmol), and Sn(Oct)₂ (0.02 mmol in 100 µL toluene).

- Dissolve: Add anhydrous toluene (5 mL). Stir under N₂ until a clear solution is obtained.

- Polymerize: Immerse the flask in an oil bath pre-heated to the AI-prescribed temperature (e.g., 110°C). Stir vigorously for the AI-prescribed time (e.g., 6 hours).

- Terminate & Recover: Cool to room temperature. Dilute with DCM (10 mL) and precipitate the copolymer dropwise into a 10-fold excess of cold diethyl ether/methanol mixture (4:1 v/v).

- Purify: Isolate the precipitate by filtration or centrifugation. Wash twice with cold ether and dry under high vacuum (<0.1 mbar) for 24 hours.

- Characterize: Analyze by ¹H-NMR (for L:G ratio and conversion), GPC (for Mn and PDI), and DSC (for Tg).
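The charge quantities in this procedure fix both the monomer masses and the theoretical block length. The sketch below works through that arithmetic for the example feed (5.0 mmol total monomer, L:G = 75:25, 0.2 mmol mPEG of 5 kDa). The function name `rop_feed`, the molar masses, and the assumption that every mPEG chain initiates exactly one PLGA block with equal conversion of both monomers are illustrative simplifications, not part of the original protocol.

```python
# Molar masses in g/mol for the cyclic dimers (assumed reference values).
M_LACTIDE = 144.13
M_GLYCOLIDE = 116.07


def rop_feed(n_monomer_mmol, lactide_fraction, n_initiator_mmol,
             mn_initiator, conversion=1.0):
    """Return monomer masses (mg) and theoretical Mn (g/mol) of the diblock.

    Assumes one PLGA block per mPEG chain and the same fractional
    conversion for lactide and glycolide.
    """
    n_lactide = n_monomer_mmol * lactide_fraction
    n_glycolide = n_monomer_mmol - n_lactide
    mass_lactide_mg = n_lactide * M_LACTIDE        # mmol * g/mol = mg
    mass_glycolide_mg = n_glycolide * M_GLYCOLIDE
    # Mass of PLGA grown per mole of initiator (mg / mmol = g/mol).
    mn_plga = conversion * (mass_lactide_mg + mass_glycolide_mg) / n_initiator_mmol
    return {
        "mass_lactide_mg": mass_lactide_mg,
        "mass_glycolide_mg": mass_glycolide_mg,
        "mn_theory": mn_initiator + mn_plga,
    }


# Example feed from the procedure above.
feed = rop_feed(n_monomer_mmol=5.0, lactide_fraction=0.75,
                n_initiator_mmol=0.2, mn_initiator=5000.0)
```

Under these assumptions the feed corresponds to roughly 540 mg of lactide and 145 mg of glycolide, with a theoretical Mn near 8.4 kg/mol at full conversion. Comparing that figure with the GPC and ¹H-NMR results is one simple check on initiation efficiency.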

AI Integration Workflow: The parameters (L:G ratio, I:M, temperature, time) from historical and experimental batches serve as input features (X). Measured outputs (Y) include Mn, PDI, nanoparticle size (DLS), and drug release T₅₀%. A Bayesian optimization model suggests the next parameter set to experiment with, iteratively converging on the global optimum.

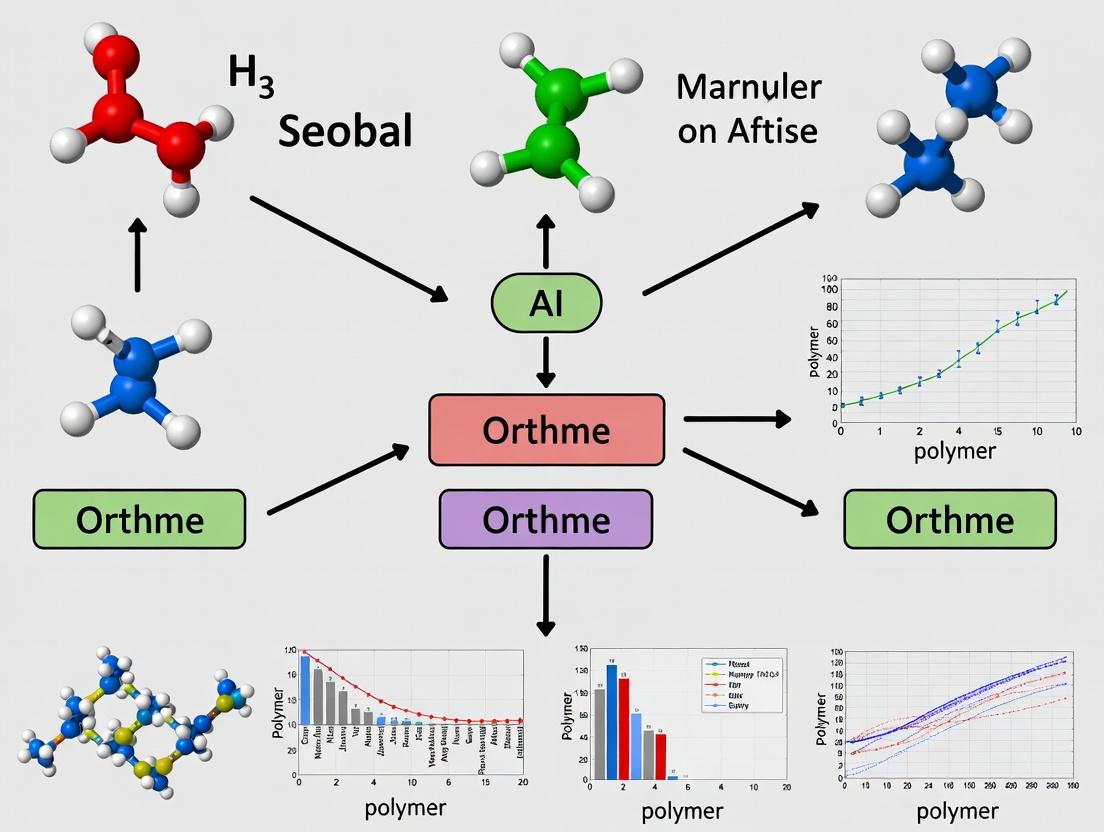

Diagram 1: AI-Driven Polymer Parameter Optimization Loop

Detailed Protocol: Controlled Radical Polymerization (RAFT) for a Drug-Polymer Conjugate

Objective: To synthesize a well-defined (low PDI) poly(N-(2-hydroxypropyl) methacrylamide) (pHPMA) copolymer with a pendant drug moiety via Reversible Addition-Fragmentation Chain Transfer (RAFT) polymerization, a process highly sensitive to parameter control.

Materials (Key Reagents)

| Reagent | Function |

|---|---|

| HPMA monomer | Primary hydrophilic, biocompatible monomer. |

| Drug-monomer conjugate (e.g., Gem-MA) | Monomer functionalized with active pharmaceutical ingredient (API). |

| 4-Cyano-4-[(dodecylsulfanylthiocarbonyl)sulfanyl] pentanoic acid (CDTPA) | RAFT chain transfer agent (CTA). Controls growth & PDI. |

| 4,4'-Azobis(4-cyanovaleric acid) (ACVA) | Azo-initiator, decomposes thermally to generate radicals. |

| Dimethyl sulfoxide (DMSO) | Solvent for polymerization. |

Procedure:

- Formulation: In a vial, dissolve HPMA (2.0 g, 13.9 mmol), Gem-MA (0.1 g, 0.23 mmol), CDTPA (28.1 mg, 0.0695 mmol), and ACVA (3.9 mg, 0.0139 mmol) in anhydrous DMSO (4 mL, approximately 50% w/v). The AI model prescribes the [M]:[CTA]:[I] ratio as 200:1:0.2.

- Degas: Seal the vial and purge the solution with N₂ or Ar for 20 minutes to remove oxygen, a radical inhibitor.

- Polymerize: Place the vial in a pre-heated block at 70°C for 18 hours.

- Terminate: Cool rapidly in an ice bath. Expose to air to quench radicals.

- Purify: Dilute with water (10 mL) and dialyze (MWCO 3.5 kDa) against water for 48 hours. Lyophilize to obtain the pure drug-polymer conjugate.

- Characterize: Use GPC (aqueous) for Mn and PDI, ¹H-NMR for composition and drug loading efficiency.
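The [M]:[CTA]:[I] ratio determines both the reagent amounts and the molecular weight the GPC measurement should approach. The sketch below applies the usual RAFT estimate, Mn,th = ([M]₀/[CTA]₀) × conversion × M(monomer) + M(CTA), to the 200:1:0.2 formulation. The function names and molar masses are assumptions for illustration, and the estimate ignores initiator-derived chains and the small Gem-MA comonomer fraction.

```python
# Molar masses in g/mol (assumed reference values).
M_HPMA = 143.18
M_CDTPA = 403.67


def raft_moles(n_monomer_mmol, ratio):
    """Convert an [M]:[CTA]:[I] ratio into mmol of CTA and initiator."""
    m, cta, initiator = ratio
    return n_monomer_mmol * cta / m, n_monomer_mmol * initiator / m


def mn_theory(ratio, conversion, m_monomer=M_HPMA, m_cta=M_CDTPA):
    """Theoretical Mn (g/mol), neglecting chains started by the initiator."""
    m, cta, _ = ratio
    return (m / cta) * conversion * m_monomer + m_cta


RATIO = (200, 1, 0.2)
n_cta, n_initiator = raft_moles(13.9, RATIO)   # HPMA charge from the procedure
mn_full = mn_theory(RATIO, conversion=1.0)
mn_80 = mn_theory(RATIO, conversion=0.8)
```

With 13.9 mmol of HPMA this reproduces the 0.0695 mmol CTA and 0.0139 mmol initiator charges, and gives a theoretical Mn of about 29.0 kg/mol at full conversion (about 23.3 kg/mol at 80%).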

Parameter-Signaling Pathway: Understanding how parameters influence the RAFT mechanism is key to control. The diagram below maps this causal chain.

Diagram 2: Parameter Effects on RAFT Polymerization Outcomes

Mastering polymerization parameters is essential in pharmaceutical development: it moves polymer synthesis from an art toward a predictive science. AI-driven optimization, as framed in this thesis, uses these parameters as the fundamental dataset to accelerate the development of advanced polymeric therapeutics with tightly controlled critical quality attributes, supporting robust, scalable, and effective medicines.

The Limitations of Traditional DOE and Statistical Methods in Complex Polymerization

This application note details the critical limitations of traditional Design of Experiments (DOE) and statistical methods when applied to complex polymerization processes. It is framed within a broader thesis advocating for AI-driven optimization as a necessary evolution. Polymerizations, such as controlled radical polymerizations (ATRP, RAFT), ring-opening polymerizations, and multicomponent copolymerizations, exhibit non-linear kinetics, high interdependency among parameters, and multi-dimensional objectives (molecular weight, dispersity, sequence control, functionality). Traditional methods often fail to capture these complexities efficiently, leading to suboptimal processes and hindered innovation.

Quantitative Comparison of Method Limitations

The table below summarizes key limitations based on recent literature and industrial case studies.

Table 1: Limitations of Traditional Methods in Polymerization Optimization

| Limitation Aspect | Traditional DOE/Statistical Method | Impact on Complex Polymerization | Typical Performance Gap |

|---|---|---|---|

| Model Flexibility | Relies on pre-defined, often low-order polynomial models (e.g., quadratic). | Cannot capture high-order non-linearities and sharp response cliffs common in kinetic transitions. | Model R² plateaus at 0.6-0.8 for key responses like dispersity (Ð). |

| Factor Interactions | Manual selection of interactions to test; limited to 2- or 3-way. | Misses complex interactions (>3-way) between e.g., catalyst, ligand, solvent, and temperature. | Up to 30% of critical variance remains unexplained. |

| Experimental Efficiency | Full or fractional factorial designs; resource-intensive for >5 factors. | Number of experiments scales poorly with the 10+ factors common in formulated polymerization systems. | 50-100+ runs often needed for initial screening, consuming costly monomers/reagents. |

| Dynamic Process Handling | Treats process parameters as static set points. | Ineffective for optimizing semi-batch feeds, temperature ramps, or reaction stoppage time. | Fails to identify optimal temporal profiles, leaving ~15-25% yield or selectivity improvement unrealized. |

| Multi-Objective Optimization | Sequential or weighted sum approaches; Pareto front mapping is cumbersome. | Difficulty balancing competing goals (e.g., high MW vs. low Ð, high conversion vs. end-group fidelity). | Identifies dominated solutions; inefficient exploration of the true Pareto frontier. |

| Noise & Heterogeneity | Assumes homogeneous, well-mixed systems with constant error variance. | Struggles with spatially heterogeneous systems (e.g., viscous gradients, precipitation) and non-stationary noise. | Process robustness (Cpk) predictions are often >30% overestimated. |
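The multi-objective row in the table turns on the idea of dominated solutions. As a minimal illustration, the sketch below filters a set of candidate runs down to the Pareto front for two competing goals, maximizing Mn and minimizing Ð. The data points and the function name `pareto_front` are hypothetical.

```python
def pareto_front(points):
    """Return the non-dominated (Mn, dispersity) pairs.

    A point dominates another if its Mn is at least as high and its
    dispersity at least as low, with a strict improvement in one of them.
    """
    def dominates(a, b):
        no_worse = a[0] >= b[0] and a[1] <= b[1]
        strictly_better = a[0] > b[0] or a[1] < b[1]
        return no_worse and strictly_better

    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical results: (Mn in g/mol, dispersity).
runs = [
    (20_000, 1.10),
    (35_000, 1.25),
    (30_000, 1.30),
    (50_000, 1.60),
    (45_000, 1.15),
    (15_000, 1.12),
]
front = pareto_front(runs)
```

In this made-up set, three of the six runs are dominated and would never be the right choice whatever the relative weighting of Mn and Ð. A weighted-sum optimization returns a single point and gives no view of the remaining trade-off curve.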

Detailed Experimental Protocols

Protocol 1: Traditional DOE for RAFT Copolymerization – Highlighting Inefficiency

This protocol illustrates a standard approach and its data collection burden.

Objective: Model the influence of four factors on molecular weight (Mn) and dispersity (Ð) of a styrene-butyl acrylate gradient copolymer.

Factors & Levels:

- A: [Monomer]₀/[RAFT]₀ Ratio (100, 200, 300)

- B: Reaction Temperature (60°C, 70°C, 80°C)

- C: Solvent % (30%, 50%, 70% Toluene)

- D: Initiator Type (Thermal, UV, Redox)

Design: A full factorial design for 3 levels across 4 factors is 3⁴ = 81 experiments. A central composite design (CCD) requires ~30-40 runs with center points.
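The run counts quoted here follow from simple combinatorics, sketched below. The level values are taken from the factor list above. The helper names `ccd_runs` and `quadratic_terms` and the choice of six center points are assumptions for illustration. Note also that a standard CCD places axial points on numeric axes, so a categorical factor such as initiator type would normally need separate handling.

```python
from itertools import product

# Factor levels from the protocol above.
levels = {
    "M_to_RAFT": [100, 200, 300],
    "temperature_C": [60, 70, 80],
    "toluene_pct": [30, 50, 70],
    "initiator": ["thermal", "UV", "redox"],
}

# Full factorial: every combination of every level.
full_factorial = [dict(zip(levels, combo)) for combo in product(*levels.values())]


def ccd_runs(k, n_center):
    """Runs in a central composite design: 2^k factorial + 2k axial + center."""
    return 2 ** k + 2 * k + n_center


def quadratic_terms(k):
    """Coefficients in a full quadratic model: intercept, k linear,
    k squared, and k(k-1)/2 two-way interaction terms."""
    return 1 + 2 * k + k * (k - 1) // 2


n_full = len(full_factorial)          # 3^4 combinations
n_ccd = ccd_runs(k=4, n_center=6)     # assumed 6 center points
n_terms = quadratic_terms(k=4)
```

For four factors the quadratic model has 15 coefficients, so a 30-run CCD leaves about 15 degrees of freedom for estimating error and lack of fit.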

Procedure:

- Setup: In a glovebox, prepare 40 reaction vials according to the DOE matrix. Charge each with specified amounts of styrene, butyl acrylate, RAFT agent (CPDB), and solvent.

- Initiation: For thermal initiator conditions, add AIBN. For UV, add photo-initiator (TPO). For redox, prepare separate solutions.

- Polymerization: Seal vials, remove from glovebox, and place in pre-heated thermoblocks or UV reactors. Quench reactions at predetermined times (e.g., 2, 4, 8 hours) by exposure to air and cooling.

- Analysis: Analyze each sample via Gel Permeation Chromatography (GPC) for Mn and Ð. Record conversion via ¹H NMR.

- Modeling: Input data into statistical software (e.g., JMP, Minitab). Perform stepwise regression to fit a quadratic model:

Response = β₀ + ΣβᵢAᵢ + ΣβᵢᵢAᵢ² + ΣβᵢⱼAᵢAⱼ.

Key Limitation Demonstrated: The 40+ experiments are resource-heavy. The quadratic model will likely fail to accurately predict the optimal Mn-Ð combination if the response surface contains complex curvature, leading to further confirmatory runs.

Protocol 2: Challenge of Dynamic Optimization via Traditional Methods

This protocol shows the inadequacy of static designs for dynamic processes.

Objective: Determine the optimal comonomer feed profile for a semi-batch ATRP to achieve a target block composition with minimal termination.

Traditional Approach: A split-plot design testing 3-4 pre-defined feed profiles (e.g., linear, parabolic, stepped).

Procedure:

- Profile Definition: Define 4 simplistic feed rate profiles (constant, linear increase, linear decrease, two-step).

- Experimental Execution: Set up a semi-batch reactor with syringe pump. For each profile, run the polymerization, maintaining other factors (temp, stir rate) constant.

- Sampling: Take periodic samples for GPC and NMR to track composition and kinetics.

- Analysis: Compare final polymer properties. Select the best among the tested profiles.

Key Limitation Demonstrated: The true optimal profile is almost certainly not one of the pre-defined, simplistic shapes. This method explores a tiny, arbitrary fraction of the possible profile space, likely missing superior solutions involving complex adaptive feeds.

Visualizations

Title: Traditional DOE Workflow and Limitation Loops

Title: Pareto Frontier: Traditional DOE vs. AI-Guided Search

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Complex Polymerization Studies

| Reagent/Material | Function & Relevance to Complexity |

|---|---|

| High-Purity Monomers with Inhibitors Removed | Baseline reactivity is critical. Variability introduces unmodeled noise, confounding DOE results. |

| Functional Initiators & Chain Transfer Agents | Enable precise structure control. Their kinetics add dimensions (e.g., end-group fidelity) difficult for traditional DOE to optimize. |

| Transition Metal Catalysts (e.g., CuBr/TPMA for ATRP) | Central to controlled polymerization. Ligand-metal ratios and deoxygenation are critical, interactive factors. |

| Livingness Quenching Solutions | Required for precise kinetic sampling (e.g., freezing reaction at time t). Inconsistent quenching adds error. |

| Internal Standards for NMR (e.g., 1,3,5-Trioxane) | Essential for accurate conversion data, the primary response for kinetic modeling. |

| Calibrated GPC/SEC Standards | Accurate molecular weight and dispersity measurement is the primary validation metric. Poor calibration invalidates all model fitting. |

| Inert Atmosphere Equipment (Glovebox, Schlenk Line) | Oxygen sensitivity turns factor control into a binary success/failure, creating non-linear response cliffs. |

| Automated Liquid Handling & Microscale Reactors | Enable higher experimental throughput for both traditional DOE and, more effectively, for AI-driven iterative design. |

This document provides detailed Application Notes and Experimental Protocols for key Artificial Intelligence (AI) and Machine Learning (ML) paradigms, framed within the context of optimizing polymerization process parameters for advanced drug delivery system development. The integration of these computational techniques enables the precise, data-driven design of polymeric carriers, impacting critical attributes such as drug loading, release kinetics, and biocompatibility.

Artificial Neural Networks (ANNs) for Predictive Modeling

Application Note

ANNs serve as universal function approximators, modeling complex non-linear relationships between polymerization inputs (e.g., monomer concentration, initiator ratio, temperature, time) and resultant polymer properties (e.g., molecular weight, polydispersity index (PDI), glass transition temperature). This is critical for in-silico formulation screening.

Key Quantitative Data Summary:

Table 1: Typical ANN Performance on Polymer Property Prediction

| Polymer System | ANN Architecture | Mean Absolute Error (MAE) | R² Score | Key Predicted Property |

|---|---|---|---|---|

| PLGA Nanoparticles | 3 Hidden Layers (10,15,10 nodes) | Mw: 1.2 kDa | 0.94 | Molecular Weight (Mw) |

| PEG-PLA Copolymers | 4 Hidden Layers (20,40,40,20 nodes) | PDI: 0.08 | 0.89 | Polydispersity Index (PDI) |

| Chitosan-TPP Polyplexes | 2 Hidden Layers (15,10 nodes) | Z-Avg: 15 nm | 0.91 | Hydrodynamic Diameter |

Experimental Protocol: ANN Development for Polymerization Optimization

Aim: To construct and validate an ANN model predicting copolymer composition based on reactor conditions.

Materials: Historical batch data (min. 100 data points), Python with TensorFlow/PyTorch, Jupyter Notebook.

Procedure:

- Data Curation: Compile dataset with features `[T_init, [Monomer_A], [Monomer_B], Stir_Rate, Time]` and target `[Copolymer_Comp_Mole%_A]`.

- Preprocessing: Normalize all features to a [0,1] range using Min-Max scaling. Split data 70/15/15 for training/validation/testing.

- Model Architecture: Implement a feedforward network with:

  - Input Layer: 5 nodes.

  - Hidden Layers: 2 layers with 12 and 8 nodes, using ReLU activation.

  - Output Layer: 1 node (linear activation for regression).

- Training: Use Adam optimizer (lr=0.001), Mean Squared Error (MSE) loss. Train for 500 epochs with batch size=8. Use validation set for early stopping.

- Validation: Evaluate final model on the held-out test set. Report MAE, RMSE, and R².
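The preprocessing and validation steps can be sketched without any ML framework. The block below shows a 70/15/15 split, Min-Max scaling fitted on the training rows only, the three reported metrics, and a parameter count for the 5-12-8-1 architecture. All function names are hypothetical. Fitting the scaler on the training subset rather than the full dataset is a design choice assumed here to avoid leaking test information, and it means validation and test values can fall slightly outside [0,1].

```python
import math
import random


def split_indices(n, seed=0, fractions=(0.70, 0.15)):
    """Shuffle row indices and split them into train/validation/test."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def fit_minmax(rows):
    """Per-feature (min, max) computed from the training rows."""
    return [(min(col), max(col)) for col in zip(*rows)]


def apply_minmax(rows, ranges):
    """Scale each feature with the stored ranges; constant features map to 0."""
    return [[(v - lo) / (hi - lo) if hi > lo else 0.0
             for v, (lo, hi) in zip(row, ranges)] for row in rows]


def regression_metrics(y_true, y_pred):
    """Return (MAE, RMSE, R^2) for paired observations and predictions."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    sse = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return mae, math.sqrt(sse / n), 1.0 - sse / ss_tot


def dense_parameter_count(layer_sizes):
    """Weights plus biases of a fully connected network."""
    return sum(a * b + b for a, b in zip(layer_sizes, layer_sizes[1:]))


train_idx, val_idx, test_idx = split_indices(100)
n_params = dense_parameter_count([5, 12, 8, 1])
```

The network described above has 185 trainable parameters against roughly 70 training rows when only the minimum 100 data points are available, which is one reason the early-stopping step matters.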

Visualization: ANN Workflow for Polymer Design

Title: ANN-Driven Polymer Formulation Optimization Workflow

Bayesian Optimization for Parameter Space Exploration

Application Note

Bayesian Optimization (BO) is a sample-efficient global optimization strategy for expensive black-box functions. In polymerization research, it is used to navigate complex, high-dimensional parameter spaces (e.g., solvent ratio, injection rate, temperature gradient) to find the global optimum for a target objective (e.g., maximize drug encapsulation efficiency) with minimal experimental iterations.

Key Quantitative Data Summary:

Table 2: Bayesian Optimization Performance in Polymerization Screening

| Optimization Target | Parameter Space Dimensions | BO Algorithm (Surrogate/Acquisition) | Experiments to Optimum | Improvement vs. Baseline |

|---|---|---|---|---|

| Encapsulation Efficiency (%) | 5 | Gaussian Process/Expected Improvement | 22 | +35% |

| Nanoparticle Uniformity (PDI) | 4 | Tree-structured Parzen Estimator/Upper Confidence Bound | 18 | PDI reduced by 0.21 |

| Reactor Yield (g) | 6 | Gaussian Process/Probability of Improvement | 25 | +42% yield |

Experimental Protocol: BO for Reaction Condition Optimization

Aim: To maximize the yield of a RAFT polymerization using ≤ 30 experimental runs.

Materials: Automated reactor system (or manual setup with strict SOPs), BO library (e.g., scikit-optimize, Ax), target monomer/initiator/chain transfer agent.

Procedure:

- Define Domain: Specify bounds for key parameters: `Temperature (40-80°C)`, `[Initiator]/[Monomer] ratio (0.001-0.1)`, `Reaction Time (2-24 h)`, `Solvent % (30-70%)`.

- Initialize: Run 5 initial diverse experiments (e.g., via Latin Hypercube Sampling) to seed the model.

- Model & Propose: Fit a Gaussian Process (GP) surrogate model to all collected `(parameters, yield)` data. Use the Expected Improvement (EI) acquisition function to compute the next most promising parameter set.

- Experiment & Evaluate: Execute the polymerization at the proposed conditions. Precisely measure and record the yield.

- Iterate: Append the new data to the history. Repeat steps 3-4 until the iteration limit (30) is reached or convergence is observed.

- Conclusion: Report the parameter set giving the highest observed yield.
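Two pieces of this loop are compact enough to sketch directly: the Latin Hypercube initialization and the Expected Improvement score. The block below is a standard-library illustration, not a replacement for a BO library. The GP surrogate itself is omitted, so `expected_improvement` takes the surrogate's predicted mean and standard deviation as given inputs. Function names, the seed, and the `xi` exploration offset are assumptions.

```python
import math
import random

# Parameter bounds from the protocol: temperature (°C), [I]/[M] ratio,
# reaction time (h), solvent (%).
BOUNDS = [(40.0, 80.0), (0.001, 0.1), (2.0, 24.0), (30.0, 70.0)]


def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points with exactly one point per equal-width
    stratum along every dimension."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        points = [lo + (hi - lo) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        rng.shuffle(points)               # decouple strata across dimensions
        columns.append(points)
    return [list(row) for row in zip(*columns)]


def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization, given a Gaussian prediction N(mu, sigma^2)
    and the best yield observed so far."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(gain, 0.0)
    z = gain / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return gain * cdf + sigma * pdf


initial_design = latin_hypercube(5, BOUNDS)
```

EI rewards both a high predicted yield and high predictive uncertainty, which is what lets the loop trade exploitation against exploration. Because the [Initiator]/[Monomer] bound spans two orders of magnitude, sampling that axis on a log scale may be worth considering; the sketch keeps it linear for simplicity.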

Visualization: Bayesian Optimization Loop

Title: Bayesian Optimization Iterative Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Polymerization Research

| Item / Reagent | Function / Rationale |

|---|---|

| RAFT Chain Transfer Agent (e.g., CPDB) | Enables controlled radical polymerization, yielding polymers with low PDI—a critical target for ML prediction and optimization. |

| Functionalized Monomers (e.g., NHS-acrylate) | Provides handles for subsequent drug conjugation; precise incorporation levels are a common optimization objective for BO. |

| Size-Exclusion Chromatography (SEC) System | Gold-standard for measuring molecular weight and PDI, generating the essential quantitative data for training ANN models. |

| Dynamic Light Scattering (DLS) & Zeta Potential Analyzer | Provides nanoparticle size (Z-avg) and surface charge, key performance indicators for drug delivery systems modeled by ANNs. |

| Automated Chemputation Reactor Platform (e.g., Chemspeed) | Enables high-fidelity, reproducible execution of the sequential experiments proposed by a Bayesian Optimization algorithm. |

| PyTorch/TensorFlow & scikit-optimize/BoTorch Libraries | Core open-source software frameworks for building custom ANN architectures and implementing BO loops, respectively. |

Application Notes

Polymeric nanoparticles (PNPs) are pivotal in drug delivery, with their performance critically dependent on key physicochemical properties: Molecular Weight (MW), Polydispersity Index (PDI), degradation kinetics, and consequent drug release profiles. These properties are not intrinsic but are directly dictated by the parameters of the synthesis process. This document, framed within a thesis on AI-driven optimization of polymerization, details the relationships between process inputs and polymer properties, providing protocols for systematic data generation to train predictive machine learning models.

Table 1: Key Process Parameters and Their Influence on Polymer Properties

| Process Parameter | Typical Range Studied | Primary Influence on MW | Primary Influence on PDI | Impact on Degradation/Drug Release |

|---|---|---|---|---|

| Monomer Concentration | 0.5 - 5.0 M | Direct positive correlation; higher concentration increases MW. | Often increases with high concentration due to viscosity effects. | Higher MW polymers degrade slower, prolonging drug release. |

| Initiator Concentration | 0.1 - 5.0 mol% (vs. monomer) | Inverse correlation; higher initiator lowers MW. | Lower initiator can increase PDI; optimal exists for minimal PDI. | Affects chain length distribution, leading to complex/multi-phasic release. |

| Reaction Temperature | 50 - 90 °C | Inverse correlation; higher temperature reduces MW. | Higher temperature can broaden PDI via side reactions. | Accelerates both polymer degradation and drug diffusion. |

| Reaction Time | 1 - 24 hours | Increases until monomer depletion or equilibrium. | Generally decreases with time to a plateau as chains grow uniformly. | Longer times yield higher MW, typically slowing release. |

| Solvent Polarity (in free radical polymerization) | Varies (e.g., Toluene vs. DMF) | Can affect chain propagation/termination rates. | Significant impact; can lead to narrower or broader distributions. | Influences polymer porosity/compactness, affecting diffusion. |

| Surfactant Concentration (in emulsion polymerization) | 0.1 - 5.0 wt% | Indirect effect via control of particle number. | Critical for obtaining narrow particle size and MW distributions. | Controls nanoparticle size, a major factor in release rate. |

Experimental Protocols

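Protocol 1: Controlled Radical Polymerization (ATRP) for Systematic MW/PDI Variation

Objective: Synthesize poly(methyl methacrylate) (PMMA) libraries with controlled MW and PDI by modulating key parameters. ATRP applies to vinyl monomers such as methyl methacrylate; poly(lactide-co-glycolide) (PLGA) libraries are instead prepared by ring-opening polymerization, as in the PLGA-PEG ROP protocol earlier in this article.

Materials: See "Research Reagent Solutions" below.

Procedure: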

- Parameter Design: Using a Design of Experiments (DoE) software (e.g., JMP, Minitab), create a factorial design varying: Monomer (M) (1-3 M), Initiator (I) (0.2-1.0 mol%), Catalyst (CuBr) (1.0 eq to I), Ligand (PMDETA) (1.1 eq to CuBr), and Time (2-8 h). Temperature is held constant at 70°C.

- Reaction Setup: In a series of dried Schlenk flasks under N₂, prepare mixtures according to the DoE matrix. Use anhydrous solvent (e.g., anisole). Degas via three freeze-pump-thaw cycles.

- Polymerization: Seal flasks under N₂ and immerse in a pre-heated oil bath at 70°C with magnetic stirring for the designated time.

- Termination: Rapidly cool in an ice bath. Dilute with THF and pass through a short alumina column to remove catalyst.

- Precipitation & Drying: Dropwise add polymer solution into cold, rapidly stirring methanol (10x volume). Filter the precipitate, wash with methanol, and dry in vacuo for 24 h.

- Analysis: Determine MW and PDI by Gel Permeation Chromatography (GPC) using THF as eluent and polystyrene standards. Submit data to the central database for AI model training.

Protocol 2: In Vitro Degradation and Drug Release Kinetics

Objective: Correlate process-induced polymer properties with degradation and release profiles of a model drug (e.g., Doxorubicin).

Materials: Synthesized polymers (from Protocol 1), PBS (pH 7.4, 0.1 M), Model Drug, Dialysis tubing (MWCO 3.5-14 kDa), HPLC system.

Procedure:

- Nanoparticle Formulation: Prepare drug-loaded PNPs via nanoprecipitation or emulsion-solvent evaporation. For each polymer batch, dissolve 50 mg polymer and 5 mg drug in organic solvent (e.g., acetone). Inject into 10 mL stirred PBS + 0.5% w/v stabilizer. Evaporate organic solvent overnight.

- Characterization: Measure particle size and PDI via Dynamic Light Scattering (DLS). Filter (0.45 µm) and lyophilize a portion for MW tracking.

- Degradation Study: Dispense 5 mg of lyophilized, drug-free PNPs into 1 mL PBS in microtubes (n=3 per batch). Incubate at 37°C under gentle agitation.

- Sampling for MW Loss: At predetermined intervals (e.g., days 1, 3, 7, 14, 28), centrifuge a set of tubes. Wash the pellet with water, lyophilize, and analyze MW via GPC.

- Drug Release Study: Place 1 mL of drug-loaded PNP suspension (∼5 mg/mL) in a dialysis bag. Immerse in 30 mL release medium (PBS, 37°C) with gentle stirring. At each time point, withdraw 1 mL of external medium (replace with fresh PBS).

- Quantification: Analyze drug concentration via HPLC/UV-Vis. Plot cumulative release (%) vs. time. Fit data to models (e.g., Higuchi, Korsmeyer-Peppas).

- Data Integration: Correlate initial MW, PDI, and particle size with degradation half-life and drug release kinetics (e.g., t₅₀%). Feed correlations into the AI optimization pipeline.
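Two calculations in the release study are easy to get wrong: correcting cumulative release for the drug removed with each withdrawn aliquot, and fitting the Korsmeyer-Peppas model F = k·tⁿ. The sketch below shows both with the standard library. The function names, the 30 mL medium volume treated as the total volume, and the example numbers are assumptions. The log-log fit is conventionally applied only to the early portion of the curve (roughly the first 60% of release).

```python
import math


def cumulative_release_pct(concs_mg_per_ml, v_medium_ml, v_sample_ml, dose_mg):
    """Cumulative release (%) at each time point, adding back the drug
    removed in earlier aliquots that were replaced with fresh buffer."""
    released, removed_mg = [], 0.0
    for conc in concs_mg_per_ml:
        in_medium_mg = conc * v_medium_ml
        released.append(100.0 * (in_medium_mg + removed_mg) / dose_mg)
        removed_mg += conc * v_sample_ml
    return released


def fit_korsmeyer_peppas(times, fractions):
    """Least-squares fit of log F = log k + n log t; returns (k, n)."""
    xs = [math.log(t) for t in times]
    ys = [math.log(f) for f in fractions]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
             / sum((x - x_mean) ** 2 for x in xs))
    return math.exp(y_mean - slope * x_mean), slope


# Hypothetical measurements: 1 mL withdrawn from 30 mL, 5 mg drug loaded.
release = cumulative_release_pct([0.01, 0.02], v_medium_ml=30.0,
                                 v_sample_ml=1.0, dose_mg=5.0)

# Synthetic check: data generated with k = 0.1 and n = 0.5.
hours = [1.0, 2.0, 4.0, 8.0]
k_fit, n_fit = fit_korsmeyer_peppas(hours, [0.1 * t ** 0.5 for t in hours])
```

The fitted exponent n is the quantity usually carried forward as a model target, since it summarizes the release mechanism in a single number per batch.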

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol | Key Consideration for AI Study |

|---|---|---|

| Lactide/Glycolide Monomers | Core building blocks for biodegradable PLGA polymers. | Source purity and isomer ratio (D/L) must be standardized across all experiments to reduce noise. |

| Alkoxyamine Initiator (e.g., BlocBuilder) | Enables controlled Nitroxide-Mediated Polymerization (NMP). | Provides predictable kinetics, crucial for modeling MW as a function of time/concentration. |

| Copper Bromide (CuBr) / Ligand (PMDETA) | Catalyst system for Atom Transfer Radical Polymerization (ATRP). | Must be meticulously purified and stored. Variability here is a major source of experimental error. |

| Anhydrous Solvents (Toluene, Anisole, DMF) | Reaction medium. Polarity affects kinetics and chain growth. | Water content must be minimized (<50 ppm). Use a consistent sourcing and drying protocol. |

| Dialysis Tubing (MWCO 3.5 kDa) | Physical barrier for in vitro drug release studies. | MWCO must be significantly lower than particle size but allow free drug diffusion. Batch-to-batch consistency is vital. |

| PBS Buffer (pH 7.4) | Standard physiological medium for degradation/release. | Must contain 0.02% sodium azide to prevent microbial growth in long-term studies, unless contraindicated. |

| GPC/SEC Standards (Narrow PS or PMMA) | Calibrants for determining MW and PDI relative to the standards. | Use multiple narrow standards. Ideally, couple with light scattering for absolute MW values for model training. |

| Model Drug (e.g., Doxorubicin HCl) | Active compound for release studies. | High solubility in aqueous medium and a distinct UV-Vis/FL signature for reliable quantification are essential. |

Within the broader thesis of AI-driven optimization of polymerization process parameters, data is the foundational substrate. AI models, from basic regression to deep neural networks, are incapable of generating insights without structured, high-quality, and context-rich data. This document details the critical data ecosystem—its sources, types, and prerequisites—required to successfully train and validate AI models for predicting and optimizing polymerization outcomes such as molecular weight, dispersity (Đ), conversion rate, and copolymer composition.

Data Sourcing: Origin Points for AI Training

AI-ready data for polymerization can be sourced from three primary domains, each with distinct characteristics and integration challenges.

| Data Source | Description | Key Data Types | Challenges for AI |

|---|---|---|---|

| High-Throughput Experimentation (HTE) | Automated parallel synthesis platforms (e.g., Chemspeed, Unchained Labs) that rapidly generate empirical data. | Reaction conditions (T, t, [M]/[I]), real-time spectroscopic readouts (FTIR, Raman), final polymer properties (GPC, NMR). | High capital cost; requires robust experimental design (DoE) to maximize information gain. |

| Historical Lab Records & Literature | Digitized lab notebooks, internal databases, and curated data from published articles/patents. | Tabulated reaction parameters, reported polymer characteristics, failed experiment notes. | Inconsistent formatting, missing metadata, publication bias (positive results only). |

| In-line/On-line Process Analytics | Sensors integrated into reactor systems for real-time monitoring (PAT - Process Analytical Technology). | Time-series data: NIR/IR spectra, viscosity, temperature/pressure profiles, monomer consumption. | High volume, noisy data streams; requires real-time preprocessing and alignment. |

Data Types & Quantitative Representations

Polymerization data must be structured into feature (input) and target (output) variables for AI modeling.

Table 1: Core Feature Data (Model Inputs)

| Category | Specific Variables | Typical Range/Units | Measurement Method |

|---|---|---|---|

| Monomer/Species | Identity (SMILES), Concentration | 0.1 - 10.0 mol/L | Mass balance, dosing logs |

| Initiator/Catalyst | Type, Concentration, [M]/[I] | 0.001 - 0.1 mol/L | Mass balance |

| Solvent | Identity, Volume Fraction | 0 - 95% v/v | Dosing logs |

| Process Conditions | Temperature, Pressure, Time | 25-200 °C, 1-100 bar, min-hrs | Thermocouple, pressure transducer |

| Reactor Geometry | Scale, Mixing Rate (RPM) | 1 mL - 100 L, 0-1200 RPM | Equipment specification |

Table 2: Core Target Data (Model Outputs)

| Polymer Property | Metric | Typical Range | Standard Characterization |

|---|---|---|---|

| Kinetics | Conversion (%) | 0 - 100% | In-line FTIR/NIR, gravimetric analysis |

| Molar Mass | Mn (g/mol), Mw (g/mol) | 10^3 - 10^6 g/mol | Gel Permeation Chromatography (GPC) |

| Dispersity | Đ (Mw/Mn) | 1.02 - 2.5+ (broader for some mechanisms) | Calculated from GPC data |

| Composition | Copolymer sequence, % Incorporation | Variable | Nuclear Magnetic Resonance (NMR) |

| Thermal Properties | Tg, Tm (°C) | -100 to +300 °C | Differential Scanning Calorimetry (DSC) |
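The molar-mass targets in Table 2 follow from the standard moment definitions of Mn, Mw, and Đ. The sketch below assumes a discrete chain population supplied as hypothetical (count, molar mass) pairs rather than a real GPC trace; the function name is illustrative.

```python
def molar_mass_averages(chains):
    """Return (Mn, Mw, dispersity) for an iterable of (count, molar_mass) pairs."""
    total_n = sum(n for n, _ in chains)
    total_nm = sum(n * m for n, m in chains)
    total_nm2 = sum(n * m * m for n, m in chains)
    mn = total_nm / total_n      # number-average molar mass
    mw = total_nm2 / total_nm    # weight-average molar mass
    return mn, mw, mw / mn       # dispersity = Mw / Mn


# Hypothetical population: equal numbers of 10k and 30k g/mol chains.
mn, mw, dispersity = molar_mass_averages([(1, 10_000), (1, 30_000)])
```

Because Mw weights heavier chains more strongly, Đ is always at least 1, which is why values below 1.0 are treated as implausible during curation.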

Prerequisites: Data Readiness for AI

Raw data must be curated and transformed to meet AI readiness standards.

Prerequisite 1: Standardization & Metadata

- Protocol: Implement an Electronic Lab Notebook (ELN) template that enforces controlled vocabularies, SI units, and mandatory fields (e.g., catalyst batch ID, solvent purity).

- Action: Develop a Python script using `pandas` (or an equivalent R script) to ingest heterogeneous files (CSV, .txt, .xlsx), map columns to a standard schema, and output a unified `.feather` or `.parquet` file.
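One way the ingestion step could look is sketched below. The column names in `COLUMN_MAP`, the standard schema, and the two in-memory "files" are all hypothetical; a real script would read from disk and cover many more fields.

```python
import io

import pandas as pd

# Hypothetical mapping from lab-specific headers to one standard schema.
COLUMN_MAP = {
    "Temp (C)": "temperature_c",
    "temp_degC": "temperature_c",
    "rxn time [h]": "time_h",
    "Time_hr": "time_h",
    "Mn": "mn_g_mol",
    "Mn (g/mol)": "mn_g_mol",
}
STANDARD_COLUMNS = ["temperature_c", "time_h", "mn_g_mol"]


def to_standard_schema(frame: pd.DataFrame) -> pd.DataFrame:
    """Rename known columns and keep only the standard schema, in order."""
    renamed = frame.rename(columns=COLUMN_MAP)
    return renamed.reindex(columns=STANDARD_COLUMNS)


# Two in-memory "files" standing in for exports from different instruments.
file_a = io.StringIO("Temp (C),rxn time [h],Mn\n70,4,9100\n")
file_b = io.StringIO("temp_degC\tTime_hr\tMn (g/mol)\n80\t3\t8250\n")

frames = [
    to_standard_schema(pd.read_csv(file_a)),
    to_standard_schema(pd.read_csv(file_b, sep="\t")),
]
unified = pd.concat(frames, ignore_index=True)
# unified.to_parquet("polymerization.parquet")  # requires pyarrow or fastparquet
```

Keeping the mapping in one dictionary makes it easy to review and extend when a new instrument export format appears.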

Prerequisite 2: Feature Engineering

- Protocol: Calculate derived features from primary data. For example, from monomer SMILES strings, use RDKit to compute molecular descriptors (LogP, polar surface area, functional group counts). From time-series conversion, calculate instantaneous propagation rate coefficients (kp).
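As an illustration of a kinetics-derived feature, the sketch below estimates an apparent first-order rate constant from conversion data. It assumes monomer consumption is first order in monomer, so that -ln(1 - X) = k_app·t; the result is k_app = kp[P•], and isolating kp itself would additionally require the radical concentration, which is not estimated here. The data are synthetic.

```python
import math


def apparent_rate_constant(times_s, conversions):
    """Least-squares slope through the origin of -ln(1 - X) versus time."""
    y = [-math.log(1.0 - x) for x in conversions]
    numerator = sum(t * yi for t, yi in zip(times_s, y))
    denominator = sum(t * t for t in times_s)
    return numerator / denominator


# Synthetic first-order data generated with k_app = 1e-4 s^-1.
k_true = 1.0e-4
times = [600.0 * i for i in range(1, 11)]
conversions = [1.0 - math.exp(-k_true * t) for t in times]
k_app = apparent_rate_constant(times, conversions)
```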

Prerequisite 3: Curation & Outlier Management

- Protocol: Apply statistical and domain-knowledge filters.

- Domain Filter: Flag data where `Final Conversion > 120%` or `Đ < 1.0` as physically implausible.

- Statistical Filter: Use Isolation Forest or DBSCAN clustering on feature space to detect and review experimental outliers.

- Action: Create a curated dataset and a separate "flagged" dataset for review, never deleting original data.
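The domain filter can be sketched as a function that splits records into curated and flagged sets without discarding anything. The record keys (`conversion_pct`, `dispersity`) and the example rows are hypothetical; the thresholds mirror the ones stated above.

```python
def split_curated_flagged(records, max_conversion=120.0, min_dispersity=1.0):
    """Separate plausible records from flagged ones; nothing is deleted."""
    curated, flagged = [], []
    for rec in records:
        reasons = []
        if rec["conversion_pct"] > max_conversion:
            reasons.append("conversion above limit")
        if rec["dispersity"] < min_dispersity:
            reasons.append("dispersity below 1.0")
        if reasons:
            # Keep the original values and attach the reasons for review.
            flagged.append({**rec, "flag_reasons": reasons})
        else:
            curated.append(rec)
    return curated, flagged


# Hypothetical records for illustration.
records = [
    {"id": "A", "conversion_pct": 85.2, "dispersity": 1.12},
    {"id": "B", "conversion_pct": 131.0, "dispersity": 1.20},
    {"id": "C", "conversion_pct": 90.0, "dispersity": 0.95},
]
curated, flagged = split_curated_flagged(records)
```

A statistical filter such as Isolation Forest could then be run on the curated set only, so that impossible values do not distort the outlier model.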

Experimental Protocols for Foundational Data Generation

Protocol 1: High-Throughput Screening for Controlled Radical Polymerization (e.g., ATRP)

- Objective: Generate a dataset linking initiator/ligand/monomer ratios to Mn and Đ.

- Materials: See "Scientist's Toolkit" below.

- Workflow:

- DoE Preparation: Use a fractional factorial design (e.g., via `pyDOE2`) to vary [Monomer]₀/[Initiator]₀, [Ligand]/[Catalyst], and solvent %.

- HTE Setup: In an inert atmosphere glovebox, use a liquid handler to dispense solutions into a 96-well reactor block.

- Reaction Execution: Seal block, transfer to a pre-heated agitator, and run for a predetermined time (t).

- Quenching & Sampling: Automatically inject aliquots into pre-filled inhibitor vials via robotic arm.

- Parallel Analysis: Analyze all samples via automated GPC with dual detection (RI/UV).

- Data Output: A structured table with features (columns from DoE) and targets (Mn, Mw, Đ from GPC).
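The DoE preparation step can be illustrated without any DoE package. The sketch below builds a two-level half-fraction (2^(3-1)) design for the three factors named in the workflow, using the generator C = AB. The low/high levels in `FACTORS` are hypothetical placeholders, not recommended values.

```python
from itertools import product

# Hypothetical low/high levels for the three screened factors.
FACTORS = {
    "monomer_to_initiator": (50, 200),
    "ligand_to_catalyst": (1.0, 2.0),
    "solvent_pct": (25, 75),
}


def half_fraction_design():
    """2^(3-1) design in coded units: the third factor is set to C = A * B."""
    return [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]


def decode(run):
    """Translate coded levels (-1 / +1) into real factor settings."""
    return {
        name: (low if level < 0 else high)
        for (name, (low, high)), level in zip(FACTORS.items(), run)
    }


coded = half_fraction_design()
design = [decode(run) for run in coded]
```

This halves the run count relative to the full 2³ factorial, at the cost of aliasing the solvent main effect with the interaction of the two ratio factors.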

Protocol 2: In-line FTIR Monitoring for Kinetic Profile Generation

- Objective: Generate high-resolution time-series conversion data for a single reaction.

- Workflow:

- Reactor Setup: Fit a jacketed lab reactor with an ATR-FTIR flow cell, temperature probe, and overhead stirrer.

- Baseline Collection: Collect FTIR spectra of the reaction mixture pre-initiator addition. Define a monomer-specific peak (e.g., C=C stretch at ~1630 cm⁻¹) and a reference peak.

- Reaction Initiation: Add initiator solution rapidly. Start continuous spectral acquisition (1 scan/30 sec).

- Data Processing: For each spectrum, calculate conversion (X) via: `X(t) = 1 - (A_monomer(t)/A_reference(t)) / (A_monomer(0)/A_reference(0))`.

- Alignment: Timestamp and align FTIR conversion data with simultaneous temperature and stirring logs.
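The conversion formula in the data-processing step translates directly into code. The sketch below assumes peak absorbances have already been extracted from each spectrum; the numeric values are hypothetical.

```python
def conversion_from_ftir(a_monomer, a_reference):
    """X(t) = 1 - (A_monomer(t)/A_reference(t)) / (A_monomer(0)/A_reference(0))."""
    ratio_initial = a_monomer[0] / a_reference[0]
    return [
        1.0 - (a_m / a_r) / ratio_initial
        for a_m, a_r in zip(a_monomer, a_reference)
    ]


# Hypothetical peak absorbances: the monomer band decays, the reference band stays constant.
a_monomer = [0.80, 0.60, 0.40, 0.20]
a_reference = [0.50, 0.50, 0.50, 0.50]
conversion = conversion_from_ftir(a_monomer, a_reference)
```

Dividing by the reference peak compensates for drifts in path length or signal intensity that affect both bands equally.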

Visualization: Data to AI Pipeline

Diagram Title: AI-Driven Polymerization Data Pipeline

Diagram Title: Polymerization Kinetic Data Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Ready Data Generation in Polymerization

| Item | Function/Role | Example/Note |

|---|---|---|

| Automated Synthesis Platform | Enables High-Throughput Experimentation (HTE) for rapid, parallel data generation. | Chemspeed SWING, Unchained Labs Freeslate. |

| Process Analytical Technology (PAT) Probe | Provides real-time, in-line data on reaction progress (kinetics). | Mettler Toledo ReactIR (ATR-FTIR), Hamilton Incyte Raman Probe. |

| Automated Gel Permeation Chromatography | High-throughput characterization of molar mass and dispersity (Đ). | Agilent InfinityLab with autosampler, Wyatt MALS detector for absolute mass. |

| Electronic Lab Notebook (ELN) | Ensures data standardization, rich metadata capture, and provenance tracking. | Benchling, LabArchives, or custom PostgreSQL database. |

| Monomer Purification Kit | Removes inhibitors for consistent, reproducible kinetics data. | Basic Alumina column, inhibitor removers (e.g., for MEHQ), freeze-pump-thaw apparatus. |

| Catalyst/Ligand Library | Systematic variation of reaction conditions for feature space exploration. | Commercial libraries (e.g., Sigma-Aldrich's ATRP catalyst set) or synthesized variants. |

| Deuterated Solvents for NMR | For definitive end-group analysis and copolymer composition determination. | CDCl₃, DMSO-d₆, Toluene-d₈, with internal standard (e.g., TMS). |

| Data Science Software Stack | For data curation, feature engineering, and model prototyping. | Python (pandas, scikit-learn, RDKit, PyTorch), R (tidyverse), Jupyter Notebooks. |

From Data to Decision: Implementing AI Models for Polymerization Parameter Prediction

Within the broader research on AI-driven optimization of polymerization process parameters, this protocol details the construction of an integrated computational-experimental pipeline. The objective is to systematically enhance polymer properties—such as molecular weight distribution, dispersity (Đ), and yield—by leveraging machine learning (ML) to model and predict outcomes from complex, multi-variable reaction parameters.

Pipeline Architecture & Workflow

Diagram Title: AI Polymerization Optimization Pipeline

Detailed Experimental Protocols

Protocol: High-Throughput Polymerization Screening (RAFT Polymerization Example)

Objective: To generate a diverse, high-quality dataset for AI model training by systematically varying key reaction parameters.

Materials:

- Monomer (e.g., Methyl methacrylate, MMA)

- RAFT Agent (e.g., Cyanomethyl dodecyl trithiocarbonate)

- Initiator (e.g., AIBN)

- Solvent (e.g., Toluene)

- Automated liquid handling system (e.g., Chemspeed Swing)

- Parallel reactor block (e.g., 24-vial carousel with individual temperature control)

- In-line FTIR or Raman probe

- GPC/SEC system for analysis.

Procedure:

- DoE Setup: Using a software tool (e.g., JMP, Design-Expert), define a parameter space. A Central Composite Design is recommended.

- Variables: [Monomer]/[RAFT] ratio (30:1 to 150:1), [RAFT]/[Initiator] ratio (1:0.1 to 1:0.5), Temperature (60°C to 80°C), Reaction Time (2h to 8h).

- Responses: Target Mn, Đ, Conversion (%).

- Automated Recipe Preparation: Program the liquid handler to dispense precise volumes of stock solutions into numbered reaction vials according to the DoE matrix.

- Parallelized Reaction Execution: Place vials in the heated reactor block under inert atmosphere. Start reactions simultaneously.

- In-line Monitoring: Record data from spectroscopic probes at regular intervals (e.g., every 5 minutes) to track monomer conversion.

- Quenching & Sampling: At the designated time, automatically cool the vial and take an aliquot for analysis.

- Off-line Characterization: Determine molecular weight and dispersity via GPC/SEC. Calculate final conversion via ¹H NMR.

- Data Logging: Compile all input parameters and output responses into a structured CSV file for the central database.
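For the DoE setup step, a Central Composite Design can also be generated in a few lines. The sketch below uses a face-centered variant (axial distance 1) so that every run stays inside the stated variable ranges; that choice, the number of center points, and the parameter names are assumptions made for illustration.

```python
from itertools import product

# Ranges taken from the variables listed in the procedure.
RANGES = {
    "monomer_to_raft": (30, 150),
    "initiator_per_raft": (0.1, 0.5),
    "temperature_c": (60, 80),
    "time_h": (2, 8),
}


def face_centered_ccd(k, n_center=1):
    """Coded design points: 2^k factorial corners, 2k face centers, center replicates."""
    factorial = [list(p) for p in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for level in (-1, 1):
            point = [0] * k
            point[i] = level
            axial.append(point)
    center = [[0] * k for _ in range(n_center)]
    return factorial + axial + center


def decode(point):
    """Map coded levels (-1, 0, +1) onto the real parameter ranges."""
    settings = {}
    for (name, (low, high)), coded in zip(RANGES.items(), point):
        settings[name] = (low + high) / 2 + coded * (high - low) / 2
    return settings


runs = [decode(p) for p in face_centered_ccd(len(RANGES), n_center=3)]
```

With four factors this yields 16 corner runs, 8 face-center runs, and 3 center replicates, which fits comfortably in two passes of a 24-vial reactor block.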

Protocol: Data Preprocessing for ML Readiness

Objective: To clean and transform raw experimental data into a format suitable for machine learning algorithms.

Procedure:

- Data Cleaning:

- Remove experiments with obvious failure (e.g., no initiator added).

- Handle missing values: For critical features, use median imputation or flag for potential re-run.

- Feature Engineering:

- Create derived features: e.g., Total Radical Flux = f([Initiator], Temperature, Time).

- Normalize all input features (e.g., Min-Max scaling or Standard Scaling).

- Encode categorical variables (e.g., solvent type) using one-hot encoding.

- Train/Test Split: Perform a stratified random split (e.g., 80/20) to ensure the test set represents the full parameter space.
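A derived feature such as total radical flux can be sketched from first-order initiator decomposition: cumulative radicals = 2·f·[I]₀·(1 − exp(−kd·t)), with kd from an Arrhenius expression. The Arrhenius parameters and the initiator efficiency below are assumed, illustrative values for AIBN and should be replaced with values appropriate to the actual initiator and solvent.

```python
import math

R_GAS = 8.314      # J/(mol K)
A_D = 1.58e15      # 1/s, assumed pre-exponential factor for AIBN
E_A = 128.9e3      # J/mol, assumed activation energy for AIBN


def decomposition_rate_constant(temperature_c):
    """Arrhenius estimate of the initiator decomposition rate constant kd (1/s)."""
    return A_D * math.exp(-E_A / (R_GAS * (temperature_c + 273.15)))


def radical_flux(initiator_mol_l, temperature_c, time_h, efficiency=0.6):
    """Cumulative primary radicals generated (mol/L) over the reaction time."""
    kd = decomposition_rate_constant(temperature_c)
    fraction_decomposed = 1.0 - math.exp(-kd * time_h * 3600.0)
    return 2.0 * efficiency * initiator_mol_l * fraction_decomposed


# Sanity check on the assumed parameters: half-life at 65 °C, in hours.
half_life_h_65c = math.log(2) / decomposition_rate_constant(65.0) / 3600.0
flux_60 = radical_flux(0.01, 60.0, 4.0)
flux_80 = radical_flux(0.01, 80.0, 4.0)
```

Collapsing three raw inputs into one physically motivated feature can help tree-based models that would otherwise need many splits to approximate the exponential temperature dependence.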

Protocol: Model Training & Hyperparameter Optimization

Objective: To train a predictive model that maps reaction parameters to polymer properties.

Procedure:

- Model Selection: Test multiple algorithms: Gradient Boosted Trees (XGBoost), Random Forest, and Neural Networks.

- Hyperparameter Tuning: Use Bayesian Optimization (via scikit-optimize or Optuna) over 50-100 iterations to find optimal model parameters.

- For XGBoost: `max_depth` (3-10), `learning_rate` (0.01-0.3), `n_estimators` (100-500).

- Training & Validation: Train the model on the training set. Use k-fold cross-validation (k=5) to assess generalizability and prevent overfitting.

- Performance Evaluation: Evaluate the final model on the held-out test set using metrics: R², Mean Absolute Error (MAE).
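The training procedure can be sketched with scikit-learn alone. Note the substitutions: `GradientBoostingRegressor` stands in for XGBoost, and a random search stands in for Bayesian optimization, purely to keep the example dependency-light. The dataset is synthetic and its functional form is an assumption.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_absolute_error, r2_score
from sklearn.model_selection import RandomizedSearchCV, train_test_split

rng = np.random.default_rng(0)
# Synthetic stand-in data: columns are [M]/[RAFT], temperature (°C), time (h).
X = rng.uniform([30, 60, 2], [150, 80, 8], size=(120, 3))
y = 90.0 * X[:, 0] + 20.0 * (X[:, 1] - 70.0) + rng.normal(0.0, 200.0, size=120)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Random search over the hyperparameter ranges listed above, scored by 5-fold CV.
search = RandomizedSearchCV(
    GradientBoostingRegressor(random_state=0),
    param_distributions={
        "max_depth": [3, 4, 5, 6],
        "learning_rate": [0.01, 0.03, 0.1, 0.3],
        "n_estimators": [100, 200, 300],
    },
    n_iter=8,
    cv=5,
    scoring="neg_mean_absolute_error",
    random_state=0,
)
search.fit(X_train, y_train)

# Final evaluation on the held-out test set.
y_pred = search.predict(X_test)
r2 = r2_score(y_test, y_pred)
mae = mean_absolute_error(y_test, y_pred)
```

The test set is touched only once, after the search has finished, so the reported R² and MAE are not biased by hyperparameter selection.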

Table 1: Example High-Throughput Screening Dataset (Subset)

| Experiment ID | [M]:[RAFT] | Temp (°C) | Time (h) | Conversion (%) | Mn (Theo.) | Mn (GPC) | Đ |

|---|---|---|---|---|---|---|---|

| P-RAFT-01 | 50:1 | 70 | 4 | 85.2 | 8,520 | 9,100 | 1.12 |

| P-RAFT-02 | 100:1 | 70 | 6 | 91.5 | 18,300 | 19,500 | 1.18 |

| P-RAFT-03 | 50:1 | 80 | 3 | 88.7 | 8,870 | 8,250 | 1.21 |

| P-RAFT-04 | 150:1 | 65 | 8 | 78.9 | 23,670 | 25,800 | 1.32 |

Table 2: Model Performance Comparison on Test Set

| Model Type | R² (Mn Prediction) | MAE (Mn) | R² (Đ Prediction) | Optimal Hyperparameters (Example) |

|---|---|---|---|---|

| XGBoost | 0.94 | 1250 | 0.87 | max_depth=6, learning_rate=0.1 |

| Random Forest | 0.91 | 1580 | 0.82 | n_estimators=300, max_features='sqrt' |

| Neural Network (3-layer) | 0.89 | 1820 | 0.79 | layers=[64,32], dropout=0.2 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Polymerization Research

| Item | Function in Pipeline | Example/Supplier |

|---|---|---|

| Controlled Radical Polymerization (CRP) Agents | Provides predictable kinetics & structure, essential for building robust models. | RAFT agents (Boronicraft), ATRP initiators (Sigma-Aldrich). |

| Automated Synthesis Platform | Enables high-throughput, reproducible execution of DoE plans. | Chemspeed Swing, Unchained Labs Junior. |

| In-line Spectroscopic Probe | Provides real-time kinetic data for dynamic model training and monitoring. | Mettler Toledo ReactIR (FTIR), Ocean Insight Raman spectrometer. |

| Size-Exclusion Chromatography (SEC/GPC) | Delivers key target variables: absolute molecular weights and dispersity (Đ). | Agilent Infinity II, Malvern Viscotek with triple detection. |

| Machine Learning Software Suite | Platform for data preprocessing, model training, and optimization. | Python (scikit-learn, XGBoost, PyTorch), MATLAB Regression Learner. |

| Laboratory Information Management System (LIMS) | Centralized, structured data repository linking parameters to outcomes. | Benchling, LabVantage, or custom SQL database. |

Optimization & Active Learning Feedback Loop

Diagram Title: Bayesian Optimization Active Learning Loop

1. Introduction

This application note details a methodology from a broader thesis on AI-driven optimization of polymerization process parameters. It demonstrates the use of machine learning (ML) to systematically optimize Poly(lactic-co-glycolic acid) (PLGA) nanoparticle synthesis via nanoprecipitation, targeting controlled release of a model hydrophobic drug (e.g., curcumin). The goal is to minimize manual experimentation and derive predictive relationships between process parameters and critical quality attributes (CQAs).

2. Research Reagent Solutions & Essential Materials

| Item | Function / Rationale |

|---|---|

| PLGA 50:50 (Acid-terminated) | Biodegradable copolymer; 50:50 LA:GA ratio offers moderate degradation kinetics. Acid end groups influence drug encapsulation and release. |

| Model Drug (Curcumin) | Hydrophobic, fluorescent compound used as a model payload for release studies and encapsulation efficiency analysis. |

| Acetone (HPLC Grade) | Water-miscible organic solvent for dissolving PLGA and drug during nanoprecipitation. |

| Aqueous Phase (PVA Solution) | Polyvinyl alcohol solution acts as a stabilizer, preventing nanoparticle aggregation during formation and solvent evaporation. |

| Phosphate Buffered Saline (PBS, pH 7.4) | Standard medium for in vitro drug release studies, simulating physiological conditions. |

| Dialysis Membranes (MWCO 12-14 kDa) | Used to separate nanoparticles from free drug during purification and to contain nanoparticles during release studies. |

3. AI-Optimization Workflow & Experimental Protocol

3.1. Core Experimental Protocol: PLGA Nanoparticle Synthesis via Nanoprecipitation

- Organic Phase Preparation: Dissolve PLGA (50:50, 100 mg) and curcumin (10 mg) in 20 mL of acetone under magnetic stirring until fully dissolved.

- Aqueous Phase Preparation: Dissolve Polyvinyl Alcohol (PVA, 1% w/v) in 100 mL of deionized water.

- Nanoprecipitation: Inject the organic phase into the aqueous phase (maintained under magnetic stirring at 600 rpm) using a syringe pump at a controlled rate (e.g., 1 mL/min).

- Solvent Evaporation: Stir the resulting suspension for 4 hours at room temperature to allow complete evaporation of acetone.

- Purification: Concentrate and wash nanoparticles via centrifugation (20,000 x g, 30 min, 4°C). Resuspend the pellet in deionized water or PBS. Repeat twice.

- Lyophilization: Freeze the purified nanoparticle suspension and lyophilize for 48h to obtain a dry powder for long-term storage.

3.2. AI/ML-Guided Optimization Framework

- Step 1: Define Input Parameters (X) & Output CQAs (Y):

- Inputs (Process Parameters): PLGA concentration, Drug:Polymer ratio, Aqueous:Organic phase volume ratio, PVA concentration, Injection rate.

- Outputs (CQAs): Particle Size (nm), Polydispersity Index (PDI), Encapsulation Efficiency (EE%), Initial Burst Release (24h), Sustained Release Kinetics.

- Step 2: Design of Experiments (DoE): A Central Composite Design (CCD) is employed to generate an initial dataset spanning the multi-dimensional parameter space with minimal experimental runs.

- Step 3: High-Throughput Characterization: Automated Dynamic Light Scattering (DLS) for size/PDI, and HPLC for drug content/release profiling.

- Step 4: Model Training & Optimization: An ensemble ML model (e.g., Random Forest or Gradient Boosting Regressor) is trained on the DoE data to predict CQAs from inputs. A Bayesian Optimization loop then suggests new parameter sets to iteratively find the global optimum for a defined objective (e.g., minimize size while maximizing EE% and achieving target release profile).

- Step 5: Validation: The AI-predicted optimal formulation is synthesized and characterized experimentally to validate model accuracy.
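An optimization loop of this kind needs a single scalar objective that combines competing CQAs. One common option, sketched below, is a desirability function: each CQA is mapped to [0, 1] and the geometric mean is taken. The acceptable bounds for size and EE% are hypothetical; the two example formulations use the size and EE% values reported for the baseline and AI-optimized formulations in this note.

```python
def clamp01(value):
    """Restrict a value to the interval [0, 1]."""
    return max(0.0, min(1.0, value))


def desirability(size_nm, ee_pct, size_bounds=(100.0, 250.0), ee_bounds=(60.0, 95.0)):
    """Geometric mean of a smaller-is-better size score and a larger-is-better EE score."""
    size_low, size_high = size_bounds
    ee_low, ee_high = ee_bounds
    d_size = clamp01((size_high - size_nm) / (size_high - size_low))
    d_ee = clamp01((ee_pct - ee_low) / (ee_high - ee_low))
    return (d_size * d_ee) ** 0.5


baseline_score = desirability(size_nm=167, ee_pct=79)
optimized_score = desirability(size_nm=145, ee_pct=86)
```

The geometric mean drops to zero if any single CQA is unacceptable, which prevents the optimizer from trading a failed attribute against an excellent one.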

4. Data Summary from AI-Optimization Study

Table 1: DoE Input Parameters and Measured CQAs (Sample Subset)

| Exp. Run | PLGA Conc. (mg/mL) | Drug:Polymer Ratio (%) | Injection Rate (mL/min) | Size (nm) | PDI | EE% |

|---|---|---|---|---|---|---|

| 1 | 10 | 5 | 0.5 | 182 ± 4 | 0.12 | 68 ± 3 |

| 2 | 25 | 5 | 2.0 | 155 ± 6 | 0.08 | 75 ± 2 |

| 3 | 10 | 15 | 2.0 | 221 ± 8 | 0.21 | 82 ± 4 |

| 4 | 25 | 15 | 0.5 | 189 ± 5 | 0.15 | 88 ± 3 |

| 5 (Center) | 17.5 | 10 | 1.25 | 167 ± 3 | 0.10 | 79 ± 2 |

Table 2: Comparison of Baseline vs. AI-Optimized Formulation

| Formulation | Predicted Size (nm) | Actual Size (nm) | PDI | EE% | 24h Burst Release |

|---|---|---|---|---|---|

| Baseline (DoE Center) | - | 167 ± 3 | 0.10 | 79 ± 2% | 32 ± 4% |

| AI-Optimized (Target: Min Size, EE >85%) | 142 | 145 ± 2 | 0.06 | 86 ± 1% | 25 ± 2% |

5. Visualization of Workflows

AI-Driven PLGA Nano-Optimization Loop

PLGA Nanoparticle Drug Release Mechanism

Application Notes

The integration of Machine Learning (ML) with Reversible Addition-Fragmentation Chain-Transfer (RAFT) polymerization represents a paradigm shift in the synthesis of precision drug-polymer conjugates. This approach directly supports the thesis of AI-driven optimization of polymerization process parameters by replacing empirical, trial-and-error methodologies with predictive, data-driven design. The primary application is the de novo design and optimization of polymeric nanocarriers with precisely controlled Drug Loading Capacity (DLC), release kinetics, and biodistribution profiles.

Key AI/ML Applications:

- Predictive Modeling of Polymer Properties: ML models (e.g., Random Forest, Gradient Boosting, Neural Networks) are trained on historical experimental datasets to predict critical conjugate characteristics, such as molecular weight (Mn, Mw), dispersity (Đ), and copolymer composition, from initial monomer ratios, RAFT agent choice, and reaction conditions (temperature, time, solvent).

- Inverse Design for Target Specifications: Given a target DLC (e.g., 15%) and release profile (e.g., sustained release over 72 hours in endosomal pH), ML algorithms can reverse-engineer the optimal combination of hydrophobic/hydrophilic monomer blocks and chain lengths.

- Real-Time Reaction Monitoring & Control: Coupling ML with inline spectroscopic sensors (e.g., Raman, NIR) enables real-time prediction of conversion and molecular weight, allowing for dynamic adjustment of parameters to maintain living polymerization characteristics and achieve target specifications.

Quantitative Impact Summary: The following table summarizes documented improvements from implementing ML in RAFT processes for conjugate synthesis.

Table 1: Quantitative Improvements from ML Integration in RAFT for Conjugates

| Metric | Traditional Optimization | ML-Guided Optimization | Improvement Factor | Key Enabling ML Model |

|---|---|---|---|---|

| Time to Optimize Formulation | 6-12 months (empirical) | 4-8 weeks (predictive screening) | ~6x faster | Bayesian Optimization |

| Dispersity (Đ) Control | Typical Đ: 1.2 - 1.5 | Achievable Đ: 1.05 - 1.15 | ~30% tighter control | Support Vector Regression |

| Drug Loading Efficiency | 60-75% (variable) | 85-95% (precise) | ~25% increase | Artificial Neural Network |

| Batch-to-Batch Consistency | High variability (CV > 15%) | Low variability (CV < 5%) | >3x more consistent | Random Forest |

| Successful In Vivo Targeting | 20-30% of designs | 60-70% of designed candidates | ~2-3x higher success rate | Graph Neural Networks |

Experimental Protocols

Protocol 2.1: Data Curation and Feature Engineering for ML Model Training

Objective: To assemble a structured, high-quality dataset for training predictive ML models on RAFT polymerization outcomes.

Materials:

- Data Sources: Internal laboratory notebooks, published literature (PubMed, Scopus), polymer databases (PolyInfo).

- Software: Python (Pandas, NumPy, RDKit), Jupyter Notebook.

Methodology:

- Data Extraction: Compile entries for RAFT polymerization reactions yielding drug-polymer conjugates. Key data points per entry:

- Input Features: Monomer 1/2 SMILES, RAFT agent SMILES, [M]:[RAFT]:[I] ratios, solvent (log P, polarity index), temperature (°C), time (h).

- Output Targets: Mn,theo (calculated), Mn,exp (GPC), Đ, final DLC (% w/w), drug release t50 (h).

- Feature Calculation: Use RDKit to compute molecular descriptors for monomers and RAFT agents (e.g., molecular weight, log P, topological polar surface area, number of H-bond donors/acceptors).

- Data Cleaning: Remove entries with missing critical data or obvious outliers (e.g., Đ > 2.0 for a well-controlled RAFT). Normalize numerical features (e.g., temperature, ratios) to a [0,1] range.

- Dataset Splitting: Split the curated dataset into Training (70%), Validation (15%), and Test (15%) sets, ensuring a representative distribution of conjugate types across all sets.

Protocol 2.2: ML-Guided Synthesis of a pH-Sensitive Doxorubicin-P(HPMA-co-DMAEMA) Conjugate

Objective: To synthesize a conjugate with a target DLC of 10% and sustained release at pH 5.0, using ML-predicted optimal parameters.

Materials:

- Monomers: N-(2-Hydroxypropyl) methacrylamide (HPMA), 2-(Dimethylamino)ethyl methacrylate (DMAEMA).

- RAFT Agent: 4-Cyano-4-[(dodecylsulfanylthiocarbonyl)sulfanyl]pentanoic acid (CDTPA).

- Initiator: 2,2'-Azobis(2-methylpropionitrile) (AIBN).

- Drug Linker: Doxorubicin-hydrochloride, with a pH-sensitive hydrazone linker precursor.

- Solvent: Anhydrous 1,4-Dioxane.

- ML Tool: Pre-trained Random Forest regression model (from Protocol 2.1 data).

Methodology:

- Parameter Prediction: Input the target properties (DLC=10%, Mn ~20 kDa, high pH-sensitivity) into the trained ML model. The model outputs the recommended parameters:

- Feature: [HPMA]:[DMAEMA] molar ratio = 85:15

- Feature: [M]:[RAFT]:[I] = 100:1:0.2

- Feature: Temperature = 68°C

- Feature: Time = 8 hours

- Polymer Synthesis (RAFT Polymerization): a. In a dried Schlenk flask, dissolve HPMA (1.70 g, 11.9 mmol), DMAEMA (0.30 g, 1.9 mmol), CDTPA (55.7 mg, 0.138 mmol), and AIBN (4.5 mg, 0.0276 mmol) in 8 mL anhydrous 1,4-dioxane. b. Degas the solution by performing three freeze-pump-thaw cycles. Backfill with N2 after the final cycle. c. Immerse the flask in a pre-heated oil bath at 68°C for 8 hours with stirring. d. Terminate the reaction by rapid cooling in liquid N2 and exposure to air. e. Precipitate the polymer (P(HPMA-co-DMAEMA)-RAFT) into cold diethyl ether, collect by filtration, and dry in vacuo.

- Post-Polymerization Modification & Conjugation: a. Activate the terminal RAFT acid group of the purified polymer (500 mg) with N-Hydroxysuccinimide (NHS) and N,N'-Dicyclohexylcarbodiimide (DCC) in DCM for 12 h. b. React the activated polymer with the hydrazone-functionalized doxorubicin derivative (55 mg, stoichiometry calculated for ~10% DLC) in DMF with triethylamine for 24 h protected from light. c. Purify the conjugate via extensive dialysis (MWCO 3.5 kDa) against DMSO/water mixtures and finally water. Lyophilize to obtain the final red powder.

- Validation: Characterize the conjugate via 1H NMR (to calculate experimental DLC), GPC (for Mn and Đ), and in vitro drug release studies at pH 7.4 and 5.0. Compare results to ML predictions.
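The reagent quantities for the polymerization step follow from the [M]:[RAFT]:[I] ratio and the monomer masses. The sketch below shows that arithmetic; the molar masses are values assumed from the molecular formulas of each reagent and should be checked against the supplier's certificate of analysis.

```python
# Assumed molar masses in g/mol, derived from molecular formulas.
MOLAR_MASS = {"HPMA": 143.18, "DMAEMA": 157.21, "CDTPA": 403.67, "AIBN": 164.21}


def raft_recipe(monomer_masses_g, m_to_raft=100.0, initiator_per_raft=0.2):
    """Compute RAFT agent and initiator amounts for a given total monomer charge."""
    monomer_mmol = {
        name: 1000.0 * mass / MOLAR_MASS[name]
        for name, mass in monomer_masses_g.items()
    }
    total_mmol = sum(monomer_mmol.values())
    raft_mmol = total_mmol / m_to_raft
    aibn_mmol = raft_mmol * initiator_per_raft
    return {
        "monomer_mmol": monomer_mmol,
        "raft_mmol": raft_mmol,
        "raft_mg": raft_mmol * MOLAR_MASS["CDTPA"],
        "aibn_mmol": aibn_mmol,
        "aibn_mg": aibn_mmol * MOLAR_MASS["AIBN"],
    }


recipe = raft_recipe({"HPMA": 1.70, "DMAEMA": 0.30})
hpma_mol_fraction = recipe["monomer_mmol"]["HPMA"] / sum(recipe["monomer_mmol"].values())
```

Encoding the recipe as a function lets the same calculation be reused whenever the model recommends a different monomer ratio or [M]:[RAFT]:[I] setting.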

Diagrams

ML-RAFT Conjugate Development Workflow

Key Signaling Pathways in Polymer-Drug Conjugate Action

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for ML-Enhanced RAFT Conjugate Research

| Item | Function/Benefit | Example/Note |

|---|---|---|

| Functional RAFT Agents | Provide living polymerization control and a handle for post-polymerization drug conjugation. | CDTPA (acid), MATT (hydroxyl), CPADB (acid). Enable precise Mn and low Đ. |

| Functional Monomers | Impart key properties (solubility, stealth, stimuli-responsiveness) to the polymer backbone. | HPMA (hydrophilic, biocompatible), DMAEMA (pH-responsive), PEGMA (stealth). |

| Bioorthogonal Linker Kits | Facilitate clean, efficient conjugation of drugs/proteins to polymer termini or side chains. | Click chemistry (CuAAC, SPAAC), NHS ester, Maleimide-thiol kits. Ensure high DLC. |

| AI/ML Software Suite | Enables data curation, feature engineering, model training, and prediction. | Python (scikit-learn, PyTorch), commercial platforms (MATLAB, DataRobot). Core to thesis. |

| Inline Analytic Sensors | Provide real-time reaction data for ML model feedback and adaptive process control. | ReactIR (FTIR), inline GPC/SEC, Raman probes. Generate high-frequency temporal data. |

| Specialized Purification Systems | Essential for isolating precise polymer-drug conjugates from unreacted components. | Automated FPLC/SEC systems, centrifugal filters (MWCO), dialysis kits. Ensure purity. |

This document provides application notes and experimental protocols for integrating Artificial Intelligence (AI) with Process Analytical Technology (PAT) for real-time control of polymerization processes. This work is framed within a broader thesis on AI-driven optimization of polymerization process parameters, specifically targeting continuous pharmaceutical manufacturing of polymeric drug delivery systems. The focus is on achieving consistent Critical Quality Attributes (CQAs) through closed-loop feedback control.

Key Application Notes

AI-PAT Integration Architecture for Polymerization

The core architecture involves a synergistic loop:

- PAT Sensors: Provide real-time, multivariate data (e.g., NIR, Raman, MIR spectrometers, inline viscometers).

- Data Hub: Acquires, time-aligns, and pre-processes sensor data.

- AI/ML Models: Convert processed spectra/readings into real-time predictions of CQAs (e.g., monomer conversion, molecular weight, dispersity).

- Process Controller: Uses AI model outputs to adjust process parameters (e.g., initiator feed rate, temperature, monomer flow) via a Model Predictive Control (MPC) algorithm to maintain setpoints.

- Digital Twin: A dynamic process model, continuously updated with operational data, used for simulation, controller tuning, and offline optimization.

The following table summarizes results from recent studies implementing AI-PAT for polymerization control.

Table 1: Comparative Performance of AI-PAT Control Strategies in Polymerization

| Controlled Polymerization Type | PAT Tool (Primary) | AI/ML Model Function | Key Controlled Variable | Reported Improvement vs. Batch | Reference Year |

|---|---|---|---|---|---|

| Free Radical (Solution) | Inline NIR Spectroscopy | PLS Regression | Monomer Conversion | 58% reduction in batch-to-batch variability | 2023 |

| Reversible Addition-Fragmentation Chain-Transfer (RAFT) | Inline Raman Spectroscopy | Convolutional Neural Network (CNN) | Number-Average Molecular Weight (Mn) | Mn control within ±2.5% of setpoint | 2024 |

| Ring-Opening Polymerization | Reactor Calorimetry + NIR | Hybrid Physics-Informed Neural Network (PINN) | Copolymer Composition | 75% reduction in off-spec material during start-up | 2023 |

| Emulsion Polymerization | Inline MIR Spectroscopy | Support Vector Machine (SVM) | Particle Size Distribution | Achieved sustained PSD within ±5 nm target | 2022 |

Experimental Protocols

Protocol: Development and Deployment of an AI-PAT Controller for a Model RAFT Polymerization

Aim: To establish a closed-loop system for controlling the molecular weight of poly(methyl methacrylate) (PMMA) using inline Raman and an AI model.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- PAT Sensor Installation & Calibration:

- Install an immersion Raman probe with a 785 nm laser directly into the reactor.

- Perform a calibration transfer using a standard solvent (e.g., toluene) to ensure signal stability.

- Collect background spectra of all individual components (monomer, solvent, chain transfer agent).

- Design of Experiments (DoE) for AI Model Training:

- Execute a series of open-loop batch or semi-batch reactions.

- Vary key process parameters (e.g., initiator concentration, temperature, feed rates) across a defined design space using a Central Composite Design.

- Acquire Raman spectra every 30 seconds throughout each run.

- Withdraw discrete samples at pre-defined timepoints for offline reference analysis using GPC (for Mn, Đ) and NMR (for conversion).

- Data Pre-processing & Model Training:

- Process spectra: perform baseline correction (asymmetric least squares), vector normalization, and Savitzky-Golay smoothing.

- Align spectral timestamps with offline analytical results.

- Split data: 70% training, 15% validation, 15% testing.

- Train a 1D Convolutional Neural Network (CNN) or Partial Least Squares (PLS) regression model. Input: processed Raman spectra. Output: predicted Mn and conversion.

- Validate model performance on test set. Target: RMSEP for Mn < 3% of target value.
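Two of the simpler pieces of this step, vector normalization and the RMSEP acceptance check, are sketched below. Baseline correction and Savitzky-Golay smoothing are omitted, and the spectrum and Mn values are hypothetical.

```python
import math


def vector_normalize(spectrum):
    """Scale a spectrum to unit Euclidean length."""
    norm = math.sqrt(sum(v * v for v in spectrum))
    return [v / norm for v in spectrum]


def rmsep_percent(y_true, y_pred, target):
    """Root-mean-square error of prediction, expressed as a percentage of the target."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return 100.0 * math.sqrt(mse) / target


normalized = vector_normalize([3.0, 4.0])

# Hypothetical GPC reference values versus model predictions for Mn (g/mol).
mn_reference = [20_000, 21_000, 19_000]
mn_predicted = [20_300, 20_700, 19_400]
rmsep = rmsep_percent(mn_reference, mn_predicted, target=20_000)
meets_target = rmsep < 3.0
```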

- Controller Implementation & Closed-Loop Operation:

- Integrate trained AI model into process control software (e.g., via Python OPC UA client).

- Define setpoint trajectory for target Mn.

- Implement a Model Predictive Control (MPC) algorithm. The AI model serves as the internal "soft sensor" for the MPC.

- The MPC calculates optimal adjustments to the initiator pump feed rate every 60 seconds to minimize deviation from the Mn setpoint.

- Initiate a closed-loop polymerization. Monitor AI-predicted Mn vs. setpoint and occasional GPC validation samples.
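The closed-loop idea can be illustrated with a one-step-ahead predictive controller, which is a much-simplified stand-in for full MPC (no multi-step horizon, no constraints beyond a bounded candidate grid). The plant model, its coefficients, and the feed-rate range are toy assumptions; in a real system the soft-sensor prediction would replace `plant_step` inside the controller.

```python
def plant_step(mn, feed_rate):
    """Toy first-order response: a higher initiator feed lowers the Mn the system relaxes to."""
    mn_steady = 30_000.0 - 2_000.0 * feed_rate
    return mn + 0.3 * (mn_steady - mn)


def choose_feed_rate(mn_now, setpoint, candidates):
    """Pick the feed rate whose predicted next-step Mn is closest to the setpoint."""
    return min(candidates, key=lambda u: (plant_step(mn_now, u) - setpoint) ** 2)


candidates = [i / 10 for i in range(0, 101)]  # hypothetical range: 0.0 to 10.0 mL/h
setpoint = 20_000.0
mn = 28_000.0
history = []
for _ in range(20):  # each iteration represents one 60-second control interval
    feed = choose_feed_rate(mn, setpoint, candidates)
    mn = plant_step(mn, feed)
    history.append((feed, mn))

final_feed, final_mn = history[-1]
```

The controller first saturates the feed rate to pull Mn down quickly, then settles at the feed rate whose steady state matches the setpoint.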

Diagram: AI-PAT Closed-Loop Control Workflow

Diagram Title: AI-PAT Closed-Loop Control Workflow for RAFT Polymerization

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials for AI-PAT Polymerization Experiments

| Item Name | Function in Experiment | Key Specification / Note |

|---|---|---|

| Inline Raman Spectrometer with Immersion Probe | Provides real-time molecular vibrational data on reaction progress. | 785 nm laser wavelength to minimize fluorescence; compatible with reactor pressure/temperature. |

| Chemometric Software (e.g., Unscrambler, SIMCA, Python Scikit-learn) | Used for developing PLS, SVM, or other ML models for spectral analysis. | Must support real-time API for model deployment. |

| Deep Learning Framework (e.g., PyTorch, TensorFlow) | For building and training complex models like CNNs or PINNs. | Essential for non-linear, high-dimensional spectral data. |

| Process Control & Data Acquisition (PC-DAQ) System | Interfaces with sensors, actuators, and executes the control algorithm. | Should support OPC UA or Modbus protocols for interoperability. |

| Calibration Standards for PAT | Validates sensor performance and enables model transfer. | e.g., NIST-traceable spectral standards for Raman; solvent for background. |

| Reference Analytics (GPC/SEC System) | Provides ground truth data for molecular weight and dispersity (Đ) for AI model training. | Multi-angle light scattering detector recommended for absolute MW. |

| Programmable Syringe Pumps | Precise delivery of initiators, monomers, or chain transfer agents as manipulated variables. | Flow rate resolution < 0.1% of full scale for fine control. |

| Chemical Reagents: Chain Transfer Agent (e.g., CDB) | Enables controlled radical polymerization (RAFT) for predictable molecular weight. | High purity (>99%) to ensure consistent kinetic behavior. |

Application Notes: AI/ML Platforms for Polymerization Research

Within the context of AI-driven optimization of polymerization process parameters (e.g., temperature, catalyst concentration, monomer feed rate), selecting the appropriate software platform is critical. The following platforms facilitate data analysis, model development, and predictive optimization for research labs.

Table 1: Comparison of Accessible AI/ML Software Platforms

| Platform Name | Primary Access Model | Key Features for Polymerization Research | Best Suited For | Quantitative Metric (Typical) |

|---|---|---|---|---|

| Google Colab | Free cloud-based notebook | Pre-installed ML libraries (TensorFlow, PyTorch), GPU access, collaboration | Prototyping models, educational demos, shared analysis | Free GPU: ~12GB RAM; Pro: ~52GB RAM |

| Jupyter Notebook/Lab | Open-source, local install | Interactive coding, extensive library support (scikit-learn, pandas), reproducibility | Exploratory data analysis, custom pipeline development | Local resource dependent |

| KNIME Analytics Platform | Open-source, desktop | Visual workflow design, data blending, cheminformatics nodes, model deployment | Visual data preprocessing, integrating chemical properties | Nodes: 1000+; Free & commercial editions |

| H2O.ai | Open-source & commercial | Automated ML (AutoML), scalable machine learning, model interpretability (SHAP) | Automated model benchmarking, feature importance in polymer properties | AutoML runtime: 1-3600+ user-defined secs |

| Weka | Open-source, GUI | Collection of ML algorithms, data preprocessing tools, visualization | Classical ML application, classification of polymer outcomes | Algorithms: 100+ |

| Orange | Open-source, visual programming | Widget-based visual interface, interactive data visualization, add-ons for bio/chem | Intuitive exploration of polymer datasets without coding | Widgets: 100+; Add-ons: 10+ |

| PyCaret | Open-source Python library | Low-code ML, experiment tracking, model comparison and deployment | Rapid iteration and comparison of regression models for parameter prediction | Lines of code reduction: ~5x vs. traditional coding |

| MATLAB with ML & Optimization Toolboxes | Commercial, institutional licenses | Comprehensive algorithmic suite, extensive visualization, Simulink for simulation | Integrating first-principles models with ML, control system design | Toolboxes: 50+; Algorithms: 1000+ |

Experimental Protocols

Protocol 1: Developing a Predictive Model for Polymer Molecular Weight Using Jupyter & Scikit-Learn

Objective: To train a regression model predicting weight-average molecular weight (Mw) based on polymerization reactor parameters.

Materials: Dataset of historical runs (parameters: temperature, pressure, catalyst concentration [cat], time, feed rate; outcome: Mw).

Software: Jupyter Lab, Python 3.9+, libraries: pandas, numpy, scikit-learn, matplotlib, seaborn.

Data Preparation:

- Import dataset using `pandas.read_csv()`.

- Handle missing values (e.g., median imputation) and normalize numerical features using `sklearn.preprocessing.StandardScaler`.

- Encode any categorical variables (e.g., catalyst type) using `sklearn.preprocessing.OneHotEncoder`.

- Split data into training (70%), validation (15%), and test (15%) sets using `sklearn.model_selection.train_test_split`. Fit the imputer, scaler, and encoder on the training set only and apply them unchanged to the validation and test sets, so that no information leaks from the held-out data.
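The preparation steps above could be sketched as follows. This is a minimal illustration, not the protocol's actual code: the column names (`temp`, `pressure`, `cat_conc`, `time`, `feed_rate`, `catalyst_type`, `Mw`) and the synthetic data standing in for the `pandas.read_csv()` result are assumptions. The imputer, scaler, and encoder are fitted on the training split only.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Synthetic stand-in for pd.read_csv(...); every column name here is hypothetical.
rng = np.random.default_rng(0)
n = 200
df = pd.DataFrame({
    "temp": rng.uniform(60, 90, n),
    "pressure": rng.uniform(1, 5, n),
    "cat_conc": rng.uniform(0.1, 1.0, n),
    "time": rng.uniform(1, 8, n),
    "feed_rate": rng.uniform(0.5, 2.0, n),
    "catalyst_type": rng.choice(["A", "B"], n),
})
df["Mw"] = 20_000 + 300 * df["temp"] + 1_500 * df["time"] - 10_000 * df["cat_conc"]
df.loc[df.sample(10, random_state=0).index, "pressure"] = np.nan  # simulate missing values

numeric = ["temp", "pressure", "cat_conc", "time", "feed_rate"]
categorical = ["catalyst_type"]
X, y = df[numeric + categorical], df["Mw"]

# 70 % training; the remaining 30 % is split evenly into validation and test.
X_train, X_rest, y_train, y_rest = train_test_split(X, y, test_size=0.30, random_state=0)
X_val, X_test, y_val, y_test = train_test_split(X_rest, y_rest, test_size=0.50, random_state=0)

preprocess = ColumnTransformer([
    # Median imputation followed by standardization for numeric features.
    ("num", Pipeline([("impute", SimpleImputer(strategy="median")),
                      ("scale", StandardScaler())]), numeric),
    # One-hot encoding for categorical features.
    ("cat", OneHotEncoder(handle_unknown="ignore"), categorical),
])

X_train_t = preprocess.fit_transform(X_train)  # statistics learned from training data only
X_val_t = preprocess.transform(X_val)
X_test_t = preprocess.transform(X_test)
```

Wrapping the transformers in a `ColumnTransformer` keeps the fitted preprocessing together with the model, so the same object can later be saved and reused on new runs.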

Model Training & Selection:

- Train multiple algorithms on the training set:

  - Random Forest Regressor (`sklearn.ensemble.RandomForestRegressor`)

  - Gradient Boosting Regressor (`sklearn.ensemble.GradientBoostingRegressor`)

  - Support Vector Regressor (`sklearn.svm.SVR`)

- Tune hyperparameters (e.g., `n_estimators`, `max_depth`) using grid search (`sklearn.model_selection.GridSearchCV`), which scores each candidate setting by cross-validation within the training set.

- Select the model with the lowest Mean Absolute Error (MAE) on the validation set.
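A minimal sketch of the training and selection step follows, under stated assumptions: the data are synthetic, the hyperparameter grids are deliberately small and illustrative, and the SVR is wrapped in a scaling pipeline (a choice made here, not one stated in the protocol). The test set is assumed to be held out separately and is not shown.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Synthetic stand-in for the prepared features and Mw values (hypothetical).
rng = np.random.default_rng(1)
X = rng.uniform(0, 1, size=(150, 5))
y = (40_000 + 30_000 * X[:, 0] - 15_000 * X[:, 2]
     + 5_000 * X[:, 3] * X[:, 4] + rng.normal(0, 500, 150))

X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.2, random_state=1)

# Candidate estimators, each paired with a small illustrative grid.
candidates = {
    "random_forest": (RandomForestRegressor(random_state=1),
                      {"n_estimators": [50, 100], "max_depth": [3, None]}),
    "gradient_boosting": (GradientBoostingRegressor(random_state=1),
                          {"n_estimators": [50, 100], "max_depth": [2, 3]}),
    "svr": (make_pipeline(StandardScaler(), SVR()),
            {"svr__C": [1e3, 1e5], "svr__epsilon": [10, 100]}),
}

val_mae, fitted = {}, {}
for name, (estimator, grid) in candidates.items():
    # GridSearchCV scores each setting by 3-fold cross-validation on the training data.
    search = GridSearchCV(estimator, grid, scoring="neg_mean_absolute_error", cv=3)
    search.fit(X_train, y_train)
    fitted[name] = search.best_estimator_
    # The separate validation set is used only to compare the tuned models.
    val_mae[name] = mean_absolute_error(y_val, search.predict(X_val))

best_name = min(val_mae, key=val_mae.get)
print(best_name, round(val_mae[best_name], 1))
```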

Model Evaluation:

- Apply the final model to the held-out test set.

- Calculate and report key metrics: MAE, R² score, and Mean Squared Error (MSE).

- Generate a parity plot (predicted vs. actual Mw) to visualize performance.
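The three reported metrics can be written out explicitly, as in the sketch below. The Mw values are hypothetical test-set numbers chosen for illustration; in practice `sklearn.metrics.mean_absolute_error`, `mean_squared_error`, and `r2_score` compute the same quantities.

```python
from statistics import fmean

def regression_metrics(y_true, y_pred):
    """Return MAE, MSE and R² for paired actual and predicted values."""
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mae = fmean(abs(r) for r in residuals)
    mse = fmean(r * r for r in residuals)
    mean_true = fmean(y_true)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    ss_res = sum(r * r for r in residuals)              # residual sum of squares
    return {"MAE": mae, "MSE": mse, "R2": 1.0 - ss_res / ss_tot}

# Hypothetical test-set values of Mw in g/mol.
mw_actual = [42_000, 55_000, 61_000, 48_000]
mw_predicted = [43_000, 53_000, 62_000, 48_000]
metrics = regression_metrics(mw_actual, mw_predicted)
print(metrics)
```

MAE is expressed in the same units as Mw, which makes it the easiest of the three to compare against the uncertainty of the SEC measurement itself.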

Deployment for Optimization:

- Save the trained model using `joblib.dump`.

- Integrate the model into an optimization loop (e.g., using `scipy.optimize`) to suggest parameter sets for target Mw.
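One way such an optimization loop might look is sketched below. The function `predict_mw` is a hypothetical linear surrogate standing in for the saved model's prediction; its coefficients, the parameter bounds, and the target Mw are all assumptions made for illustration.

```python
import numpy as np
from scipy.optimize import minimize

def predict_mw(params):
    """Hypothetical surrogate for a loaded model's prediction; not fitted to real data."""
    temp_c, cat_conc, time_h = params
    return 20_000 + 400 * temp_c + 6_000 * time_h - 25_000 * cat_conc

target_mw = 60_000
# Assumed operating ranges: temperature (deg C), catalyst concentration, time (h).
bounds = [(60, 90), (0.1, 1.0), (1, 8)]
x0 = np.array([75.0, 0.5, 4.0])  # starting point, e.g. the current recipe

def objective(params):
    # Squared relative deviation from the target keeps the objective well scaled.
    return ((predict_mw(params) - target_mw) / target_mw) ** 2

result = minimize(objective, x0, bounds=bounds, method="L-BFGS-B")
suggested = result.x
print(suggested, predict_mw(suggested))
```

Because many parameter combinations can reach the same Mw, the suggestion depends on the starting point. Adding a penalty term for distance from the current recipe, or for cost, is one way to make the answer unique.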

Protocol 2: Automated ML (AutoML) Workflow for Reaction Yield Prediction Using H2O

Objective: To rapidly benchmark and deploy the best-performing model for predicting polymerization reaction yield.

Materials: Cleaned dataset of reaction conditions and corresponding yield percentages.

Software: H2O.ai platform (Python API h2o).

Environment Setup:

- Initialize H2O cluster: `h2o.init()`.

- Import data into H2O Frame: `h2o.import_file()`.

AutoML Execution:

- Define feature columns and target column ('yield').

- Run AutoML: `aml = H2OAutoML(max_runtime_secs=300, seed=1)` followed by `aml.train()`.

- The system automatically trains a suite of models (GLM, GBMs, XGBoost, etc.) and performs cross-validation.

Analysis & Interpretation:

- Retrieve the leaderboard: `lb = aml.leaderboard`.

- Examine the top model. Generate SHAP (SHapley Additive exPlanations) values for the best model to interpret feature contributions to yield predictions.

- Evaluate the top model on a separate test set.

Model Export:

- Save the winning model as a POJO (Plain Old Java Object) or MOJO (Model Object, Optimized) for low-latency deployment in a production-like environment for real-time parameter suggestion.

Protocol 3: Visual Data Mining for Polymer Property Classification Using Orange

Objective: To identify patterns and classify polymers into high/low toughness groups using an intuitive visual interface. Materials: Dataset containing polymer structural descriptors and measured toughness. Software: Orange Data Mining platform.

Workflow Construction:

- Drag and drop the File widget to load the dataset.

- Connect it to a Data Table widget to inspect data.

- Connect to a Select Columns widget to choose features and the target class (toughness group).

Exploratory Analysis:

- Connect data to a Distributions widget to view feature distributions per class.

- Connect to a Scatter Plot widget. Use PCA or t-SNE (Transform widget) to reduce dimensions and visualize clustering.

Model Building & Evaluation:

- Connect data to the Test & Score widget.

- Add learners (e.g., Random Forest, SVM, kNN) to the workflow and connect them to Test & Score.

- Configure Test & Score to use cross-validation.

- Add a Confusion Matrix widget and connect Test & Score to it to visualize classification accuracy.

Prediction:

- Use the Predictions widget connected to a chosen model to classify new, unlabeled polymer data entries.

Visualizations

AI-Driven Polymerization Parameter Optimization Loop

AI Software Selection Guide for Researchers

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Software Category | Function in AI/ML for Polymerization Research | Example Specific Tool / Library |
|---|---|---|
| Data Wrangling Reagents | Clean, normalize, and structure raw experimental data for model consumption. | Pandas (Python), OpenRefine, Tidyverse (R) |
| Classical ML Algorithms | Build predictive models for classification (e.g., product grade) or regression (e.g., predicting Mw). | Scikit-Learn (Python), Caret (R) |
| Deep Learning Frameworks | Model complex, non-linear relationships in high-dimensional data or spectral/imaging data. | TensorFlow, PyTorch, Keras |
| Automated ML (AutoML) | Benchmark multiple algorithms rapidly with minimal manual tuning to identify a strong baseline model. | H2O AutoML, TPOT, Google Cloud AutoML |
| Model Interpretation Tools | Explain model predictions to gain scientific insights (e.g., which parameter most affects PDI). | SHAP, LIME, ELI5 |
| Optimization Engines | Use model predictions to find the optimal set of process parameters for a desired outcome. | SciPy Optimize, BayesianOptimization, Optuna |
| Visualization Packages | Create informative plots for data exploration and result communication. | Matplotlib, Seaborn, Plotly (Python); ggplot2 (R) |
| Notebook Environments | Interactive, reproducible development and reporting environment for analyses. | Jupyter Lab, Google Colab, RStudio |
| Chemical Informatics Add-ons | Handle chemical structures, descriptors, and reactions within the ML workflow. | RDKit (Python), KNIME Cheminformatics Nodes, CDK |
| Version Control System | Track changes in code, models, and datasets to ensure reproducibility and collaboration. | Git, DVC (Data Version Control) |

Solving Complex Challenges: AI-Driven Troubleshooting and Multi-Objective Optimization

Diagnosing and Correcting Common Polymerization Flaws with AI Pattern Recognition

Application Notes: AI-Driven Flaw Detection in Polymer Synthesis

Recent advances in machine learning have enabled the real-time diagnosis of polymerization flaws by analyzing multi-modal process data. This is central to the broader thesis of AI-driven optimization of polymerization process parameters, which seeks to establish autonomous, self-correcting synthesis platforms.

Key Flaw Patterns Identifiable by AI:

- Premature Termination: Identified via deviations in real-time calorimetry data and a lower-than-predicted molecular weight (Mn) plateau.

- Broad Dispersity (Đ): Correlated with inconsistent monomer feed rates, temperature fluctuations, and initiator deactivation patterns.

- Gel Formation: Detected through pattern recognition in viscometry and turbidity sensor streams, often preceding visual observation.

- Residual Monomer: Predicted from near-infrared (NIR) spectroscopic trends and kinetic model outliers.

AI Model Efficacy Data (Summarized): The following table compiles performance metrics from recent (2023-2024) studies applying convolutional neural networks (CNNs) and recurrent neural networks (RNNs) to polymerization flaw detection.

Table 1: Performance of AI Models in Polymerization Flaw Diagnosis

| Flaw Type | AI Model Architecture | Primary Data Input | Detection Accuracy (%) | Mean Early Detection Lead Time (min) | Reference |
|---|---|---|---|---|---|
| Premature Termination | 1D-CNN + LSTM | Reaction calorimetry, FTIR | 98.7 ± 0.5 | 12.3 | Patel et al., 2024 |
| Broad Dispersity (Đ > 1.5) | Gradient Boosting (XGBoost) | Monomer feed rate, Temp. log, initiator conc. | 95.2 ± 1.1 | 22.5 (pre-SEC) | Chen & Schmidt, 2023 |
| Micro-gelation | Variational Autoencoder (VAE) | In-line viscometry, Raman spectra | 99.1 ± 0.3 | 8.7 | Ko et al., 2024 |
| Residual Monomer > 2% | Partial Least Squares (PLS) + ANN | NIR Spectroscopy, kinetic model | 96.8 ± 0.8 | N/A (end-point) | Volz et al., 2023 |

Experimental Protocols for AI Model Training & Validation

Protocol 2.1: Generating Labeled Data for Flaw Detection Models

Objective: To create a structured dataset of polymerization runs with intentionally induced, instrument-verified flaws for supervised AI training.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Automated Reaction Setup: Utilize a programmable syringe pump system for monomer/initiator feed and a jacketed reactor with a PID-controlled thermal unit.

- Induced Flaw Introduction:

  - For Premature Termination: Introduce a precise pulse of inhibitor (e.g., hydroquinone, 100 ppm relative to monomer) at a mid-point of the target reaction (e.g., at 40% conversion).

  - For Broad Dispersity: Program the monomer feed pump to operate with a sinusoidal flow rate variance of ±15% around the target rate.

  - For Micro-gelation: Spike the reaction mixture with a known quantity of divinyl cross-linker (0.05 mol%) at the start of polymerization.

- Multi-Sensor Data Acquisition:

  - Synchronize data streams from all sensors at a minimum frequency of 0.2 Hz.

  - Calorimetry: Record heat flow (dQ/dt) and total heat evolution.

  - Spectroscopy: Acquire FTIR/NIR spectra (every 30 sec) focusing on monomer peak decay (e.g., C=C stretch at ~1630 cm⁻¹) and polymer peak growth.

  - Viscometry: Log relative viscosity from an in-line micro-viscometer cell.

- Post-Hoc Labeling (Ground Truth):

  - Quench reactions at predetermined intervals. Analyze samples via Size Exclusion Chromatography (SEC) for Mn and Đ.

  - Analyze for residual monomer via GC-MS.

  - Filter the final products and inspect them by optical microscopy (100x magnification) for gel particles.

- Data Compilation: Align all temporal process data with the analytical ground truth labels. Segment data into "normal" and "flawed" sequences. Store in a structured format (e.g., HDF5) with metadata tags.
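Two pieces of this protocol lend themselves to a short sketch: the sinusoidal feed perturbation used to induce broad dispersity, and the assignment of ground-truth labels from the analytical results. The function names, the 10-minute oscillation period, and the label strings are assumptions; the ±15% amplitude comes from the protocol, and the Đ > 1.5 and residual monomer > 2% thresholds come from Table 1. Labeling premature termination is omitted because it depends on comparing Mn with a predicted plateau.

```python
import math

def perturbed_feed_rate(t_s, target_rate, amplitude=0.15, period_s=600.0):
    """Feed rate at time t_s, oscillating by ±amplitude around target_rate.

    period_s is an assumed value; the protocol specifies only the ±15 % amplitude.
    """
    return target_rate * (1.0 + amplitude * math.sin(2.0 * math.pi * t_s / period_s))

def label_run(dispersity, residual_monomer_pct, gel_particles_seen):
    """Assign ground-truth flaw labels from SEC, GC-MS and microscopy results."""
    labels = []
    if dispersity > 1.5:              # broad-dispersity threshold from Table 1
        labels.append("broad_dispersity")
    if residual_monomer_pct > 2.0:    # residual-monomer threshold from Table 1
        labels.append("residual_monomer")
    if gel_particles_seen:            # gel particles observed under the microscope
        labels.append("micro_gelation")
    return labels or ["normal"]

# Pump setpoints sampled every 5 s (0.2 Hz) over one assumed period; units follow target_rate.
rates = [perturbed_feed_rate(t, target_rate=2.0) for t in range(0, 600, 5)]
print(min(rates), max(rates))
print(label_run(dispersity=1.8, residual_monomer_pct=3.0, gel_particles_seen=False))
```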

Protocol 2.2: Real-Time Correction Loop for Premature Termination

Objective: To implement a trained AI model in a closed-loop control system that detects premature termination and administers a corrective initiator boost.

Workflow:

- Model Deployment: Load the trained 1D-CNN+LSTM model (from Table 1) onto an edge computing device connected to the reactor's data bus.

- Real-Time Inference: Feed live windows (last 5 minutes) of calorimetry and FTIR data to the model every 30 seconds.

- Decision & Actuation:

  - If the model outputs a "termination" probability > 85%, the system triggers a corrective action.

  - A calculated volume of fresh initiator solution (typically 10-20% of initial charge) is dispensed via a secondary, high-precision pump.

  - The system monitors the calorimetric response for recovery of heat flow, confirming successful correction.

- Logging: All model predictions, confidence scores, and actuator commands are time-stamped and logged for validation and model retraining.
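The decision logic of this loop can be sketched in plain Python. Several parts are assumptions made for illustration: `toy_model` is a hypothetical stand-in for the trained 1D-CNN+LSTM, the 5-second sample period is chosen to match a 0.2 Hz data rate, only the calorimetry stream is used, and the linear rule that scales the boost between 10% and 20% of the initial charge is invented here, since the workflow says only that the volume is "calculated".

```python
from collections import deque

WINDOW_S = 300        # the model sees the last 5 minutes of data
INFER_EVERY_S = 30    # inference interval
THRESHOLD = 0.85      # "termination" probability that triggers a correction

def boost_volume_ml(initial_charge_ml, probability, low=0.10, high=0.20):
    """Scale the boost between 10 % and 20 % of the initial charge (assumed linear rule)."""
    fraction = low + (high - low) * (probability - THRESHOLD) / (1.0 - THRESHOLD)
    return initial_charge_ml * min(max(fraction, low), high)

def run_loop(samples, model, initial_charge_ml, sample_period_s=5):
    """Iterate over (time_s, heat_flow) samples and return a log of corrective actions."""
    window = deque(maxlen=WINDOW_S // sample_period_s)
    log = []
    for time_s, heat_flow in samples:
        window.append(heat_flow)
        # Infer only once the window is full, and only every INFER_EVERY_S seconds.
        if len(window) == window.maxlen and time_s % INFER_EVERY_S == 0:
            p = model(list(window))
            if p > THRESHOLD:
                log.append({"time_s": time_s, "probability": p,
                            "boost_ml": boost_volume_ml(initial_charge_ml, p)})
                break  # a real loop would go on to monitor heat-flow recovery
    return log

def toy_model(window):
    """Hypothetical stand-in for the trained model: flags a collapse in heat flow."""
    return 0.95 if window[-1] < 0.5 * window[0] else 0.05

# Synthetic trace: heat flow is steady for 10 minutes, then drops sharply.
trace = [(t, 10.0 if t < 600 else 2.0) for t in range(0, 900, 5)]
actions = run_loop(trace, toy_model, initial_charge_ml=5.0)
print(actions)
```

Keeping the threshold, window length, and dosing rule as named constants makes each logged action traceable to the settings that produced it, which supports the validation and retraining described in the logging step.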

Visualization of AI-Driven Diagnosis and Correction Workflow

Title: AI-Enabled Polymerization Monitoring and Correction Loop

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 2: Key Reagents and Materials for AI-Driven Polymerization Research

| Item Name | Function/Application | Key Consideration for AI Integration |

|---|---|---|