From Data to Delivery: How AI and Machine Learning Are Revolutionizing Polymer Manufacturing for Pharmaceutical Applications

This article provides a comprehensive guide to data-driven optimization in polymer manufacturing for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive guide to data-driven optimization in polymer manufacturing for researchers, scientists, and drug development professionals. We explore the foundational role of polymer data science, detailing key material properties and characterization methods. The piece delves into practical methodologies, including AI and machine learning models for formulation and process design. We address common challenges and advanced optimization strategies, such as reducing batch variability and managing complex excipient interactions. Finally, we compare different analytical frameworks for validating predictive models and ensuring robust scale-up. This synthesis of cutting-edge techniques aims to accelerate the development of next-generation polymeric drug delivery systems.

The Data Science of Polymers: Building Blocks for Predictive Manufacturing in Pharma

The development of polymer-based drug delivery systems (DDS) has traditionally relied on empirical, trial-and-error approaches. This often leads to lengthy development cycles and suboptimal formulations. Data-driven optimization, powered by high-throughput experimentation, computational modeling, and machine learning, is now the critical catalyst. It enables researchers to decipher the complex relationships between polymer synthesis parameters, material properties, nanoparticle characteristics, and in vivo performance, transforming polymer manufacturing from an art into a predictable science.

Technical Support Center: Data-Driven Polymer DDS Development

Troubleshooting Guides & FAQs

1. Polymer Synthesis & Characterization

- Q: My synthesized PLGA batches show inconsistent molecular weights and high dispersity (Đ). How can I stabilize the polymerization process for reliable data generation?

- A: Inconsistent molecular weight is often due to trace moisture or variable initiator concentrations. Implement a strict, data-logged protocol:

- Precision Drying: Use a Schlenk line to dry monomers (D,L-lactide and glycolide) by three cycles of dissolution in anhydrous toluene followed by azeotropic distillation under vacuum. Log the final vacuum pressure (< 0.1 mbar) and time.

- Initiator Calibration: Precisely titrate your tin(II) 2-ethylhexanoate (Sn(Oct)₂) catalyst solution in dry toluene to determine exact concentration before each use.

- In-line Monitoring: Employ in-situ FTIR or Raman spectroscopy to monitor monomer conversion in real-time, stopping the reaction at a consistent target conversion (e.g., 85%) rather than a fixed time.

Q: My Dynamic Light Scattering (DLS) data for polymer nanoparticles shows multiple peaks or a polydispersity index (PDI) > 0.2. How do I troubleshoot this?

A: High PDI indicates a heterogeneous particle population. Follow this diagnostic workflow:

[Diagram: Troubleshooting High PDI in Nanoparticle DLS]

2. Drug Loading & Release

- Q: The encapsulation efficiency (EE%) of my hydrophobic drug in PLGA nanoparticles is low and variable. How can I improve it systematically?

- A: Low EE% is a multi-factorial problem. Use a Design of Experiments (DoE) approach to model the interaction of key factors. A recent study (2023) identified the following primary contributors:

Q: My in vitro drug release profile does not match the predicted Higuchi or Korsmeyer-Peppas model. What are the likely causes?

A: Model mismatch indicates unaccounted-for phenomena. Correlate release deviation with physicochemical data.

| Observed Deviation | Likely Cause | Data-Driven Check |
|---|---|---|
| Initial Burst > 40% | Surface-adsorbed drug / porous matrix | Check BET surface area & pore size data of nanoparticles. |
| Lag Phase / Slow Start | Highly crystalline polymer or dense matrix | Check DSC data for polymer crystallinity. |
| Biphasic with Sharp Change | Polymer degradation threshold reached | Monitor media pH change and GPC data of recovered polymer. |

3. Data Management & Modeling

- Q: How should I structure my experimental data for effective machine learning (ML) model training?

- A: Create a unified relational data table. Each row is one formulation ("sample"), and columns are features.
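As a minimal sketch of the one-row-per-formulation layout described above, the snippet below defines a record type and serializes it to CSV. Every column name and value is a hypothetical placeholder, not a schema taken from this guide; adapt the fields to the features you actually measure.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class FormulationRecord:
    """One row per formulation; all column names are illustrative assumptions."""
    sample_id: str            # unique sample identifier
    la_ga_ratio: str          # input feature, e.g. "50:50"
    polymer_mw_kda: float     # input feature
    pva_conc_pct: float       # input feature
    particle_size_nm: float   # measured outcome
    pdi: float                # measured outcome
    ee_pct: float             # measured outcome (a possible model target)


def to_csv(records):
    """Serialize records to CSV text with a single header row."""
    buffer = io.StringIO()
    header = [f.name for f in fields(FormulationRecord)]
    writer = csv.DictWriter(buffer, fieldnames=header)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Made-up example rows, for illustration only.
records = [
    FormulationRecord("P-2024-001", "50:50", 30.0, 1.0, 182.0, 0.12, 71.5),
    FormulationRecord("P-2024-002", "75:25", 30.0, 2.0, 165.0, 0.09, 78.2),
]
csv_text = to_csv(records)
```

Keeping inputs and measured outcomes in the same row makes it straightforward to split columns into features and targets later.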

Detailed Experimental Protocol: HPLC Analysis of Drug Encapsulation Efficiency

Objective: To accurately quantify the amount of drug (e.g., Paclitaxel) encapsulated within PLGA nanoparticles. Materials: See "Scientist's Toolkit" below. Method:

- Nanoparticle Disruption: Precisely pipette 100 µL of the nanoparticle suspension into a 1.5 mL Eppendorf tube. Add 900 µL of DMSO or acetonitrile to completely dissolve the polymer and release the drug. Vortex vigorously for 3 minutes.

- Centrifugation: Centrifuge the solution at 14,000 rpm for 10 minutes to pellet any insoluble stabilizers (e.g., PVA) or salts.

- Dilution: Transfer 100 µL of the clear supernatant into a fresh vial containing 900 µL of HPLC mobile phase (e.g., 50:50 Acetonitrile:Water). Perform serial dilution if necessary to fall within the calibration range.

- HPLC Analysis: Inject 20 µL of the diluted sample onto a reversed-phase C18 column. Use a calibrated UV-Vis or fluorescence detector. Quantify the drug concentration by comparing the peak area to a standard curve (linear range: 0.1–100 µg/mL, R² > 0.999).

- Calculation:

- Total Drug (T): Measured from the disrupted sample.

- Free Drug (F): Measured in the filtrate of an un-disrupted sample passed through a centrifugal ultrafiltration device (MWCO 30 kDa).

- Encapsulation Efficiency (%) = [ (T - F) / T ] x 100.
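The calculation above can be sketched in a few lines. The function names, the HPLC readings, and the 10x dilution assumed for the free-drug filtrate are illustrative assumptions; only the 100x dilution of the disrupted sample follows from the two 1:10 steps in the method.

```python
def back_calculate(measured_ug_ml, dilution_factor):
    """Convert an HPLC reading back to the concentration in the original suspension."""
    if dilution_factor < 1:
        raise ValueError("dilution_factor must be >= 1")
    return measured_ug_ml * dilution_factor


def encapsulation_efficiency(total_ug_ml, free_ug_ml):
    """EE% = (T - F) / T * 100, with T and F on the same concentration basis."""
    if total_ug_ml <= 0:
        raise ValueError("total drug must be positive")
    if not 0 <= free_ug_ml <= total_ug_ml:
        raise ValueError("free drug must lie between 0 and total drug")
    return (total_ug_ml - free_ug_ml) / total_ug_ml * 100.0


# Disrupted sample: 100 uL into 900 uL solvent, then 100 uL into 900 uL mobile phase = 100x.
total = back_calculate(4.20, dilution_factor=100)  # hypothetical HPLC reading
# Filtrate of the un-disrupted sample, assumed here to be diluted 10x before injection.
free = back_calculate(6.30, dilution_factor=10)    # hypothetical HPLC reading
ee_percent = encapsulation_efficiency(total, free)
```

Back-calculating both readings to the original suspension before applying the formula avoids the common mistake of comparing concentrations at different dilutions.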

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Polymer DDS Research |

|---|---|

| PLGA (Poly(D,L-lactide-co-glycolide)) | Biodegradable polymer backbone; composition (LA:GA ratio) dictates degradation rate and drug release kinetics. |

| Sn(Oct)₂ (Tin(II) 2-ethylhexanoate) | Common catalyst for ring-opening polymerization of lactides and glycolides. Requires careful handling due to moisture sensitivity. |

| Polyvinyl Alcohol (PVA) | Widely used stabilizer/emulsifier in nanoparticle formulation. Degree of hydrolysis and molecular weight critically impact particle size and stability. |

| Dichloromethane (DCM) & Ethyl Acetate | Organic solvents for oil-in-water emulsion methods. Ethyl acetate is less toxic and facilitates easier removal. |

| Dialysis Membranes (MWCO 3.5-14 kDa) | For purifying nanoparticles and studying drug release kinetics in a controlled environment. |

| SZ-10 Nanoparticle Analyzer (or equivalent) | Instrument for Dynamic Light Scattering (DLS) to measure hydrodynamic diameter (size), PDI, and zeta potential. |

| Asymmetrical Flow Field-Flow Fractionation (AF4) with MALS | Advanced, orthogonal technique to DLS for separating and characterizing complex nanoparticle mixtures by size with high resolution. |

| High-Performance Liquid Chromatography (HPLC) | Essential for quantifying drug loading, encapsulation efficiency, and monitoring release profiles with high specificity and sensitivity. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our GPC/SEC results show unexpectedly high dispersity (Đ > 2.0) in what should be a controlled polymerization. What are the primary causes and corrective actions? A: High, inconsistent Đ often indicates inadequate mixing, initiator deactivation, or thermal gradients.

- Check & Correct:

- Mixing Efficiency: Ensure stirring rate is sufficient for reactor volume. Use baffled reactors for viscous solutions.

- Initiator Freshness: Confirm initiator is stored correctly (often at -20°C, under inert gas) and solution is prepared fresh. Titrate to determine active concentration.

- Temperature Control: Calibrate thermocouples and verify heating/cooling jacket performance. Allow sufficient equilibration time before monomer addition.

- Protocol for Initiator Potency Check (Iodometric Titration for Peroxides):

- Dissolve ~0.1g of initiator in 20mL glacial acetic acid.

- Add 1g of solid KI.

- Flush with N₂, heat to 60°C for 5-10 min in the dark.

- Titrate liberated iodine with 0.1M sodium thiosulfate (Na₂S₂O₃) until colorless.

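- Calculate active %: $\text{Active \%} = \dfrac{V_{\mathrm{Na_2S_2O_3}} \times M_{\mathrm{Na_2S_2O_3}} \times M_{\mathrm{initiator}}}{2 \times W_{\mathrm{sample}}} \times 100$, with the titrant volume $V$ in L, the titrant molarity $M_{\mathrm{Na_2S_2O_3}}$ in mol/L, the initiator molar mass $M_{\mathrm{initiator}}$ in g/mol, and the sample mass $W$ in g. The factor of 2 reflects the two thiosulfate equivalents consumed per peroxide group.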
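A short sketch of the active-content calculation, assuming the burette reading is entered in mL and converted to litres inside the function. The example numbers (benzoyl peroxide, molar mass 242.23 g/mol, a 0.1000 g sample, 8.10 mL of titrant) are hypothetical.

```python
def active_percent(v_thio_ml, m_thio_mol_l, m_initiator_g_mol, w_sample_g):
    """Active peroxide content (%) from an iodometric titration.

    Each peroxide group liberates one I2, which consumes two thiosulfate ions.
    """
    if w_sample_g <= 0:
        raise ValueError("sample mass must be positive")
    moles_thiosulfate = (v_thio_ml / 1000.0) * m_thio_mol_l
    moles_peroxide = moles_thiosulfate / 2.0
    return moles_peroxide * m_initiator_g_mol / w_sample_g * 100.0


# Hypothetical titration of recrystallized benzoyl peroxide.
purity = active_percent(
    v_thio_ml=8.10,
    m_thio_mol_l=0.1,
    m_initiator_g_mol=242.23,
    w_sample_g=0.1000,
)
```

Running a solvent blank and subtracting its titrant volume before calling the function is a sensible refinement when the reagents themselves liberate a little iodine.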

Q2: During rheological time-sweeps, our polymer melt shows erratic torque readings and slips from the parallel plate geometry. How can we ensure reliable data? A: This is a common issue related to sample loading and normal force control.

- Step-by-Step Solution:

- Sample Loading: Pre-heat the polymer pellet/disk on the lower plate at the test temperature for 2-3 minutes to soften it before trimming.

- Gap Setting: Use a "compression and trim" method. Set the gap slightly larger than the sample, then compress slowly while trimming excess with a hot blade.

- Normal Force: After trimming, allow the sample to thermally equilibrate for 5 min. Then, apply a small, constant normal force (e.g., 0.5-1.0 N) during the test to maintain contact and prevent slip. For melts that still slip, use serrated plates or plates faced with fine silicon carbide sandpaper.

- Strain/Stress Check: Perform an amplitude sweep first to confirm your time-sweep is within the linear viscoelastic region.

Q3: Our degradation kinetics study (e.g., hydrolysis) shows poor fit to common models (zero-order, first-order). How should we proceed with data analysis? A: Simple models often fail for heterogeneous systems or where degradation products alter the microenvironment.

- Actionable Steps:

- Monitor Multiple Properties: Do not rely solely on mass loss. Collect concurrent data on molecular weight (GPC), solution viscosity, and pH change.

- Employ a Two-Stage Model: Fit early-stage data to one model (e.g., surface erosion) and later-stage to another (e.g., bulk erosion).

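- Use a Robust, Multi-Parameter Model: Consider the following semi-empirical model for autocatalytic hydrolysis: $\dfrac{dM}{dt} = -k\,[C_{\mathrm{ester}}]^{a}\,[C_{\mathrm{acid}}]^{b}$, where $C_{\mathrm{ester}}$ is the concentration of ester bonds and $C_{\mathrm{acid}}$ is the concentration of carboxylic acid end groups.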

- Protocol for Synchronized Degradation Kinetics:

- Prepare identical polymer samples (n≥5) in controlled buffer (pH 7.4, 37°C).

- At predetermined time points, remove one sample and perform this sequence:

- Blot dry, weigh for mass loss.

- Filter media, measure pH.

- Dry sample completely for GPC analysis in THF or DMF.

- Use remaining solution for UV-Vis analysis of any released products.
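To see why a rate law of the form k·[C_ester]^a·[C_acid]^b produces an accelerating degradation curve, the sketch below integrates it with a simple forward-Euler scheme. It assumes one reading of the model, namely that each hydrolysis event consumes one ester bond and creates one acid end group; the rate constant, exponents, and starting concentrations are arbitrary illustrative values.

```python
def simulate_autocatalysis(c_ester0, c_acid0, k, a=1.0, b=1.0, dt=0.01, t_end=30.0):
    """Forward-Euler integration of rate = k * C_ester**a * C_acid**b.

    Returns a list of (time, C_ester, C_acid) tuples. Assumes each scission
    converts one ester bond into one carboxylic acid end group.
    """
    t, c_ester, c_acid = 0.0, c_ester0, c_acid0
    history = [(t, c_ester, c_acid)]
    for _ in range(int(round(t_end / dt))):
        rate = k * c_ester ** a * c_acid ** b
        delta = min(rate * dt, c_ester)  # never consume more ester than remains
        c_ester -= delta
        c_acid += delta
        t += dt
        history.append((t, c_ester, c_acid))
    return history


# Arbitrary illustrative values: concentrations in mol/L, time in days.
history = simulate_autocatalysis(c_ester0=10.0, c_acid0=0.1, k=0.01)
first_step_loss = history[0][1] - history[1][1]
last_step_loss = history[-2][1] - history[-1][1]
```

In this run the ester loss per step grows over time, which is the signature that simple zero-order or first-order fits fail to capture.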

Q4: How do we reconcile discrepancies between molecular weight from GPC (relative) and from light scattering (absolute)? A: Discrepancies arise from GPC's reliance on polymer standards and differences in hydrodynamic volume.

- Resolution Protocol:

- Always use a multi-detector GPC (RI + MALS + Viscosity).

- For GPC Calibration: Create a broad, polymer-specific calibration curve using characterized narrow dispersity samples of the same polymer class.

- Key Calculation: Use the data from the table below to convert between values. The Mark-Houwink-Sakurada parameters relate intrinsic viscosity [η] to molecular weight: [η] = K * M^a.
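One common way to use Mark-Houwink-Sakurada parameters for this conversion is universal calibration, which assumes that two polymers eluting at the same volume share the same product [η]·M. The sketch below applies that assumption; the K and a values are placeholders chosen for illustration, not literature values, and must be replaced with parameters valid for your polymer, solvent, and temperature.

```python
def intrinsic_viscosity(molar_mass, k, a):
    """Mark-Houwink-Sakurada relation: [eta] = K * M**a."""
    return k * molar_mass ** a


def convert_molar_mass(m_standard, k_std, a_std, k_poly, a_poly):
    """Universal calibration: K_std * M_std**(1 + a_std) = K_poly * M_poly**(1 + a_poly)."""
    hydrodynamic_volume = k_std * m_standard ** (1.0 + a_std)
    return (hydrodynamic_volume / k_poly) ** (1.0 / (1.0 + a_poly))


# Placeholder parameters (hypothetical, same units for both K values).
K_STD, A_STD = 1.1e-4, 0.72    # calibration standard
K_POLY, A_POLY = 2.0e-4, 0.65  # polymer of interest

m_relative = 50_000.0  # molar mass read from the standard-based calibration curve
m_corrected = convert_molar_mass(m_relative, K_STD, A_STD, K_POLY, A_POLY)
```

When a MALS detector is available, the absolute Mw it reports is a useful cross-check on the corrected value.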

Data Presentation

Table 1: Key Polymer Property Benchmarks & Model Parameters

| Property | Ideal Range (High-Performance) | Typical Challenge Range | Key Influencing Factor | Common Measurement Standard |

|---|---|---|---|---|

| Mn (Thermoplastic) | 50,000 - 200,000 g/mol | < 30,000 (brittle) | Initiator/Monomer ratio, conversion | ASTM D6474 (GPC) |

| Đ (Controlled Poly.) | 1.01 - 1.20 | > 1.50 | Mixing, rate of initiation > rate of propagation | ISO 16014 |

| Complex Viscosity (η*, melt) | Log-Linear with shear | "Rheopexy" or severe thinning | Branching, MWD, thermal stability | ASTM D4440 |

| Hydrolysis Rate (k, 37°C pH 7.4) | 0.01 - 0.1 day⁻¹ | > 0.5 day⁻¹ (too fast) | Crystallinity, hydrophilic moiety % | N/A (Fit to model) |

| Glass Transition (Tg) | ±2°C of theoretical | Broad transition (>15°C width) | Residual solvent, plasticizers | ASTM D3418 (DSC) |

Table 2: Essential Research Reagent Solutions Toolkit

| Item | Function & Critical Note |

|---|---|

| HPLC-Grade Tetrahydrofuran (with BHT stabilizer) | GPC/SEC solvent. Must be freshly distilled over sodium/benzophenone or filtered through an alumina column to remove peroxides for accurate Mw analysis. |

| Polystyrene & Poly(methyl methacrylate) EasiVials | Narrow Đ calibration kits for GPC. Must be matched to polymer chemistry (non-aqueous) for meaningful relative comparisons. |

| Benzoyl Peroxide (recrystallized) | Common radical initiator. Must be recrystallized from chloroform/methanol and stored dry at -20°C to ensure reliable kinetics. |

| Deuterated Chloroform (CDCl3) with TMS | Standard NMR solvent for polymer characterization. TMS (Tetramethylsilane) serves as internal chemical shift reference (δ = 0 ppm). |

| Phosphate Buffered Saline (PBS), 10X Concentrate | Standard medium for in vitro degradation and release studies. Always dilute to 1X and adjust to exact pH (7.4) before use to ensure consistency. |

| SEC/LS Grade N,N-Dimethylformamide (with LiBr) | Absolute Mw measurement solvent. LiBr (0.1 M) suppresses polyelectrolyte effects for polar polymers like polyacrylamides. |

Experimental Protocols

Protocol: Triangulation of Molecular Weight Objective: Determine absolute number-average (Mn), weight-average (Mw) molecular weight, and intrinsic viscosity. Materials: GPC system with RI, MALS, and viscometer detectors; characterized columns; polymer-specific standards; purified solvent. Method:

- System Calibration: Elute narrow dispersity standards. Generate a calibration curve (log M vs. elution time) and determine inter-detector delay volumes and band broadening corrections.

- Sample Preparation: Filter polymer solution (2 mg/mL) through a 0.22 μm PTFE filter.

- Injection & Run: Inject 100 μL at a flow rate of 1.0 mL/min. Record data from all detectors.

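- Data Analysis (MALS): Use the Zimm equation: $\dfrac{Kc}{R(\theta)} = \dfrac{1}{M_w}\left[1 + \dfrac{16\pi^2 n_0^2 R_g^2}{3\lambda_0^2}\sin^2(\theta/2)\right] + 2A_2 c$, where $n_0$ is the solvent refractive index and $\lambda_0$ is the vacuum wavelength of the laser. Plot $Kc/R(\theta)$ vs. $\sin^2(\theta/2)$ at each elution slice: the intercept gives $1/M_w$ (the $2A_2c$ term is usually negligible at the low concentration of a chromatographic slice) and the slope gives the radius of gyration ($R_g$).

- Data Analysis (Viscometer): Calculate intrinsic viscosity $[\eta]$ from the specific viscosity $\eta_{sp}$ at each slice: $[\eta] \approx \eta_{sp} / c$, which holds in the dilute limit reached after column separation.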
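The per-slice MALS analysis amounts to a straight-line fit of Kc/R(θ) against sin²(θ/2). The sketch below assumes the second-virial term is negligible in a slice, so the intercept is 1/Mw and the slope equals (1/Mw)·16π²n₀²Rg²/(3λ₀²). The laser wavelength, solvent refractive index, detector angles, and the synthetic data are illustrative assumptions.

```python
import math


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def zimm_slice(angles_deg, kc_over_r, wavelength_nm, n_solvent):
    """Return (Mw in g/mol, Rg in nm) for one elution slice, neglecting 2*A2*c."""
    xs = [math.sin(math.radians(angle) / 2.0) ** 2 for angle in angles_deg]
    slope, intercept = fit_line(xs, kc_over_r)
    prefactor = 16.0 * math.pi ** 2 * n_solvent ** 2 / (3.0 * wavelength_nm ** 2)
    mw = 1.0 / intercept
    rg_nm = math.sqrt(slope / intercept / prefactor)
    return mw, rg_nm


# Synthetic slice generated from known values to check the fit (all assumed).
WAVELENGTH_NM, N_SOLVENT = 658.0, 1.405
TRUE_MW, TRUE_RG_NM = 1.0e5, 20.0
angles = [35, 50, 70, 90, 110, 130]
pref = 16.0 * math.pi ** 2 * N_SOLVENT ** 2 / (3.0 * WAVELENGTH_NM ** 2)
signal = [
    (1.0 / TRUE_MW) * (1.0 + pref * TRUE_RG_NM ** 2 * math.sin(math.radians(a) / 2.0) ** 2)
    for a in angles
]
mw_fit, rg_fit = zimm_slice(angles, signal, WAVELENGTH_NM, N_SOLVENT)
```

With real detector data the points scatter around the line, so inspecting the residuals at the lowest angles is worthwhile before trusting Rg.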

Protocol: Small-Amplitude Oscillatory Shear (SAOS) Rheology for Stability Objective: Characterize viscoelastic properties and thermal stability of a polymer melt. Materials: Strain-controlled rheometer with parallel plate geometry, temperature controller, nitrogen purge. Method:

- Geometry & Loading: Select 8-25 mm diameter plates based on sample stiffness. Load pre-melted pellet, compress at a temperature above Tg/Tm, and trim excess.

- Amplitude Sweep: At a fixed frequency (e.g., 10 rad/s), measure storage (G') and loss (G") modulus over a strain range (0.01% - 100%) to determine the linear viscoelastic region (LVR).

- Frequency Sweep: Within the LVR (e.g., 1% strain), measure G' and G" over an angular frequency range (e.g., 0.1 - 100 rad/s) to map relaxation behavior.

- Time Sweep: At a frequency and strain within the LVR, measure G' and G" over 1-2 hours at the processing temperature to assess thermal stability (a sharp drop in G' signals degradation).

Visualizations

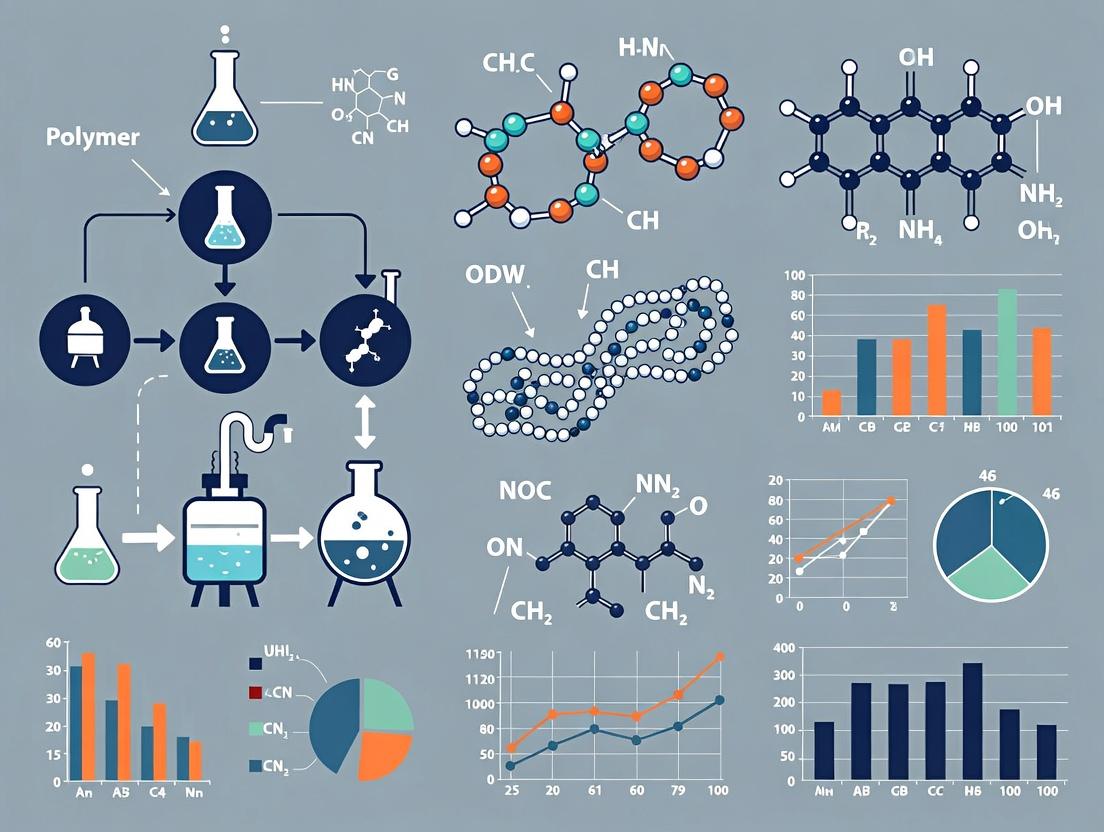

Polymer Data-Driven Research Workflow

Polymer Degradation Autocatalytic Pathway

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: In a high-throughput screening (HTS) experiment for polymer film libraries using automated FTIR mapping, we observe poor signal-to-noise ratios. What are the primary causes and solutions? A: Poor S/N in automated FTIR mapping often stems from incorrect contact pressure, moisture interference, or suboptimal spectral averaging.

- Solution: Follow the protocol below and consult Table 1 for parameter optimization.

Q2: During in-line Raman monitoring of a polymerization reaction, the baseline signal drifts significantly over time. How can this be corrected? A: Baseline drift in in-line Raman is commonly caused by probe window fouling or temperature fluctuations affecting the spectrometer.

- Solution: Implement the automated baseline correction protocol. For persistent drift, initiate the probe cleaning cycle (see Reagent Solutions).

Q3: Our high-throughput DSC data for copolymer blends shows inconsistent glass transition (Tg) measurements between replicates. What could be the issue? A: Inconsistent Tg in HTS-DSC is frequently due to poor sample seal integrity (moisture ingress) or non-uniform sample mass across wells.

- Solution: Ensure precise, automated liquid handling for sample loading and use validated hermetic seal protocols. Standardize sample mass to 5.0 ± 0.2 mg.

Q4: When using in-line process analytical technology (PAT) for data-driven optimization, how do we synchronize time-series spectral data with reactor process variables (like temperature, viscosity)? A: This requires a shared timing trigger and a unified data architecture.

- Solution: Use an OPC UA or similar industrial communication protocol to timestamp all data streams. Implement the data fusion workflow shown in Diagram 2.
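Once every stream carries a timestamp from a shared clock, alignment can be as simple as pairing each spectrum with the most recent process reading. The sketch below shows that "as-of" matching with the standard library; it assumes timestamps are seconds on a common clock and that the process log is sorted. The function name, tolerance, and sample values are hypothetical.

```python
from bisect import bisect_right


def align_asof(spectrum_times, process_log, tolerance_s):
    """Pair each spectrum time with the latest process reading at or before it.

    process_log is a time-sorted list of (timestamp_s, value) tuples. Readings
    older than tolerance_s are rejected and reported as None.
    """
    log_times = [timestamp for timestamp, _ in process_log]
    aligned = []
    for spectrum_time in spectrum_times:
        index = bisect_right(log_times, spectrum_time) - 1
        if index >= 0 and spectrum_time - log_times[index] <= tolerance_s:
            aligned.append((spectrum_time, process_log[index][1]))
        else:
            aligned.append((spectrum_time, None))
    return aligned


# Hypothetical reactor temperature log (s, degrees C) and Raman acquisition times (s).
temperature_log = [(0, 70.0), (60, 70.4), (120, 71.1), (180, 71.5)]
raman_times = [5, 125, 400]
aligned = align_asof(raman_times, temperature_log, tolerance_s=30)
```

Reporting unmatched spectra as `None` rather than silently filling them keeps gaps in the process log visible to downstream models.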

Detailed Experimental Protocols

Protocol 1: High-Throughput FTIR Mapping for Polymer Film Libraries Objective: To acquire consistent, high-quality IR spectra for rapid composition screening. Materials: See "Research Reagent Solutions" table. Method:

- Film Preparation: Spin-coat polymer solutions onto a 96-element silicon wafer substrate. Dry under vacuum at 40°C for 12 hours.

- Instrument Setup: Load wafer into automated stage. Purge compartment with dry N₂ for 15 min (dew point < -40°C).

- Mapping Parameters: Set as per Table 1. Perform background scan on a clean silicon spot before each row.

- Data Acquisition: Run automated map using software. Validate each spectrum in real-time for absorbance range (0.1 - 1.2 AU).

- Post-processing: Apply vector normalization (1800-600 cm⁻¹ region) and integrate key carbonyl (C=O) peak area (1720-1750 cm⁻¹) for analysis.
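The post-processing step can be sketched as follows. Vector normalization is taken here as division by the Euclidean norm over the 1800-600 cm⁻¹ window (some software also mean-centres first, which this sketch does not do), and the carbonyl band is integrated with the trapezoidal rule. Function names and the flat test spectrum are illustrative.

```python
import math


def vector_normalize(absorbances):
    """Scale a spectrum to unit Euclidean norm."""
    norm = math.sqrt(sum(a * a for a in absorbances))
    if norm == 0:
        raise ValueError("cannot normalize an all-zero spectrum")
    return [a / norm for a in absorbances]


def integrate_band(wavenumbers, absorbances, low, high):
    """Trapezoidal area between low and high cm^-1 (axis order does not matter)."""
    points = sorted((w, a) for w, a in zip(wavenumbers, absorbances) if low <= w <= high)
    area = 0.0
    for (w0, a0), (w1, a1) in zip(points, points[1:]):
        area += 0.5 * (a0 + a1) * (w1 - w0)
    return area


def carbonyl_area(wavenumbers, absorbances):
    """Normalize over 600-1800 cm^-1, then integrate the 1720-1750 cm^-1 C=O band."""
    window = [(w, a) for w, a in zip(wavenumbers, absorbances) if 600 <= w <= 1800]
    axis = [w for w, _ in window]
    normalized = vector_normalize([a for _, a in window])
    return integrate_band(axis, normalized, 1720, 1750)


# Flat synthetic spectrum at 10 cm^-1 spacing, used only to check the arithmetic.
axis = list(range(600, 1801, 10))
flat = [1.0] * len(axis)
area = carbonyl_area(axis, flat)
```

Normalizing before integration makes peak areas comparable between films of different thickness, which is the point of the step in a library screen.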

Protocol 2: In-Line Raman Monitoring for Free-Radical Polymerization Objective: Real-time tracking of monomer conversion and copolymer composition. Materials: Immersion optic Raman probe (785 nm), spectrometer, reactor fitting. Method:

- Probe Calibration: Perform intensity calibration using a NIST-traceable white light source. Perform wavelength calibration using cyclohexane.

- Installation: Insert probe into reactor via an Ingold-type port, ensuring the window is flush with the interior.

- Acquisition Settings: Laser power: 400 mW; Integration time: 30 s; Spectral range: 200-1800 cm⁻¹. Acquire spectrum every 2 minutes.

- Reference Model: Develop a PLS model correlating the C=C stretch peak (~1640 cm⁻¹) decrease with monomer concentration from offline GC data.

- Real-time Analysis: Stream pre-processed spectra (cosmic ray removal, vector normalization) to the PLS model for instantaneous conversion prediction.

Data Tables

Table 1: Optimized Parameters for HTS-FTIR Mapping

| Parameter | Value Range | Optimal Setting | Function |

|---|---|---|---|

| Spectral Resolution | 2 - 16 cm⁻¹ | 4 cm⁻¹ | Balances detail & scan speed |

| Number of Scans | 16 - 128 | 64 per pixel | Defines signal averaging |

| Aperture Size | 50 - 200 µm | 100 µm | Defines spatial resolution |

| Step Size (X, Y) | 50 - 200 µm | 100 µm | Controls mapping density |

| Contact Force | 5 - 30 g | 15 g | Ensures optical contact |

Table 2: Key Process Variables & In-Line Analytical Techniques

| Process Variable | Target Range | Primary PAT Tool | Data Sampling Rate | Key Performance Metric |

|---|---|---|---|---|

| Monomer Conversion | 0 - 100% | In-line Raman | 120 s | Prediction Error: ≤ 2.5% |

| Molecular Weight | 10k - 500k Da | In-line GPC/SEC | 900 s | Correlation R²: ≥ 0.95 |

| Melt Viscosity | 1 - 10,000 Pa·s | In-line Rheometer | 60 s | Shear Rate Accuracy: ± 5% |

| Particle Size (Dispersion) | 50 - 500 nm | In-line DLS | 180 s | PDI Resolution: ≤ 0.05 |

Diagrams

HTS to Data-Driven Optimization Workflow

PAT Data Fusion Architecture for Real-Time Analysis

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment | Key Specification/Note |

|---|---|---|

| Silicon Wafer Substrate (76x128 mm) | Low-background substrate for HTS FTIR mapping of films. | IR-transparent, 96-position grid lithographically marked. |

| Hermetic DSC Crucibles (Aluminum) | Ensures integrity of samples during HTS thermal analysis. | Must be sealed with dedicated press; gold-coated for inertness. |

| Raman Probe Cleaning Kit | Removes polymer fouling from in-line probe window. | Contains safe, non-abrasive solvent (e.g., dimethylacetamide) and soft lint-free wipes. |

| NIST-Traceable Polystyrene | Calibration standard for in-line GPC/SEC. | Narrow molecular weight distribution (Mw/Mn < 1.05). |

| PAT Data Management Software | Unifies, synchronizes, and pre-processes streams from multiple analyzers. | Must support OPC UA, Python/R APIs, and real-time visualization. |

Technical Support Center: Troubleshooting Guides & FAQs

This support center provides assistance for common experimental challenges encountered while correlating polymer structure with drug release and biocompatibility. The content is framed within a data-driven optimization paradigm for polymer manufacturing research.

Frequently Asked Questions (FAQs)

Q1: My drug release profile from a PLGA matrix shows an unexpected initial burst release, skewing my correlation data. What are the primary causes? A: A high initial burst release (>40% in first 24 hours) is frequently correlated with surface-adsorbed drug and porous polymer morphology. From recent literature (2023-2024), key data-driven factors include:

- Inadequate Encapsulation Efficiency: Lower efficiency (<70%) often leaves excess drug on particle surfaces.

- High Porosity & Large Pore Size: Pore diameter > 200 nm, as measured by mercury intrusion porosimetry, facilitates rapid aqueous intrusion.

- Low Molecular Weight Polymer: Using PLGA with Mn < 20 kDa accelerates initial degradation and release.

- Poor Solvent Removal During Fabrication: Incomplete removal of organic solvents (e.g., dichloromethane) creates porous channels.

Protocol: To diagnose, perform Scanning Electron Microscopy (SEM) on your matrices. Use image analysis software (e.g., ImageJ) to quantify surface porosity. Correlate this with your first-order release rate constant (k1) calculated from the first 24-hour data.

Q2: My in vitro biocompatibility assay (e.g., MTT) shows high cytotoxicity for a polymer formulation that passed initial characterization. How do I systematically troubleshoot? A: Cytotoxicity not predicted by chemical analysis often stems from physicochemical interactions or degradation byproducts. Follow this diagnostic workflow:

- Test Degradation Media: Collect the supernatant from your polymer degradation study (e.g., PBS at 37°C for 72 hours). Test this conditioned media on cells separately. High cytotoxicity here implicates soluble leachables (e.g., residual initiators, monomers, stabilizers).

- Measure Surface Charge: Measure the zeta potential of the polymer nanoparticles by electrophoretic light scattering (available on most DLS instruments). Highly positive surfaces (> +20 mV) often correlate with membrane disruption and cell death.

- Check Sterilization Method: Autoclaving can hydrolyze polyesters like PLA. Use gamma irradiation or ethylene oxide, and re-test sterility via agar plate assays.

Q3: I am trying to establish a structure-property relationship. How do I quantitatively link copolymer composition (e.g., LA:GA ratio in PLGA) to release profile parameters? A: Implement a Design of Experiments (DoE) approach. Vary the Lactide:Glycolide (LA:GA) ratio and molecular weight systematically. Measure the resulting glass transition temperature (Tg) and hydrophilicity (via water contact angle). Use multiple linear regression to model their effect on the release rate constant (k) and diffusion exponent (n) from the Korsmeyer-Peppas model.

Experimental Protocols for Key Experiments

Protocol 1: Determining Drug Release Kinetics and Modeling Objective: To quantitatively profile drug release and fit data to mechanistic models. Materials: Dialysis bags (MWCO 12-14 kDa), release medium (PBS, pH 7.4), shaking water bath (37°C, 50 rpm), HPLC system. Method:

- Pre-hydrate dialysis membrane.

- Place polymer-drug formulation (equivalent to 5 mg drug) in the bag, seal.

- Immerse in 200 mL release medium.

- Withdraw 1 mL aliquots at predetermined times (e.g., 1, 2, 4, 8, 24, 48, 72, 168 hrs). Replace with fresh pre-warmed medium.

- Analyze aliquot drug concentration via HPLC.

- Data Fitting: Fit cumulative release data to models:

- Zero-order: $M_t / M_\infty = k\,t$

- Higuchi: $M_t / M_\infty = k_H \sqrt{t}$

- Korsmeyer-Peppas: $M_t / M_\infty = k_{KP}\,t^{n}$ (for the first 60% of release).
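The Korsmeyer-Peppas fit reduces to a straight line after taking logarithms: ln(M_t/M_inf) = ln(k_KP) + n·ln(t). The sketch below fits that line by least squares, using only points with fractional release at or below 0.6 as the protocol specifies. The function name and the synthetic data are illustrative; time is assumed to be in hours.

```python
import math


def fit_korsmeyer_peppas(times_h, fraction_released, max_fraction=0.6):
    """Log-log least-squares fit of M_t/M_inf = k * t**n.

    Returns (k, n, number_of_points_used). Points at t <= 0, with zero release,
    or above max_fraction are excluded.
    """
    pairs = [
        (math.log(t), math.log(f))
        for t, f in zip(times_h, fraction_released)
        if t > 0 and 0 < f <= max_fraction
    ]
    if len(pairs) < 2:
        raise ValueError("need at least two usable points")
    count = len(pairs)
    mean_x = sum(x for x, _ in pairs) / count
    mean_y = sum(y for _, y in pairs) / count
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    n = sxy / sxx
    k = math.exp(mean_y - n * mean_x)
    return k, n, count


# Synthetic release curve generated with k = 0.1 and n = 0.5 to check the fit.
times = [1, 2, 4, 8, 24, 48]
released = [0.1 * t ** 0.5 for t in times]
k_fit, n_fit, points_used = fit_korsmeyer_peppas(times, released)
```

The fitted exponent n is what the later structure-property regression uses as a response variable, so it is worth reporting how many points survived the 60% cutoff alongside it.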

Protocol 2: In Vitro Biocompatibility Assessment via Indirect Contact Objective: To evaluate cytotoxicity of polymer degradation products. Materials: L929 fibroblast cells, DMEM culture medium, 96-well plates, MTT reagent, DMSO. Method:

- Prepare Conditioned Medium: Sterilize polymer samples (e.g., 1 cm² film or 100 mg particles). Incubate in serum-free medium (1 mL) at 37°C for 72 hours. Filter supernatant (0.22 µm).

- Seed cells in 96-well plate at 10,000 cells/well. Incubate for 24 hrs.

- Replace normal medium with 100 µL of conditioned medium (test) or fresh medium (control). Include a blank (medium only).

- Incubate for 24-48 hrs.

- Add 10 µL MTT solution (5 mg/mL) per well. Incubate 4 hrs.

- Carefully remove medium, add 100 µL DMSO to solubilize formazan crystals.

- Measure absorbance at 570 nm using a microplate reader.

- Calculate Cell Viability: $\text{Viability (\%)} = \dfrac{\mathrm{Abs_{sample}} - \mathrm{Abs_{blank}}}{\mathrm{Abs_{control}} - \mathrm{Abs_{blank}}} \times 100$. Viability < 70% (per ISO 10993-5) indicates a cytotoxic response.
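A small sketch of the viability calculation, averaging replicate wells before applying the formula. The absorbance readings are hypothetical, and the 70% cutoff is the ISO 10993-5 criterion quoted in the protocol.

```python
from statistics import mean


def cell_viability_percent(sample_abs, control_abs, blank_abs):
    """Viability (%) from replicate 570 nm absorbance readings."""
    blank = mean(blank_abs)
    denominator = mean(control_abs) - blank
    if denominator <= 0:
        raise ValueError("control signal must exceed the blank")
    return (mean(sample_abs) - blank) / denominator * 100.0


def is_cytotoxic(viability_percent, threshold=70.0):
    """Flag a cytotoxic response when viability falls below the threshold."""
    return viability_percent < threshold


# Hypothetical triplicate readings.
viability = cell_viability_percent(
    sample_abs=[0.52, 0.50, 0.54],
    control_abs=[0.90, 0.92, 0.88],
    blank_abs=[0.05, 0.05, 0.05],
)
flagged = is_cytotoxic(viability)
```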

Data Presentation Tables

Table 1: Correlation of PLGA Properties with Drug Release Metrics

| LA:GA Ratio | Mw (kDa) | Tg (°C) | Initial Burst (24h) | Release Rate Constant (k, h⁻ⁿ) | Diffusion Exponent (n) | Model Best Fit |

|---|---|---|---|---|---|---|

| 50:50 | 30 | 45 | 45% | 0.35 | 0.89 | Korsmeyer-Peppas |

| 75:25 | 30 | 50 | 25% | 0.21 | 0.67 | Higuchi |

| 85:15 | 50 | 55 | 15% | 0.12 | 0.51 | Zero-Order |

Table 2: Common Polymer Additives & Their Impact on Biocompatibility

| Additive / Impurity | Typical Function | Cytotoxicity Threshold | Primary Assay for Detection |

|---|---|---|---|

| Residual Tin Catalyst (e.g., Stannous Octoate) | Polymerization Catalyst | > 1000 ppm | ICP-MS |

| Plasticizer (e.g., DEHP) | Increases Flexibility | > 3 µg/mL | GC-MS |

| Residual Monomer (e.g., Lactide) | Synthesis Building Block | > 0.5% w/w | HPLC-UV |

| Antioxidant (e.g., BHT) | Prevents Oxidation | > 50 µg/mL | HPLC-FLD |

Visualizations

Diagram 1: Troubleshooting Cytotoxicity Workflow

Diagram 2: Data-Driven Polymer Optimization Cycle

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Relevance to Correlation Studies |

|---|---|

| PLGA (Poly(lactic-co-glycolic acid)) | Benchmark biodegradable copolymer. Varying LA:GA ratio and Mw allows systematic study of hydrophilicity/crystallinity on release. |

| Dialysis Membranes (MWCO 3.5-14 kDa) | Standard tool for in vitro release studies under sink conditions. MWCO must be 3-4x smaller than polymer Mw for accurate data. |

| MTT (3-(4,5-dimethylthiazol-2-yl)-2,5-diphenyltetrazolium bromide) | Yellow tetrazolium salt reduced to purple formazan by mitochondrial enzymes of viable cells. Standard for ISO 10993-5 biocompatibility screening. |

| Gel Permeation Chromatography (GPC) Standards (Polystyrene, PMMA) | Essential for determining critical polymer properties: molecular weight (Mn, Mw) and polydispersity index (PDI), key structural variables. |

| Phosphate Buffered Saline (PBS), pH 7.4 | Standard physiological release medium. pH must be monitored, as acidic degradation products of polyesters can autocatalyze hydrolysis. |

| AlamarBlue / Resazurin | Alternative to MTT; fluorescent/colorimetric redox indicator for cell viability. Offers superior sensitivity and linear range for dose-response. |

| Dynamic Light Scattering (DLS) & Zeta Potential Cell | For nanoparticle formulations, measures hydrodynamic diameter (size) and surface charge (zeta potential), critical for stability and cell interaction. |

Current Challenges in Polymer Data Collection, Standardization, and FAIR Principles

Troubleshooting Guides & FAQs

Q1: Our high-throughput polymer synthesis robot is generating inconsistent batch data. What are the primary checkpoints? A: Inconsistent data often stems from uncontrolled environmental variables or calibration drift.

- Calibration Check: Re-calibrate all liquid handlers and in-line spectrometers using certified reference materials.

- Environmental Logging: Verify that temperature and humidity sensors are logging correctly. Batch data are invalid without this metadata.

- Reagent Degradation: Check solvent water content and monomer inhibitor levels. Implement a "reagent QC" step before critical runs.

- Data Pipeline Audit: Ensure the automated data capture script is pulling from the correct instrument output file version.

Q2: When sharing polymer datasets for a consortium project, reviewers complain the data is not "interoperable." What does this mean practically? A: Interoperability means others can use your data without ambiguity. Common failures include:

- Missing Context: "Mw 50,000" is not interoperable. It must be "Weight-average molar mass (Mw) = 50,000 g/mol, determined by THF-SEC against PMMA standards."

- Unstructured Files: Data stored only in PDF reports or proprietary instrument software files. Required: Raw data (e.g., .txt, .csv) + standardized metadata file (e.g., JSON-LD using a polymer ontology).

Q3: We cannot find historical polymer rheology data in our lab's shared drive. How can we improve data findability? A: This is a core FAIR (Findable) challenge. Implement a mandatory digital lab notebook (ELN) protocol:

- Persistent Identifiers: Assign a unique, searchable ID (e.g., P-2024-001) to every sample. This ID must be in the ELN, file names, and printed on vials.

- File Naming Convention: Enforce `[PolymerID]_[Technique]_[Date]_[OperatorInitials].csv` (e.g., `P-2024-001_Rheology_20241015_AS.csv`).

- Indexed Repository: Store all final data in a dedicated platform (e.g., a dedicated instance of Materials Cloud) with tagged metadata, not a general-purpose cloud drive.
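A naming convention is only useful if it is enforced, so a small helper that builds and validates file names can sit in the data-capture script. The sketch below assumes the `P-YYYY-NNN` ID format seen in the example, an 8-digit date, and 2-3 uppercase operator initials; these constraints are inferred from the example and are not a formal specification.

```python
import re
from datetime import date

# Pattern inferred from the example file name; adjust to your lab's ID scheme.
FILENAME_PATTERN = re.compile(
    r"^(?P<polymer_id>P-\d{4}-\d{3})_"
    r"(?P<technique>[A-Za-z0-9]+)_"
    r"(?P<date>\d{8})_"
    r"(?P<operator>[A-Z]{2,3})\.csv$"
)


def build_filename(polymer_id, technique, run_date, operator):
    """Compose a file name and reject it if it breaks the convention."""
    name = f"{polymer_id}_{technique}_{run_date:%Y%m%d}_{operator}.csv"
    if not FILENAME_PATTERN.match(name):
        raise ValueError(f"file name violates convention: {name}")
    return name


def parse_filename(name):
    """Return the named parts of a compliant file name, or None otherwise."""
    match = FILENAME_PATTERN.match(name)
    return match.groupdict() if match else None


filename = build_filename("P-2024-001", "Rheology", date(2024, 10, 15), "AS")
parts = parse_filename(filename)
```

Running `parse_filename` over an existing shared drive is a quick way to list which historical files would need renaming.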

Q4: How do we standardize the description of a complex copolymer for a database? A: Use a systematic, machine-readable notation and controlled vocabulary.

- IUPAC Notation: Use standard source-based notation with the connectives -stat-, -alt-, and -ran- (e.g., poly(A-stat-B)).

- SMILES/String Notation: Employ a simplified string representation (e.g., `*CC*` for polyethylene) for searchability.

- Contextual Metadata: Link the structure to its synthesis method (e.g., ATRP, ROMP) and catalyst ID.

Q5: Our AI model for predicting glass transition temperature (T_g) performs poorly on new polymer families. What data quality issues could be the cause? A: This highlights "Reusability" in FAIR. Likely issues:

- Hidden Biases: Training data was mostly from one synthesis method (e.g., anionic polymerization). Data for new families from RAFT may have different impurity profiles.

- Inconsistent Measurement Protocols: T_g values were collected at different heating rates (e.g., 5°C/min vs. 20°C/min) without annotation.

- Solution: Retrain with a federated dataset that explicitly tags the measurement protocol (ASTM D3418) and synthesis method for each entry.

Key Experimental Protocols

Protocol 1: Standardized Data Capture for Polymer Synthesis

Objective: To generate FAIR-compliant data from a batch polymerization reaction.

- Pre-Synthesis:

- Assign a unique Polymer ID.

- In the ELN, log all reagent IDs (linked to vendor/lot number), target DP, and theoretical M_n.

- Record ambient conditions (T, %RH).

- Synthesis Execution:

- Use automated reactors with in-line sensors (NIR, Raman) where possible.

- Save raw time-series sensor data as .csv, with timestamps linked to the Polymer ID.

- Post-Synthesis:

- Immediately log the actual yield and attach sample photos.

- Split sample for characterization, ensuring each vial is labeled with Polymer ID and analysis technique (e.g., P-2024-001_SEC).

- Data Packaging:

- Create a folder named by Polymer ID.

- Populate with: raw sensor data, ELN entry PDF, and a metadata.json file using the PMD (Polymer Metadata) schema.
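
The data packaging step can be scripted so that every batch folder is created the same way. The sketch below is illustrative only: the field names in REQUIRED_FIELDS are hypothetical placeholders, not the actual PMD schema, and should be replaced with whatever fields your schema mandates.

```python
import json
from pathlib import Path

# Hypothetical minimum fields; replace with those your metadata schema requires.
REQUIRED_FIELDS = (
    "synthesis_method",
    "target_dp",
    "theoretical_mn_g_per_mol",
    "reagent_lots",
)


def package_polymer_data(root: Path, polymer_id: str, metadata: dict) -> Path:
    """Create a folder named by Polymer ID and write metadata.json into it."""
    missing = [field for field in REQUIRED_FIELDS if field not in metadata]
    if missing:
        raise ValueError(f"Missing metadata fields: {missing}")

    folder = root / polymer_id
    folder.mkdir(parents=True, exist_ok=True)

    # Store the Polymer ID inside the file too, so the record stays
    # identifiable even if it is copied out of its folder.
    record = {"polymer_id": polymer_id, **metadata}
    (folder / "metadata.json").write_text(json.dumps(record, indent=2), encoding="utf-8")
    return folder
```

Raw sensor files and the ELN export would then be copied into the returned folder alongside metadata.json.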

Protocol 2: Implementing FAIR Principles for a Polymer Characterization Dataset

Objective: To prepare size-exclusion chromatography (SEC) data for public repository submission.

- Data Curation:

- Convert proprietary instrument files (.ch) to open formats (.csv containing retention time and detector counts).

- Include calibration curve data and mobile phase details.

- Metadata Annotation:

- Create a machine-readable metadata sheet. Key fields: Polymer ID, Synthesis Method, SEC Instrument Model, Columns Used, Mobile Phase, Flow Rate, Calibration Standards, Detectors, Data Processing Software, Date.

- Repository Submission:

- Upload to a discipline-specific repository (e.g., PolymerC).

- Request a Digital Object Identifier (DOI).

- The DOI and a citation become the foundation for reusability.

Data Presentation

Table 1: Common Data Standardization Gaps in Polymer Research

| Data Type | Common Non-Standard Format | FAIR-Compliant Standard | Tool for Conversion |

|---|---|---|---|

| Chemical Structure | Hand-drawn image in PPT | Simplified molecular-input line-entry system (SMILES) or InChI string | RDKit, Open Babel |

| Synthesis Protocol | Paragraph in lab notebook | Standardized JSON schema (e.g., SPDM) | NLP parsers, manual templates |

| Chromatography (SEC) | Proprietary .ch, .asc files | Open CSV with retention time & intensity | Instrument export scripts, OpenChrom |

| Thermal Analysis (DSC) | Image of heat flow curve | CSV of Temperature (°C) vs. Heat Flow (W/g) | TA Instruments TRIOS software export |

| Mechanical Properties | Excel table with ambiguous headers | CSV with columns labeled per ISO/ASTM standards | Custom Python pandas script |

Table 2: Impact of Data Standardization on Model Performance

| Training Data Quality | Dataset Size (Polymer Samples) | Prediction Error (T_g, °C) | Time to Prepare Data for Modeling |

|---|---|---|---|

| Non-standardized, legacy lab data | 500 | ± 25 | 4-6 weeks |

| Standardized metadata, open file formats | 500 | ± 15 | 1-2 weeks |

| FAIR-compliant, consortium data | 2000 | ± 8 | 1-2 days |

Visualizations

Polymer FAIR Data Workflow Cycle

Hierarchy from Raw Data to FAIR Repository

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Polymer Data Generation & Standardization

| Item | Function | Example/Supplier |

|---|---|---|

| Certified Reference Materials | Calibrating instruments (SEC, DSC) for comparable data across labs. | NIST PS, PMMA standards (e.g., Agilent, PSS). |

| Structured Digital Lab Notebook | Centralized, searchable record of synthesis and metadata. | LabArchives, RSpace, SciNote. |

| Polymer Ontology (PMO) | Controlled vocabulary for tagging data (e.g., "ATRP", "T_g by DSC"). | The Polymer Ontology. |

| Chemical Registration System | Assigns unique, persistent IDs to new compounds/samples. | CSD-Director, custom solution with InChIKey. |

| Automated Data Parsing Scripts | Converts proprietary instrument files to open formats. | Custom Python scripts using pandas, openpyxl. |

| FAIR Data Repository | Platform for sharing compliant datasets with a DOI. | Materials Cloud, Zenodo, institutional repository. |

| Reference Polymer Libraries | Well-characterized polymers for model validation and benchmarking. | Polymer Properties Database (P-POD), commercial kits. |

AI in Action: Deploying Machine Learning Models for Smarter Polymer Process and Formulation Design

Technical Support Center & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: My regression model for predicting polymer glass transition temperature (Tg) shows high training accuracy but poor performance on new experimental data. What could be wrong? A: This is a classic sign of overfitting. Ensure your dataset is large enough (typically >100 data points per feature). Use regularization techniques (Lasso/L1, Ridge/L2) and perform feature selection to eliminate irrelevant molecular descriptors. Always validate using a hold-out test set or cross-validation.

Q2: When using an Artificial Neural Network (ANN) for property prediction, how do I decide on the network architecture? A: Start with a simple architecture (e.g., 2-3 hidden layers) and increase complexity only if needed. Use techniques like hyperparameter tuning (grid/random search) to optimize the number of nodes and layers. Employ dropout layers (e.g., 20-50% rate) to prevent overfitting, which is common with small polymer datasets.

Q3: My Support Vector Machine (SVM) model for classifying polymers as "processable" or "non-processable" is extremely slow to train. How can I improve this?

A: SVM training time scales poorly with large datasets. First, scale your features (e.g., using StandardScaler). For non-linear problems, consider using the Radial Basis Function (RBF) kernel but carefully tune the C and gamma parameters. If the dataset is very large, try using a linear SVM or switch to a more scalable model like an ANN.

Q4: Unsupervised clustering groups chemically dissimilar polymers together based on their properties. Is this an error? A: Not necessarily. Algorithms like k-means or hierarchical clustering group data points based on feature similarity in the defined property space, not necessarily on chemical intuition. Review your feature set—you may be missing key structural descriptors. Consider using dimensionality reduction (PCA, t-SNE) to visualize clusters before interpretation.

Q5: How do I handle missing or imbalanced data in my polymer dataset? A: For missing property values, use imputation methods (mean/median for continuous, mode for categorical) but be cautious not to introduce bias. For imbalanced datasets (e.g., few "high-performance" polymers), use techniques like SMOTE (Synthetic Minority Over-sampling Technique) or adjust class weights in your model's loss function.

Experimental Protocol: Workflow for Data-Driven Polymer Discovery

1. Data Curation & Featurization

- Source Data: Gather polymer properties (Tg, tensile strength, dielectric constant) from databases (PoLyInfo, PubChem) or high-throughput experiments.

- Featurization: Compute molecular descriptors (e.g., using RDKit) or use fingerprints (Morgan fingerprints). For copolymers, include composition ratios and sequence descriptors.

- Output: A structured table of features (X) and target properties (y) for supervised learning.

2. Model Selection & Training Protocol

- Regression (e.g., for predicting modulus): Split data (70/15/15 for train/validation/test). Train a Gradient Boosting Regressor (e.g., XGBoost). Optimize hyperparameters (n_estimators, max_depth) via 5-fold cross-validation on the training set.

- ANN (e.g., for complex property prediction): Use a sequential model with Dense, Dropout, and BatchNorm layers. Compile with the adam optimizer and mse loss. Train for up to 500 epochs with early stopping.

- SVM (e.g., for binary classification of solubility): Scale features. Use a linear kernel for large datasets or RBF for smaller, non-linear problems. Find the optimal C via grid search on the validation set.

- Unsupervised Learning (e.g., for novel polymer identification): Apply PCA to reduce dimensionality, then use DBSCAN or k-means clustering. Validate cluster coherence using silhouette scores.

3. Validation & Deployment

- Validate final model on the held-out test set. Report key metrics (see Table 1).

- Deploy model to screen virtual polymer libraries (e.g., from combinatorial monomer pairs).

Data Presentation

Table 1: Comparison of ML Model Performance on a Benchmark Polymer Tg Dataset (n=5000)

| Model Type | Specific Algorithm | Key Hyperparameters | R² (Test Set) | Mean Absolute Error (MAE) [K] | Training Time (s) | Best Use Case in Polymer Discovery |

|---|---|---|---|---|---|---|

| Supervised (Regression) | Gradient Boosting | n_estimators=200, max_depth=5 | 0.89 | 12.5 | 45.2 | Predicting continuous properties from structural fingerprints. |

| Supervised (Non-linear) | Artificial Neural Network | Layers: [64, 32, 16], Dropout=0.3 | 0.91 | 10.8 | 312.7 | Modeling complex, non-linear property relationships. |

| Supervised (Classification) | Support Vector Machine | Kernel='rbf', C=10, gamma='scale' | 0.94* | N/A | 189.5 | Binary classification (e.g., high/low performance) with clear margins. |

| Unsupervised | k-means Clustering | n_clusters=6, init='k-means++' | N/A | N/A | 8.7 | Discovering hidden groups in unlabeled data for novel polymer design. |

*Denotes accuracy score for classification.

Diagrams

Title: Workflow for ML-Driven Polymer Discovery

Title: Model Selection Logic for Polymer Data

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Tools for Data-Driven Polymer Research

| Item / Solution | Function in Polymer Discovery Context | Example/Note |

|---|---|---|

| High-Throughput Experimentation (HTE) Robotic Platform | Automates synthesis & characterization to rapidly generate large, consistent datasets for model training. | Essential for creating quality data. |

| Quantum Chemistry Software (e.g., Gaussian, ORCA) | Calculates electronic structure descriptors used as informative features for ML models. | Provides features like HOMO/LUMO, dipole moment. |

| Chemical Descriptor Toolkits (e.g., RDKit, Dragon) | Generates molecular fingerprints and structural descriptors from polymer/SMILES strings. | Critical for featurization. |

| ML Frameworks (e.g., Scikit-learn, TensorFlow/PyTorch) | Provides algorithms for regression, classification, clustering, and deep learning. | Use within Python ecosystem. |

| Polymer Databases (e.g., PoLyInfo) | Source of historical experimental data for initial model training and benchmarking. | PoLyInfo, maintained by Japan's National Institute for Materials Science (NIMS), is a key resource. |

| Automated Characterization Tools (e.g., HPLC, GPC-SEC Autosamplers) | Provides consistent, high-volume molecular weight and purity data as model targets/features. | Reduces measurement noise. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: How do I handle missing data in my polymer property dataset? Answer: Missing data is common in experimental datasets. For polymer systems, we recommend:

- Single Imputation: Use the median value for numeric features (e.g., catalyst concentration) if <5% of data is missing. For categorical features (e.g., solvent type), use the mode.

- Model-Based Imputation: For >5% missingness, use k-Nearest Neighbors (k-NN) imputation, as it leverages similar polymer formulations to estimate missing values.

- Deletion: Delete rows only if the missing data is for a critical target variable (e.g., final molecular weight). Avoid deleting based on missing input variables if using imputation.

Experimental Protocol for Data Validation: Before imputation, run a missing value analysis. Create a table of variables sorted by percent missing. Validate imputations by artificially removing 10% of known values, applying your chosen method, and calculating the Mean Absolute Percentage Error (MAPE) against the true values.
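
As a rough illustration of this validation protocol, the sketch below builds the percent-missing table and then scores median imputation by hiding 10% of the known values. It uses only the standard library; the function names are hypothetical, missing entries are assumed to be stored as None, and the known values are assumed to be non-zero so that MAPE is defined.

```python
import random
import statistics


def percent_missing(table: dict) -> list:
    """Return (column, percent missing) pairs, sorted with the worst column first.

    `table` maps column names to lists in which None marks a missing value.
    """
    rows = [
        (name, 100.0 * sum(value is None for value in column) / len(column))
        for name, column in table.items()
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


def mape_of_median_imputation(values: list, mask_fraction: float = 0.10, seed: int = 0) -> float:
    """Hide a fraction of known values, impute them with the median of the
    remaining values, and return the Mean Absolute Percentage Error (%)."""
    rng = random.Random(seed)
    n_masked = max(1, round(mask_fraction * len(values)))
    masked_idx = set(rng.sample(range(len(values)), n_masked))

    observed = [v for i, v in enumerate(values) if i not in masked_idx]
    fill_value = statistics.median(observed)

    # Compare the imputed value against each hidden true value.
    errors = [abs(values[i] - fill_value) / abs(values[i]) for i in masked_idx]
    return 100.0 * sum(errors) / len(errors)
```

The same masking routine can wrap any other imputer (for example k-NN), which makes the MAPE values directly comparable between methods.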

FAQ 2: My model performance plateaus despite adding more data. Which feature transformations should I prioritize? Answer: This often indicates uninformative feature representations. Prioritize domain-informed transformations:

- For Monomer Ratios: Transform raw masses or moles into mole fractions or functional group equivalents.

- For Reaction Conditions: Apply polynomial features (e.g., squared temperature) or interaction terms (e.g., Catalyst_Load * Time) to capture non-linear effects.

- For Spectral Data: Use Principal Component Analysis (PCA) to reduce dimensionality of FTIR or NMR spectra before input. Retain components explaining 95% variance.
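
The first two transformations are simple enough to sketch without any ML library. The example below is illustrative: the column names (temperature_C, catalyst_load, time_h) are assumptions, and the PCA step for spectral data is not shown.

```python
def mole_fractions(moles: dict) -> dict:
    """Convert raw monomer amounts (mol) into mole fractions that sum to 1."""
    total = sum(moles.values())
    if total <= 0:
        raise ValueError("Total moles must be positive")
    return {f"x_{name}": amount / total for name, amount in moles.items()}


def add_engineered_features(row: dict) -> dict:
    """Return a copy of one experiment record with a squared-temperature
    term and a catalyst-load x time interaction term added."""
    out = dict(row)
    out["temperature_sq"] = row["temperature_C"] ** 2
    out["catalyst_x_time"] = row["catalyst_load"] * row["time_h"]
    return out


print(mole_fractions({"MMA": 3.0, "HEMA": 1.0}))
print(add_engineered_features({"temperature_C": 70.0, "catalyst_load": 0.5, "time_h": 18.0}))
```

Keeping each transformation in its own function makes it easy to add them one group at a time when measuring their effect on model performance.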

Experimental Protocol for Feature Transformation Impact Test:

- Baseline: Train a model (e.g., Random Forest) using only raw features.

- Iteration: Train identical models using progressively added transformed features (e.g., Group 1: mole fractions, Group 2: mole fractions + interaction terms).

- Evaluation: Track model performance (R², MAE) on a held-out test set for each group. Use the results table below to decide which transformations yield meaningful improvement.

Table 1: Impact of Feature Transformations on Model Performance

| Feature Set | Number of Features | Test R² | Test MAE (Mw, kDa) |

|---|---|---|---|

| Raw Inputs (masses, T, time) | 8 | 0.62 | 4.8 |

| + Monomer Mole Fractions | 10 | 0.71 | 3.9 |

| + Temperature^2, Pressure^2 | 12 | 0.78 | 3.2 |

| + Interaction Terms (T * Time, Cat. * Monomer) | 16 | 0.85 | 2.5 |

| + Top 10 PCA components from FTIR | 26 | 0.88 | 2.1 |

FAQ 3: How do I select the most relevant input variables from a high-dimensional screening study? Answer: Use a hybrid filter-wrapper selection method.

- Filter Step: Calculate mutual information or Spearman correlation between each input and the target (e.g., polymer tensile strength). Retain top 20-30% of features.

- Wrapper Step: Apply Recursive Feature Elimination (RFE) with a robust regressor (e.g., Support Vector Regression) on the filtered set. RFE iteratively removes the least important features.

- Stability Check: Use Boruta or a similar algorithm to confirm selected features are significantly more important than random noise variables.
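
The filter step can be prototyped in plain Python before moving to a full pipeline. This is a sketch under stated assumptions: features are held as a dictionary of equal-length lists, Spearman correlation is computed as the Pearson correlation of average ranks, and the helper names are hypothetical. The wrapper (RFE) and stability (Boruta) steps are not shown.

```python
def average_ranks(values: list) -> list:
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x: list, y: list) -> float:
    """Pearson correlation; returns 0.0 when either input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    sd_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    if sd_x == 0 or sd_y == 0:
        return 0.0
    return cov / (sd_x * sd_y)


def spearman(x: list, y: list) -> float:
    """Spearman rank correlation between two equal-length sequences."""
    return pearson(average_ranks(x), average_ranks(y))


def filter_features(features: dict, target: list, keep_fraction: float = 0.3) -> list:
    """Keep the top fraction of features ranked by |Spearman| with the target."""
    scores = {name: abs(spearman(column, target)) for name, column in features.items()}
    n_keep = max(1, round(keep_fraction * len(scores)))
    return sorted(scores, key=scores.get, reverse=True)[:n_keep]
```

The names returned by filter_features would then be passed to the wrapper step, so RFE only has to search the reduced set.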

Experimental Protocol for RFE:

- Initialize SVR with an RBF kernel.

- Use 5-fold cross-validation at each step of RFE to score feature subsets.

- Plot cross-validation score vs. number of features. Select the elbow point where score plateaus or drops.

- Validate final feature set on a completely independent batch of polymerization experiments.

Diagram 1: Feature Engineering Workflow for Polymer Data

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Polymer Feature Engineering Experiments

| Item & Supplier Example | Function in Experiment |

|---|---|

| Polymerization Reactor (e.g., Parr Instrument Co.) | Provides controlled environment (T, P, stirring) for synthesizing polymer samples to generate consistent data. |

| Gel Permeation Chromatography (GPC) System (e.g., Agilent) | Measures molecular weight distribution (Mw, Mn, PDI) - a key target variable for feature engineering. |

| Differential Scanning Calorimeter (DSC) (e.g., TA Instruments) | Measures thermal transitions (Tg, Tm) to link processing features to material properties. |

| FTIR Spectrometer (e.g., Thermo Fisher) | Generates high-dimensional spectral data for transformation (e.g., via PCA) into input features. |

| Chemometrics Software (e.g., SIMCA, PLS_Toolbox) | Enables advanced feature transformations, PCA, and projection to latent structures modeling. |

| Python/R with scikit-learn/mlr3 libraries | Core platform for implementing custom feature selection, transformation, and engineering pipelines. |

Technical Support Center

Troubleshooting Guides

Issue 1: Poor Model Performance & High Prediction Error

- Q: My ML model (e.g., Random Forest) for predicting copolymer composition shows high Mean Absolute Error (MAE) on the test set. What are the primary causes?

- A: High MAE typically stems from inadequate or non-representative training data. Ensure your dataset covers the full experimental design space (e.g., monomer feed ratio, initiator concentration, temperature ranges). Perform feature importance analysis; key process parameters might be missing. Check for overfitting by comparing training and validation error—regularization or dataset expansion may be needed.

Issue 2: Inconsistent Polymerization Kinetics

- Q: During synthesis, my observed reaction kinetics deviate significantly from simulations, leading to off-target copolymer compositions. How do I troubleshoot?

- A: First, verify reagent purity and accurate degassing procedures. Calibrate temperature sensors on the reaction vessel. Inconsistent kinetics often arise from variable initiator efficiency or trace inhibitors. Run a small-scale control experiment with a standard monomer pair to benchmark your setup. Ensure stirring rate is sufficient for homogeneous mixing.

Issue 3: Failed Correlation Between Composition and Release Profile

- Q: The copolymer composition is as predicted, but the drug release kinetics in vitro do not match the targeted profile. What steps should I take?

- A: This indicates the predictive model's output (composition) is insufficient. The release kinetics are governed by additional factors. Characterize the glass transition temperature (Tg), hydrophilicity/hydrophobicity balance, and film morphology (via SEM). Incorporate these as secondary targets into your data-driven optimization loop. Revisit your dissolution test conditions (pH, sink conditions) for consistency.

Frequently Asked Questions (FAQs)

Q1: What is the minimum dataset size required to build a reliable predictive model for this application? A: While dependent on complexity, a robust starting point is a dataset with 50-100 unique, well-characterized synthesis experiments. This should span at least 3-4 levels for each critical input variable (e.g., monomer A/B ratio, chain transfer agent concentration). Use statistical design of experiments (DoE) principles to maximize information gain.

Q2: Which machine learning algorithms are most effective for correlating synthesis parameters with copolymer composition? A: Based on current literature, tree-based ensemble methods (Random Forest, Gradient Boosting) often perform well due to their ability to handle non-linear relationships. For smaller datasets, Support Vector Regression (SVR) can be effective. Neural networks require larger datasets but can model highly complex interactions.

Q3: How do I validate that my predictive model is suitable for scaling from lab to pilot plant? A: Implement temporal validation: train your model on data from one reactor or one time period, and test it on data from a different period or reactor. Perform a "spike-in" experiment at the pilot scale, using model-recommended parameters, and compare the predicted vs. actual composition and release profile. A successful model should maintain an R² > 0.85 on this external validation.
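
The acceptance check described in this answer reduces to computing R² on data the model never saw. A minimal sketch follows, assuming paired lists of pilot-scale measurements and model predictions; the function names and the default threshold argument are illustrative.

```python
def r_squared(actual: list, predicted: list) -> float:
    """Coefficient of determination for predictions against measured values."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    if ss_tot == 0:
        raise ValueError("Measured values are constant; R² is undefined")
    return 1.0 - ss_res / ss_tot


def passes_external_validation(actual: list, predicted: list, threshold: float = 0.85) -> bool:
    """True when R² on the external (e.g., pilot-scale) data exceeds the threshold."""
    return r_squared(actual, predicted) > threshold
```

Because R² is computed against the spread of the external data itself, a narrow pilot-scale design space can make the score look poor even when absolute errors are small, so it is worth reporting MAE alongside it.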

Q4: What are the critical characterization techniques required for model training data? A: Essential techniques include: 1) NMR (for actual copolymer composition and sequence distribution), 2) GPC (for molecular weight and dispersity, Đ), and 3) In vitro dissolution testing under physiological conditions (for release kinetics profile, e.g., % released over time).

Data Presentation

Table 1: Performance Comparison of Predictive Models for Copolymer Molar Fraction

| Model | Training R² | Test Set MAE | Key Features Used |

|---|---|---|---|

| Linear Regression | 0.72 | 0.098 | Feed Ratio, Temp |

| Random Forest | 0.94 | 0.041 | Feed Ratio, Temp, [Initiator], Stir Rate |

| Gradient Boosting | 0.96 | 0.038 | Feed Ratio, Temp, [Initiator], Solvent % |

| Neural Network (2-layer) | 0.91 | 0.047 | All 6 Process Parameters |

Table 2: Impact of Hydrophilic Monomer Fraction on Drug Release Kinetics (T50%, Time to 50% Release)

| Polymer ID | % Hydrophilic Monomer | T50% (hours) | Release Mechanism (Peppas Model n) |

|---|---|---|---|

| P1 | 15% | 48.2 | 0.51 (Fickian Diffusion) |

| P2 | 25% | 24.5 | 0.63 (Anomalous Transport) |

| P3 | 40% | 8.1 | 0.89 (Case-II Transport) |

Experimental Protocols

Protocol 1: Synthesis of Acrylate-Based Copolymer Library for Model Training

- Setup: In a flame-dried 50 mL Schlenk flask equipped with a magnetic stir bar, prepare the monomer mixture by combining methyl methacrylate (MMA) and 2-hydroxyethyl methacrylate (HEMA) at prescribed molar ratios (e.g., 95:5 to 60:40).

- Initiation: Add azobisisobutyronitrile (AIBN, 1 mol% relative to total monomers) as initiator. Purge the mixture with argon for 30 minutes to remove oxygen.

- Polymerization: Immerse the flask in a pre-heated oil bath at 70°C ± 0.5°C with constant stirring (500 rpm) for 18 hours.

- Quenching & Purification: Cool the reaction to room temperature. Precipitate the copolymer into tenfold excess of cold hexane with vigorous stirring. Filter the polymer and dry under vacuum at 40°C until constant weight is achieved.

- Characterization: Determine composition by ¹H NMR (CDCl₃) and molecular weight by GPC (THF, PS standards).

Protocol 2: In Vitro Drug Release Kinetics Testing

- Film Preparation: Cast a 5% w/v solution of the drug-loaded copolymer (10% w/w drug loading) in acetone onto a Teflon plate. Allow slow solvent evaporation over 24 hours, followed by vacuum drying for 48 hours.

- Dissolution Study: Cut films into precise 1 cm x 1 cm squares. Immerse each square in 200 mL of phosphate-buffered saline (PBS, pH 7.4) at 37°C in a USP Apparatus II (paddle) at 50 rpm.

- Sampling: At predetermined time intervals (e.g., 1, 2, 4, 8, 24, 48, 72 h), withdraw 3 mL of medium, filter (0.45 µm), and analyze drug concentration via validated HPLC-UV method. Replenish with an equal volume of fresh pre-warmed PBS.

- Data Modeling: Fit cumulative release data to mathematical models (e.g., Zero-order, Higuchi, Korsmeyer-Peppas) to determine the dominant release mechanism.
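
For the data modeling step, the Korsmeyer-Peppas model (fraction released = k·t^n) can be fitted by linear regression on log-transformed data. The sketch below assumes release is expressed as a fraction between 0 and 1 and follows the usual convention of fitting only points up to 60% release; the function name is hypothetical and the synthetic data in the usage lines are for illustration, not measured values.

```python
import math


def fit_korsmeyer_peppas(times_h: list, fraction_released: list, max_fraction: float = 0.6):
    """Fit ln(f) = ln(k) + n*ln(t) by ordinary least squares.

    Only points with t > 0 and 0 < f <= max_fraction are used.
    Returns (k, n), where n is the release exponent.
    """
    points = [
        (math.log(t), math.log(f))
        for t, f in zip(times_h, fraction_released)
        if t > 0 and 0 < f <= max_fraction
    ]
    if len(points) < 2:
        raise ValueError("Need at least two usable points to fit the model")

    count = len(points)
    mean_x = sum(x for x, _ in points) / count
    mean_y = sum(y for _, y in points) / count
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)

    n = sxy / sxx                      # slope = release exponent
    k = math.exp(mean_y - n * mean_x)  # intercept = ln(k)
    return k, n


# Synthetic example: data generated from f = 0.1 * t**0.5
times = [1, 2, 4, 8, 24, 48, 72]
fractions = [0.1 * t ** 0.5 for t in times]
k, n = fit_korsmeyer_peppas(times, fractions)
print(f"k = {k:.3f}, n = {n:.2f}")
```

The fitted exponent n is then compared against the thresholds for the sample geometry in use (thin film, cylinder, or sphere) to assign a release mechanism.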

Diagrams

Workflow for Data-Driven Polymer Design

Key Factors Influencing Release Kinetics

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Functional Monomers (e.g., HEMA, PEGMA) | Provide hydrophilicity to modulate swelling and drug diffusion rates. |

| Controlled Radical Initiator (e.g., AIBN, V-501) | Ensures reproducible radical generation and polymerization kinetics. |

| Chain Transfer Agent (e.g., DDM, 2-MPA) | Controls molecular weight and dispersity, critical for release consistency. |

| Deuterated Solvent (e.g., CDCl₃, DMSO‑d6) | Essential for accurate NMR characterization of copolymer composition. |

| Phosphate-Buffered Saline (PBS, pH 7.4) | Simulates physiological conditions for in vitro drug release testing. |

| HPLC-grade Solvents & Columns | Enables precise quantification of drug concentration during release studies. |

| GPC/SEC Standards (e.g., PMMA, PS) | Calibrates the system for accurate molecular weight distribution analysis. |

Troubleshooting Guides & FAQs

Q1: During electrospinning, my jet is unstable, resulting in bead formation on the fibers. What are the primary causes and solutions?

A: Bead formation is commonly caused by insufficient polymer chain entanglement. Key factors are solution viscosity and surface tension. Increase polymer concentration to enhance viscosity. Alternatively, adjust solvent composition—adding a higher boiling point solvent (e.g., DMF to a DCM solution) can reduce bead formation by allowing more drying time. Ensure relative humidity is stable (optimal range 40-60%); high humidity can cause water condensation, disrupting jet solidification.

Q2: My nanofiber mat has poor mechanical integrity and tears easily. How can I improve this?

A: Poor mechanical strength often stems from weak inter-fiber bonding and small fiber diameter. Solutions include: (1) Post-processing with solvent vapor (e.g., ethanol or acetone vapors) to slightly weld fiber junctions. (2) Optimizing collector type: a rotating drum collector aligns fibers better, increasing mat strength along the alignment direction. (3) Adjusting process parameters: increasing polymer concentration or flow rate produces thicker, stronger fibers, whereas a moderate voltage increase tends to thin them. See Table 1 for parameter effects.

Q3: The electrospinning process clogs the needle tip frequently. How can I prevent this?

A: Clogging is due to premature solvent evaporation. Mitigation strategies: (1) Use a solvent system with a higher boiling point or a binary solvent mixture to control evaporation rate. (2) Implement a syringe pump with consistent, low pulsation flow. (3) Consider coaxial electrospinning where a core/sheath design can keep the tip clear, or use a nozzle-less electrospinning setup if clogging persists.

Q4: How do I handle highly volatile solvents in electrospinning for reproducible results?

A: For volatile solvents like chloroform or dichloromethane: (1) Use a sealed environmental chamber to control temperature and saturated solvent atmosphere, preventing rapid evaporation at the tip. (2) Reduce the distance between the needle tip and the collector (e.g., to 10-12 cm) to minimize flight time. (3) Utilize a humidity-controlled system, as dry air exacerbates evaporation.

Q5: When integrating AI for parameter optimization, what data format and preprocessing steps are critical?

A: Data must be structured with clear input variables (e.g., concentration, voltage, distance, flow rate) and output responses (fiber diameter, porosity, tensile strength). Preprocessing steps: (1) Normalize all input parameters to a [0,1] scale. (2) For categorical data (e.g., polymer type, collector geometry), use one-hot encoding. (3) Handle missing data via K-nearest neighbors (KNN) imputation. (4) Split data into training (70%), validation (15%), and test (15%) sets temporally if processes drift.
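
Steps (1), (2), and (4) of this answer can be sketched with the standard library alone. The helpers below are illustrative and their names are assumptions; KNN imputation from step (3) is not shown, and the temporal split assumes the records are already sorted from oldest to newest.

```python
def min_max_scale(values: list) -> list:
    """Rescale a numeric column to the [0, 1] range."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]  # constant column carries no information
    return [(v - low) / (high - low) for v in values]


def one_hot(labels: list):
    """One-hot encode a categorical column.

    Returns (encoded rows, category order) so the encoding can be reused later.
    """
    categories = sorted(set(labels))
    encoded = [[1 if label == c else 0 for c in categories] for label in labels]
    return encoded, categories


def temporal_split(rows: list, train: float = 0.70, val: float = 0.15):
    """Split chronologically ordered rows into train/validation/test sets.

    The most recent runs end up in the test set, which mimics how the
    model will be used if the process drifts over time.
    """
    n_train = round(len(rows) * train)
    n_val = round(len(rows) * val)
    return rows[:n_train], rows[n_train:n_train + n_val], rows[n_train + n_val:]
```

In practice the scaling limits and category order should be computed on the training set only and then reused for the validation and test sets, so that no information leaks from later runs.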

Data Presentation

Table 1: Effect of Key Electrospinning Parameters on Nanofiber Morphology

| Parameter | Typical Range Tested | Primary Effect on Fiber Diameter | Effect on Bead Formation | Recommended for Scaffold Use |

|---|---|---|---|---|

| Polymer Concentration | 8-15% (w/v) | Increase = Diameter Increase | High concentration reduces beads | 10-12% for uniform ~300 nm fibers |

| Applied Voltage | 15-25 kV | Moderate Increase = Diameter Decrease (initially) | High voltage can increase beads | 18-20 kV for stable jet |

| Tip-to-Collector Distance | 12-20 cm | Increase = Diameter Decrease (if evaporation allows) | Too short increases beads; too long can cause instability | 15 cm for PCL solutions |

| Flow Rate | 0.5-2.0 mL/h | Increase = Diameter Increase | High flow rate promotes beads | 1.0 mL/h for balance |

| Relative Humidity | 30-60% | Low RH decreases diameter via rapid drying | High RH (>60%) promotes bead defects | 45-55% for reproducibility |

Table 2: Example DoE (Central Composite Design) Layout and AI-Predicted vs. Actual Results

| Run | Conc. (%) | Voltage (kV) | Distance (cm) | Flow Rate (mL/h) | Predicted Diameter (nm) | Actual Diameter (nm) | Error % |

|---|---|---|---|---|---|---|---|

| 1 | 10 | 18 | 15 | 1.0 | 310 | 298 | -3.9 |

| 2 | 12 | 20 | 15 | 1.5 | 410 | 432 | +5.4 |

| 3 | 8 | 20 | 12 | 1.0 | 180 | 165 | -8.3 |

| 4 | 10 | 22 | 15 | 1.0 | 285 | 270 | -5.3 |

| 5 | 10 | 18 | 18 | 1.0 | 260 | 251 | -3.5 |
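
The Error % column in Table 2 is the signed deviation of the measured diameter from the prediction, relative to the prediction. The short sketch below reproduces that column from the tabulated diameters and adds a mean absolute percentage error as a single summary figure; the variable names are illustrative.

```python
# (run, predicted diameter in nm, actual diameter in nm) from Table 2
runs = [(1, 310, 298), (2, 410, 432), (3, 180, 165), (4, 285, 270), (5, 260, 251)]


def percent_error(predicted_nm: float, actual_nm: float) -> float:
    """Signed error of the measurement relative to the model prediction (%)."""
    return 100.0 * (actual_nm - predicted_nm) / predicted_nm


errors = {run: round(percent_error(pred, act), 1) for run, pred, act in runs}
mean_abs_error = sum(abs(e) for e in errors.values()) / len(errors)

for run, err in errors.items():
    print(f"Run {run}: {err:+.1f} %")
print(f"Mean absolute error: {mean_abs_error:.2f} %")
```

A negative value means the fibers came out thinner than predicted. Four of the five runs are negative, so it is worth checking for a systematic bias before retraining.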

Experimental Protocols

Protocol 1: Standard Solution Preparation & Viscosity Measurement

- Weighing: Accurately weigh the polymer (e.g., PCL, MW 80,000) to achieve the target % w/v concentration (e.g., 10%).

- Dissolution: Add the polymer to a binary solvent mixture (e.g., 7:3 DCM:DMF) in a sealed glass vial.

- Mixing: Stir on a magnetic stirrer at 500 rpm, 25°C, for 12 hours until a clear, homogeneous solution is obtained.

- Viscosity Test: Using a rotational viscometer (e.g., Brookfield DV2T) with spindle SC4-18 at 20 RPM, measure viscosity at 25°C. Record value in cP.

Protocol 2: DoE Execution for Electrospinning

- Setup: Assemble electrospinning apparatus in a fume hood with controlled environment (T=24±1°C, RH=50±5%). Use a programmable syringe pump, high-voltage power supply, and grounded rotating drum collector (diameter 10 cm, speed 1000 rpm).

- Parameter Setting: For each DoE run, set parameters (concentration, voltage, tip-collector distance, flow rate) as per design matrix.

- Equilibration: Allow the system to stabilize for 5 minutes after parameter changes before starting collection.

- Fiber Collection: Electrospin for 30 minutes per run. Collect fibers on aluminum foil wrapped around the drum.

- Sample Labeling: Label each sample meticulously with run ID and parameters.

Protocol 3: Fiber Characterization via SEM Imaging & Analysis

- Sample Preparation: Cut a 5x5 mm section from the fiber mat. Mount on an SEM stub using conductive carbon tape.

- Coating: Sputter-coat with a 10 nm layer of gold/palladium using a coater (e.g., Leica EM ACE200).

- Imaging: Use SEM (e.g., Zeiss Sigma 300) at 5 kV accelerating voltage, 10 mm working distance. Capture at least 10 images at random locations at 10,000x magnification.

- Diameter Analysis: Use image analysis software (e.g., ImageJ with DiameterJ plugin). Measure at least 100 fibers per sample. Report mean diameter and standard deviation.

Diagrams

Title: Data-Driven Optimization Workflow for Electrospinning

Title: Parameter Effects on Electrospinning Outcomes

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Electrospinning | Example Product/Note |

|---|---|---|

| Biodegradable Polymers | Primary scaffold material; determines degradation rate and biocompatibility. | Poly(ε-caprolactone) (PCL), Poly(lactic-co-glycolic acid) (PLGA). Use medical grade. |

| Solvent Systems | Dissolves polymer; evaporation rate critically impacts fiber morphology. | Dichloromethane (DCM), Dimethylformamide (DMF), Tetrahydrofuran (THF). Use HPLC grade for purity. |

| Syringe Pump | Provides precise, pulsation-free flow of polymer solution. | Harvard Apparatus PHD ULTRA, with flow resolution of 0.001 mL/h. |

| High Voltage Power Supply | Generates the electric field (10-30 kV) to create the Taylor cone and jet. | Spellman SL Series, positive polarity, with digital readout. |

| Rotating Collector | Aligns fibers; speed controls mat anisotropy and density. | Custom drum (Ø 10-20 cm) with variable speed motor (100-3000 rpm). |

| Environmental Chamber | Controls temperature and humidity for reproducible drying dynamics. | Custom acrylic enclosure with humidity generator (e.g., TECHLAB) and hygrometer. |

| Conductive Substrate | Grounded surface for fiber collection. | Aluminum foil, conductive paper, or static dissipative mat. |

| Sputter Coater | Applies thin conductive metal layer on non-conductive fibers for SEM. | Quorum Q150R S with gold/palladium target. |

| Image Analysis Software | Quantifies fiber diameter, porosity, and alignment from micrographs. | ImageJ with DiameterJ & OrientationJ plugins; commercial: AZoMaterials. |

Technical Support Center & Troubleshooting

Frequently Asked Questions (FAQs)

Q1: My digital twin of a continuous stirred-tank polymerization reactor shows a persistent deviation between the predicted and actual monomer conversion rate. What are the primary calibration points to investigate?

A: Begin by validating the kinetic parameters in your reaction model. For free-radical polymerization of styrene, typical Arrhenius pre-exponential factors (A) and activation energies (Ea) are listed below. Ensure your digital twin's mass and heat transfer coefficients match the physical reactor's mixing efficiency and cooling jacket performance. Next, synchronize the digital twin's inlet feed stream data (flow rates, purity) with logged plant data.

Table: Typical Kinetic Parameters for Styrene Polymerization (Free-Radical)

| Parameter | Value Range | Units | Notes |

|---|---|---|---|

| Pre-exponential Factor (Ap) | 1.0 × 10⁶ - 1.0 × 10⁷ | L/(mol·s) | Propagation step |

| Activation Energy (Ea,p) | 26 - 32 | kJ/mol | Propagation step |

| Pre-exponential Factor (At) | 1.0 × 10⁸ - 1.0 × 10⁹ | L/(mol·s) | Termination step (combination) |

| Activation Energy (Ea,t) | 8 - 12 | kJ/mol | Termination step |
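As a quick sanity check on the twin's kinetic inputs, the rate constants can be evaluated from the Arrhenius form k = A·exp(-Ea/(R·T)). The sketch below is illustrative only: the function name is hypothetical, and the A and Ea values are simply picked from inside the table ranges above, not taken from a fitted model.

```python
import math

R_GAS = 8.314  # universal gas constant, J/(mol·K)

def arrhenius(A, Ea_kJ_per_mol, T_celsius):
    """Rate constant k = A * exp(-Ea / (R * T)), with Ea given in kJ/mol."""
    T_kelvin = T_celsius + 273.15
    return A * math.exp(-Ea_kJ_per_mol * 1000.0 / (R_GAS * T_kelvin))

# Illustrative values chosen inside the table ranges (assumptions, not fitted data)
k_p = arrhenius(5.0e6, 29.0, 70.0)   # propagation, L/(mol·s)
k_t = arrhenius(5.0e8, 10.0, 70.0)   # termination, L/(mol·s)

print(f"k_p at 70 °C: {k_p:.3g} L/(mol·s)")
print(f"k_t at 70 °C: {k_t:.3g} L/(mol·s)")
```

Comparing these values with the constants the twin actually uses at the logged reactor temperature is a fast way to spot a unit error (for example J/mol entered as kJ/mol) before moving on to transfer coefficients.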

Q2: In my extrusion process digital twin, the predicted melt pressure at the die is consistently 10-15% lower than the sensor reading. What could cause this?

A: This often indicates inaccurate rheological modeling of the polymer melt. First, verify that the viscosity model (e.g., Cross-WLF) parameters are calibrated for the specific polymer grade and additives used; an underestimated viscosity leads directly to an underpredicted die pressure. Next, confirm the accuracy of the barrel temperature profile input, since a melt that runs cooler than the twin assumes is more viscous. Finally, check for physical conditions the twin does not model: a worn screw or barrel changes pumping efficiency and shifts the real pressure profile away from the prediction.
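For reference, a minimal sketch of the Cross-WLF viscosity model named above is shown here. The functional form is the standard one; every parameter value in the example call is a placeholder assumption and must be replaced with values fitted to rheometer data for the actual grade.

```python
import math

def cross_wlf_viscosity(shear_rate, T, n, tau_star, D1, D2, A1, A2, D3=0.0, P=0.0):
    """Cross-WLF shear viscosity in Pa·s.

    shear_rate in 1/s, T and D2 in K, tau_star in Pa, P in Pa, D3 in K/Pa.
    """
    T_star = D2 + D3 * P            # pressure-shifted reference temperature
    A2_eff = A2 + D3 * P
    # WLF temperature dependence of the zero-shear viscosity
    eta0 = D1 * math.exp(-A1 * (T - T_star) / (A2_eff + (T - T_star)))
    # Cross model for shear thinning
    return eta0 / (1.0 + (eta0 * shear_rate / tau_star) ** (1.0 - n))

# Placeholder parameters (assumptions for illustration only)
params = dict(n=0.3, tau_star=3.0e4, D1=1.0e12, D2=373.15, A1=25.0, A2=51.6)

for T in (463.15, 473.15, 483.15):
    eta = cross_wlf_viscosity(100.0, T, **params)
    print(f"T = {T:.2f} K, shear rate = 100 1/s -> eta = {eta:.1f} Pa·s")
```

Sweeping temperature and shear rate this way shows how sensitive the predicted viscosity, and therefore the predicted die pressure, is to each calibrated parameter.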

Q3: How do I integrate real-time Raman spectroscopy data for copolymer composition into my reactor digital twin for closed-loop control?

A: Implement a data ingestion pipeline that streams processed spectroscopy data (e.g., monomer ratio) into the twin's state estimation module (often a Kalman Filter). The filter will correct the model's predicted state. Ensure your model's reaction rate equations account for cross-propagation kinetics. The workflow for this data-driven optimization is below.

Figure: Real-Time Spectral Data Integration for Twin Calibration
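The correction step of the state estimator can be sketched with a scalar Kalman filter. This is a simplified illustration, assuming the state is a single composition variable (e.g., monomer fraction) that the spectrometer observes directly, and that the twin supplies the predicted change per cycle. The function names and noise values are hypothetical.

```python
def kalman_update(x_pred, P_pred, z, R_meas):
    """Scalar Kalman measurement update; the measurement observes the state directly."""
    K = P_pred / (P_pred + R_meas)       # Kalman gain
    x_new = x_pred + K * (z - x_pred)    # state corrected toward the measurement
    P_new = (1.0 - K) * P_pred           # reduced uncertainty after the update
    return x_new, P_new, K

def run_filter(x0, P0, model_increments, measurements, Q_proc, R_meas):
    """Alternate model prediction and spectroscopic correction.

    model_increments: change in the state predicted by the twin for each cycle.
    measurements: processed spectroscopy values, or None when a spectrum is missing.
    """
    x, P = x0, P0
    history = []
    for dx, z in zip(model_increments, measurements):
        # Predict: apply the twin's increment; uncertainty grows by process noise Q
        x, P = x + dx, P + Q_proc
        if z is not None:
            x, P, _ = kalman_update(x, P, z, R_meas)
        history.append(x)
    return history

# Hypothetical data: the twin predicts a slow decline, the probe reads slightly lower
estimates = run_filter(
    x0=0.50, P0=0.01,
    model_increments=[-0.01, -0.01, -0.01, -0.01],
    measurements=[0.48, None, 0.45, 0.44],
    Q_proc=1e-4, R_meas=4e-4,
)
print([round(e, 4) for e in estimates])
```

The ratio of Q_proc to R_meas sets how strongly the twin trusts the probe over its own model; a multi-state reactor model would use the matrix form of the same equations.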

Q4: When simulating a shift in production grade on a twin-screw extruder digital twin, the specific mechanical energy (SME) prediction is erratic. What is the proper protocol for steady-state validation?

A: Follow this experimental protocol to collect data for calibrating your extruder digital twin under steady-state conditions.

Experimental Protocol: Extruder Steady-State Data Acquisition for Digital Twin Calibration

- Objective: Establish steady-state operating data for a given polymer formulation and screw configuration to validate and calibrate the extrusion digital twin.

- Materials: See "Research Reagent Solutions" table.

- Procedure:

1. Set all barrel zone temperatures, screw speed (RPM), and feeder rates to target values.

2. Allow the extruder to run for a minimum of 5 residence times (θ) to reach steady state, where θ = machine volume / volumetric output rate.

3. Once stable (melt pressure variation < ±2%), begin data logging over a 10-minute interval. Logged parameters: all barrel and die temperatures (°C), screw speed (RPM), main drive motor amperage (A), feed rate (kg/h), melt pressure at multiple barrel zones and die (bar), and melt temperature at die (°C).

4. Collect a minimum of three polymer samples from the strand at consistent intervals for subsequent offline analysis (e.g., MFR, viscosity).

5. Repeat the procedure at different screw speeds or feed rates to build a calibration dataset.

- Digital Twin Integration: Input the logged operating parameters into the twin. Compare the simulated motor torque/pressure and melt temperature to the logged data. Calibrate the material viscosity model parameters to minimize the error.
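The two numerical criteria in this protocol (five residence times, pressure variation within ±2%) are easy to automate. The sketch below is one possible implementation; the function names are hypothetical, the volume is assumed to be the filled volume of the machine, and the example numbers are invented.

```python
def mean_residence_time_s(filled_volume_cm3, feed_rate_kg_per_h, melt_density_g_per_cm3):
    """theta = machine volume / volumetric output rate, returned in seconds."""
    vol_rate_cm3_per_s = feed_rate_kg_per_h * 1000.0 / melt_density_g_per_cm3 / 3600.0
    return filled_volume_cm3 / vol_rate_cm3_per_s

def minimum_run_time_s(theta_s, n_residence_times=5):
    """Waiting time before logging starts (protocol: at least 5 residence times)."""
    return n_residence_times * theta_s

def is_steady(pressures_bar, tolerance=0.02):
    """True if every pressure reading lies within ±tolerance of the window mean."""
    mean_p = sum(pressures_bar) / len(pressures_bar)
    return all(abs(p - mean_p) <= tolerance * mean_p for p in pressures_bar)

# Invented example: 100 cm3 filled volume, 5 kg/h feed, melt density 1.0 g/cm3
theta = mean_residence_time_s(100.0, 5.0, 1.0)
print(f"theta = {theta:.0f} s, wait at least {minimum_run_time_s(theta):.0f} s")
print("steady:", is_steady([50.0, 50.5, 49.6]))
```

Twin-screw extruders usually run partially filled, so the effective volume may be well below the free volume of the barrel; a tracer test gives a more reliable θ than the geometric estimate.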

The Scientist's Toolkit

Table: Key Research Reagent Solutions for Polymerization & Extrusion Digital Twinning

| Item | Function in Context |

|---|---|

| Polymer Grade with Tracing Additives | Enables validation of mixing and residence time distribution models within the digital twin. |

| Calibrated Rheometer | Provides essential shear viscosity vs. rate data to parameterize the non-Newtonian flow models in reactor and extruder simulations. |

| In-line Spectrometer (Raman/NIR) | Delivers real-time compositional data (monomer conversion, copolymer ratio) for dynamic state estimation and model updating. |

| Data Historian Software (e.g., OSIsoft PI) | Aggregates time-series process data from sensors and control systems for synchronized input into the digital twin. |

| High-Fidelity Process Simulator (e.g., gPROMS, ANSYS Polyflow) | The core platform for building first-principles (mechanistic) models of reactors and extruders that form the basis of the digital twin. |

| Parameter Estimation Software Toolkit | Used to calibrate unknown model parameters (e.g., kinetic constants, heat transfer coefficients) by minimizing error between twin predictions and plant data. |

Q5: For a digital twin of a multi-zone tubular reactor, what is the recommended modeling approach to balance accuracy and computational speed for real-time deployment?

A: Use a hybrid modeling approach. Employ a fundamental 1D plug-flow reactor model with discretized zones for mass and energy balances. For complex rheology, integrate a reduced-order model (ROM) trained via machine learning on data from high-fidelity CFD simulations. This workflow enables data-driven optimization.

Figure: Hybrid Model Development for Real-Time Deployment
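A heavily simplified sketch of the hybrid structure is given below. It assumes pseudo-first-order monomer consumption, prescribed zone temperatures in place of a full energy balance, and a hypothetical `rom` callable standing in for the machine-learned reduced-order model. None of these choices come from a specific reactor; they only illustrate how the mechanistic and data-driven parts plug together.

```python
import math

R_GAS = 8.314  # J/(mol·K)

def simulate_pfr(zone_temps_K, zone_length_m, velocity_m_per_s, c_in, A, Ea, rom=None):
    """Zone-wise 1D plug-flow model with first-order kinetics.

    Returns one (temperature, cumulative conversion, rom output) tuple per zone.
    """
    c = c_in
    tau_zone = zone_length_m / velocity_m_per_s     # residence time per zone, s
    profile = []
    for T in zone_temps_K:
        k = A * math.exp(-Ea / (R_GAS * T))         # Arrhenius rate constant, 1/s
        c *= math.exp(-k * tau_zone)                # exact first-order decay in plug flow
        conversion = 1.0 - c / c_in
        # The surrogate supplies a quantity that is too expensive to compute online
        rom_out = rom(T, conversion) if rom is not None else None
        profile.append((T, conversion, rom_out))
    return profile

def toy_rom(T, conversion):
    """Placeholder surrogate (assumption): viscosity rising with conversion."""
    return 1.0e3 * (1.0 + 5.0 * conversion)

for T, x, eta in simulate_pfr([340.0, 350.0, 360.0], 0.5, 0.01, 1.0, 1.0e3, 4.0e4, rom=toy_rom):
    print(f"T = {T:.0f} K, conversion = {x:.3f}, surrogate viscosity = {eta:.0f}")
```

In a real deployment the surrogate would be trained offline on CFD results and validated against them before it replaces the full simulation in the loop.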

Navigating Complexities: Advanced Troubleshooting and Multi-Objective Optimization Strategies

Diagnosing and Reducing Batch-to-Batch Variability in Polymer Synthesis

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During a reversible addition-fragmentation chain-transfer (RAFT) polymerization, we observe high dispersity (Đ > 1.5) and inconsistent molecular weights between batches. What are the primary culprits?

A: High dispersity in RAFT often stems from improper reagent handling or reaction conditions. Key factors to investigate:

- Oxygen Inhibition: Trace oxygen acts as a radical scavenger. Ensure rigorous deoxygenation via multiple freeze-pump-thaw cycles or nitrogen sparging for at least 30 minutes prior to initiation.

- RAFT Agent Purity & Storage: Degraded RAFT agents (e.g., dithiobenzoates, trithiocarbonates) lose control. Store under inert atmosphere at -20°C and check purity via NMR before use.

- Initiator Half-life: Use an initiator (e.g., AIBN, V-70) with an appropriate half-life (t1/2) at your reaction temperature to maintain a constant radical flux.

- Monomer Purification: Inhibitors (e.g., MEHQ) in monomers must be removed by passing through an inhibitor-removal column or basic alumina.
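The initiator half-life point above can be checked numerically, because thermal decomposition is first order. The sketch below uses hypothetical half-lives, not data for any specific initiator; look up the real value for your initiator, solvent, and temperature.

```python
import math

def decomposition_rate_constant(half_life_h):
    """First-order rate constant k_d in 1/s from a half-life in hours."""
    return math.log(2) / (half_life_h * 3600.0)

def fraction_remaining(time_h, half_life_h):
    """Fraction of initiator left after time_h for first-order decomposition."""
    return 0.5 ** (time_h / half_life_h)

reaction_time_h = 6.0
for t_half in (0.2, 1.5, 20.0):  # hypothetical half-lives at reaction temperature
    left = fraction_remaining(reaction_time_h, t_half)
    print(f"t1/2 = {t_half:>4} h -> {left:.1%} initiator left after {reaction_time_h} h")
```

A very short half-life exhausts the initiator early (dead-end polymerization), while a very long one leaves most of it unreacted and gives a low radical flux; both make the outcome more sensitive to small temperature differences between batches.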

Q2: In polycondensation reactions for polyesters, we see variable inherent viscosity (IV) and inconsistent end-group content. How do we improve reproducibility?

A: Step-growth polymerizations are highly sensitive to stoichiometric imbalance and to incomplete removal of condensation byproducts.

- Monomer Stoichiometry: Use high-purity diols and diacids. Because an exact 1:1 balance is hard to hold in practice, consider using a slight, deliberate excess of one monomer followed by end-capping, and use the Carothers equation to target a specific degree of polymerization (DP).

- Water Removal: Inconsistent byproduct (water) removal leads to variable reaction rates and incomplete conversion. Use a Dean-Stark apparatus for azeotropic removal or a controlled, high-purity nitrogen purge with a consistent flow rate (e.g., 50 mL/min).

- Catalyst Activity: Catalyst (e.g., tin(II) 2-ethylhexanoate) concentration and activity are critical. Prepare fresh catalyst solutions in dry solvent and add via precise microliter syringe.
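The Carothers equation mentioned under monomer stoichiometry shows why small weighing errors matter so much. For a linear A-A/B-B system with stoichiometric ratio r ≤ 1 and extent of reaction p, the number-average degree of polymerization is Xn = (1 + r) / (1 + r - 2rp). A minimal sketch follows; the function names are illustrative.

```python
def carothers_dp(p, r=1.0):
    """Number-average degree of polymerization from the Carothers equation.

    p: extent of reaction of the limiting functional group (0 to 1).
    r: stoichiometric ratio of functional groups, defined so that r <= 1.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if not 0.0 < r <= 1.0:
        raise ValueError("r must be in (0, 1]")
    denom = 1.0 + r - 2.0 * r * p
    return float("inf") if denom == 0.0 else (1.0 + r) / denom

def ratio_for_target_dp(target_dp):
    """Stoichiometric ratio r that caps DP at target_dp at full conversion (p = 1)."""
    return (target_dp - 1.0) / (target_dp + 1.0)

for r in (1.0, 0.99, 0.95):
    print(f"r = {r}: DP at p = 0.99 is {carothers_dp(0.99, r):.1f}")
print(f"r for a DP cap of 50: {ratio_for_target_dp(50):.4f}")
```

Even a 1% imbalance cuts the achievable DP substantially at high conversion, which is why gravimetric charging and consistent byproduct removal dominate reproducibility here.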

Q3: Our emulsion polymerization batches show variable particle size (DLS) and colloidal stability. What steps should we take?

A: Emulsion variability is linked to surfactant dynamics and nucleation.

- Surfactant Critical Micelle Concentration (CMC): Ensure surfactant (e.g., SDS, Triton X-100) concentration is consistently above the CMC. Prepare surfactant solutions gravimetrically and use a consistent water source (deionized, same resistivity).

- Initiator Decomposition Rate: The decomposition rate of water-soluble initiators (e.g., KPS) is sensitive to both pH and temperature. Buffer the aqueous phase (e.g., pH 7-10) and use a calibrated, well-mixed water bath for temperature control (±0.5°C).

- Stirring Rate & Geometry: Inconsistent mixing affects nucleation and heat transfer. Use a fixed stirring rate (e.g., 300 rpm) and identical impeller geometry (e.g., 4-blade pitched turbine) across all batches.

Table 1: Key Process Parameters Impacting Batch Variability in Common Polymerizations

| Polymerization Mechanism | Critical Parameter | Typical Control Range | Impact on Variability if Uncontrolled |

|---|---|---|---|

| RAFT (Controlled Radical) | [Oxygen] Post-Deoxygenation | < 1 ppm | High: Leads to long inhibition period, broad Đ. |

| RAFT (Controlled Radical) | Radical Initiator t1/2 at Reaction Temperature | 1-2 hours | Medium-High: A half-life that is too short or too long destabilizes the radical flux. |

| Ring-Opening Polymerization (ROP) | Catalyst (e.g., Sn(Oct)2) Purity | > 99% | High: Impurities catalyze transesterification, broadening Đ. |

| Ring-Opening Polymerization (ROP) | Monomer (e.g., lactide) Water Content | < 50 ppm | High: Causes chain transfer/termination. |

| Emulsion (Free Radical) | Surfactant Concentration vs. CMC | 1.5-3 × CMC | High: Affects particle nucleation number and final size. |

| Emulsion (Free Radical) | Agitation Rate | ± 5% of setpoint | Medium: Affects mixing, heat transfer, and shear. |

Experimental Protocols for Diagnosis & Reduction

Protocol 1: Systematic RAFT Polymerization for Low Dispersity

- Objective: Synthesize poly(methyl methacrylate) with target Mn = 20,000 g/mol and Đ < 1.2.

- Materials: MMA (inhibitor removed), CPDB (RAFT agent), AIBN, anhydrous toluene.

- Method:

1. Charge: In a glovebox (N2 atmosphere), add MMA (10.0 mL, 93.6 mmol), CPDB (154 mg, 0.468 mmol), AIBN (7.7 mg, 0.0468 mmol), and toluene (10 mL) to a dried Schlenk flask. [Monomer]:[RAFT]:[I] = 200:1:0.1.

2. Deoxygenate: Seal flask, remove from glovebox. Perform 3 freeze-pump-thaw cycles (liquid N2, vacuum < 0.1 mbar, thaw under N2).

3. React: Immerse in pre-heated oil bath at 70°C (± 0.2°C) with magnetic stirring (500 rpm). React for 6 hours (target ~80% conversion).

4. Terminate: Cool in ice water, expose to air, and dilute with THF for analysis (SEC, ¹H NMR).
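Before running the batch, it helps to check the charge ratios and the theoretical molecular weight, Mn,th = ([M]0/[RAFT]0) × conversion × M(monomer) + M(RAFT agent). In the sketch below the molar masses of MMA (100.12 g/mol) and AIBN (164.21 g/mol) are standard values. The RAFT agent molar mass is not stated in the protocol, so the 221.3 g/mol used here is an assumption (it corresponds to 2-cyano-2-propyl dithiobenzoate) and should be replaced with the value for the agent actually used.

```python
M_MMA = 100.12    # g/mol, methyl methacrylate
M_AIBN = 164.21   # g/mol, azobisisobutyronitrile
M_RAFT = 221.3    # g/mol, ASSUMED RAFT agent molar mass (not given in the protocol)

def theoretical_mn(monomer_to_raft, conversion, m_monomer, m_raft):
    """Mn,th = ([M]0/[RAFT]0) * conversion * M_monomer + M_RAFT, in g/mol."""
    return monomer_to_raft * conversion * m_monomer + m_raft

def mass_mg(amount_mmol, molar_mass):
    """Mass in mg for an amount in mmol (mmol * g/mol = mg)."""
    return amount_mmol * molar_mass

# Amounts taken from the protocol charge step
n_mma, n_raft, n_aibn = 93.6, 0.468, 0.0468   # mmol

print(f"[M]:[RAFT] = {n_mma / n_raft:.0f}:1, [I]:[RAFT] = {n_aibn / n_raft:.2f}:1")
print(f"AIBN mass: {mass_mg(n_aibn, M_AIBN):.1f} mg")
for x in (0.8, 1.0):
    mn = theoretical_mn(n_mma / n_raft, x, M_MMA, M_RAFT)
    print(f"Mn,th at {x:.0%} conversion: {mn:.0f} g/mol")
```

Under these assumptions, the 200:1 ratio gives roughly 16,000 g/mol at 80% conversion and reaches about 20,000 g/mol only near full conversion, so it is worth confirming which conversion the Mn target refers to before comparing batches.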

Protocol 2: In-line Monitoring for Real-Time Diagnosis

- Objective: Use in-line FTIR to monitor monomer conversion in real-time, enabling reaction quenching at identical conversion points.

- Setup: Reactor fitted with a dip probe (SiComp, ATR crystal) connected to an FTIR spectrometer.

- Method:

1. Calibrate FTIR by correlating the decay of the monomer C=C stretch (~1635 cm⁻¹) to conversion via ¹H NMR for a preliminary batch.

2. For subsequent batches, run the reaction under identical conditions while collecting spectra every 2 minutes.

3. Quench the reaction via rapid cooling/air exposure once the real-time conversion reaches the pre-determined target (e.g., 75%).

- Rationale: This removes "time" as a variable, directly controlling for conversion, a major source of molecular weight variability.
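The calibration and quench logic of this protocol can be sketched in a few lines. The example assumes a linear relationship between the baseline-corrected C=C band absorbance and conversion, which should be verified against the NMR data. All numbers are invented and the function names are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def conversion_from_absorbance(absorbance, slope, intercept):
    """Apply the calibration and clip the result to the physical range 0 to 1."""
    return min(1.0, max(0.0, slope * absorbance + intercept))

def first_quench_index(absorbances, slope, intercept, target=0.75):
    """Index of the first spectrum at or above the target conversion, else None."""
    for i, a in enumerate(absorbances):
        if conversion_from_absorbance(a, slope, intercept) >= target:
            return i
    return None

# Invented calibration batch: C=C band absorbance vs. conversion from 1H NMR
cal_absorbance = [1.00, 0.80, 0.60, 0.40, 0.20]
cal_conversion = [0.00, 0.20, 0.40, 0.60, 0.80]
slope, intercept = fit_line(cal_absorbance, cal_conversion)

# Invented production batch, one spectrum every 2 minutes
stream = [0.90, 0.50, 0.30, 0.24, 0.20]
idx = first_quench_index(stream, slope, intercept, target=0.75)
if idx is not None:
    print(f"Quench at spectrum {idx} (about {idx * 2} min after the first spectrum)")
```

In practice the band is usually normalized to an internal reference peak that does not change during the reaction, which makes the calibration more robust to probe fouling and path-length drift.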

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Reducing Variability

| Item | Function & Importance for Reproducibility |

|---|---|

| Inhibitor Removal Columns (e.g., packed with basic alumina) | Removes phenolic inhibitors (MEHQ, BHT) from monomers reliably and consistently, superior to distillation for routine lab use. |

| Schlenk Flask & Freeze-Pump-Thaw Manifold | Enables rigorous deoxygenation of reaction mixtures, critical for all controlled radical polymerizations. |

| Calibrated Syringe Pumps | Allows precise, continuous addition of monomer, initiator, or catalyst solutions for semi-batch processes, improving heat and composition control. |

| Moisture Analyzer (e.g., Karl Fischer Titrator) | Quantifies water content in monomers and solvents (target < 100 ppm) to prevent unintended side-reactions in moisture-sensitive polymerizations (e.g., ROP, anionic). |

| NMR Internal Standard (e.g., 1,3,5-trioxane, mesitylene) | Enables accurate quantitative 1H NMR for end-group analysis and conversion, providing absolute Mn and verifying stoichiometry. |


FAQs & Troubleshooting Guides

Q1: During hot-melt extrusion, my formulation shows unexpected phase separation or color change. What could be the cause and how can I diagnose it?

A: This often indicates a chemical interaction (e.g., Maillard reaction, transesterification) between an API with reactive functional groups (e.g., primary amine) and a polymer/excipient (e.g., PEG, PVP). To diagnose:

- Perform a Compatibility Screen: Use a micro-scale, data-generating experiment.

- Protocol: Prepare intimate physical mixtures (1:1 ratio) of the API with each individual excipient and polymer. Place samples in sealed vials and subject them to stressed conditions (e.g., 40°C/75% RH, 60°C dry) for 1-2 weeks. Include controls (pure components).

- Analysis: Analyze weekly using DSC and FTIR. A disappearance of the API's melting peak in DSC or new peaks/peak shifts in FTIR indicates interaction.

- Data-Driven Check: Query available databases (e.g., Polymer Properties Database, Drug-Excipient Interaction Database) for known incompatibilities of your API's chemical class.