Accelerating Discovery: How AI-Driven High-Throughput Testing is Revolutionizing Polymer Composite Development

Abstract

This article provides a comprehensive overview of AI-driven high-throughput testing (HTT) for polymer composites, a transformative approach accelerating materials discovery and optimization. We explore the foundational principles of integrating artificial intelligence with robotic automation and advanced characterization. The article details current methodological workflows, from automated formulation and synthesis to AI-powered data analysis, specifically highlighting applications in biomedical materials like drug delivery systems and implants. We address critical challenges in data quality, model interpretability, and experimental design, offering optimization strategies. Finally, we examine validation frameworks and compare AI-HTT against traditional methods, quantifying gains in speed, cost, and predictive accuracy for researchers and drug development professionals.

The AI-HTT Paradigm: Core Concepts and Why It's a Game-Changer for Composites Research

Application Notes

The integration of Artificial Intelligence (AI), Robotics, and High-Throughput Experimentation (HTE) creates a closed-loop, autonomous research platform. In polymer composites research, this synergy accelerates the discovery and optimization of materials with tailored properties (e.g., mechanical strength, thermal stability). AI models, particularly machine learning (ML), predict promising formulation and processing parameters. Robotic automation systems execute these experiments at scale via HTE, generating high-fidelity data that is fed back to refine the AI models. This iterative cycle compresses development timelines from years to months.

Key Quantitative Benefits:

- Throughput: Automated liquid handling and parallel synthesis can increase experiment output by 10-100x compared to manual methods.

- Success Rate: ML-guided design can improve the hit rate of successful formulations by >30% over traditional design-of-experiment (DoE) approaches.

- Resource Efficiency: Automated platforms can reduce reagent consumption by up to 90% through miniaturization (e.g., microplate-based reactions).

Table 1: Quantitative Impact of AI-Robotics-HTE Integration in Composite Research

| Metric | Traditional Manual Approach | AI-Robotics-HTE Platform | Improvement Factor |

|---|---|---|---|

| Experiments per Week | 5-10 | 200-500 | 20-100x |

| Material per Formulation Test | 10-50 g | 0.1-1 g (microscale) | 10-100x less |

| Formulation Optimization Cycle Time | 3-6 months | 2-4 weeks | ~4-6x faster |

| Data Points Generated per Project | 10² - 10³ | 10⁴ - 10⁶ | 100-1000x more |

Experimental Protocols

Protocol 1: AI-Guided HTE for Thermoset Composite Formulation

Objective: To autonomously discover an epoxy resin composite with maximized fracture toughness and glass transition temperature (Tg).

Materials: See "The Scientist's Toolkit" below.

AI Design Phase:

- Define Search Space: Specify ranges for input variables: epoxy resin type (2-4 types), curing agent type (2-3 types), stoichiometric ratio (0.8-1.2), filler identity (e.g., 0-3 types), filler loading (0-15 wt%), and curing temperature (80-180°C).

- Initialize Model: Fit a Gaussian Process (GP) surrogate model, which Bayesian Optimization (BO) uses to rank candidate formulations, on an initial dataset (historical or from a space-filling DoE of ~50 experiments).

- Propose Experiments: The AI algorithm suggests the next batch of 24-96 formulations expected to maximize the multi-objective goal (Tg & toughness).
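
The space-filling DoE used to seed the model can be sketched in a few lines. The example below is a minimal Latin hypercube sampler written with the standard library only; the variable names in `BOUNDS` are illustrative, only the continuous variables from the search space are covered, and categorical choices such as resin type would need separate handling.

```python
import random

# Continuous ranges from the search-space step; the key names are illustrative.
BOUNDS = {
    "stoich_ratio": (0.8, 1.2),
    "filler_wt_pct": (0.0, 15.0),
    "cure_temp_c": (80.0, 180.0),
}


def latin_hypercube(bounds, n_samples, seed=0):
    """Return n_samples points. Each variable's range is cut into n_samples
    equal strata and every stratum is used exactly once."""
    rng = random.Random(seed)
    columns = {}
    for name, (low, high) in bounds.items():
        width = (high - low) / n_samples
        # One random point inside each stratum, then shuffle the stratum order
        # so that variables are paired at random.
        values = [low + (i + rng.random()) * width for i in range(n_samples)]
        rng.shuffle(values)
        columns[name] = values
    return [{name: columns[name][i] for name in bounds} for i in range(n_samples)]


design = latin_hypercube(BOUNDS, n_samples=50)
print(design[0])
```

Compared with plain random sampling, this guarantees that every variable is probed across its whole range even with only ~50 experiments.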

Robotic Execution Phase (HTE):

- Liquid Handling:

- The robotic platform receives the experimental list.

- Using positive displacement tips, it dispenses calculated masses of epoxy resins into designated wells of a 96-well micro-reactor array.

- It then adds precise amounts of curing agents and nanoparticle suspensions (fillers), followed by automated mixing (orbital shaking or tip-based agitation).

- Curing & Processing:

- The micro-reactor array is transferred via robotic arm to a programmable thermal curing station.

- Curing is performed per the specified temperature profile.

- Cured samples are automatically demolded.

Characterization & Data Flow:

- Automated Analysis: Robotic arms transfer samples to integrated characterization tools:

- Tg: Use automated dynamic mechanical analysis (miniature DMA) or differential scanning calorimetry (DSC) autosampler.

- Toughness: Perform automated micro-scale fracture tests or nanoindentation.

- Data Aggregation: Results are automatically parsed and structured into a database.

- Model Update: The aggregated data is used to retrain/update the AI model, which then proposes the next batch of experiments. The loop continues until performance targets are met.

Protocol 2: Autonomous Optimization of Processing Parameters for Short-Fiber Composites

Objective: Optimize injection molding parameters for polypropylene/short-carbon-fiber composites to maximize tensile strength.

Workflow:

- AI Setup: An ML model (e.g., Random Forest or Neural Network) is pre-trained on a dataset linking processing parameters (barrel temperature, mold temperature, injection speed, holding pressure) to tensile strength.

- Robotic Processing: An automated, computer-controlled micro-injection molding system executes molding cycles as dictated by the AI-proposed parameter sets, producing standardized tensile bars.

- Automated Mechanical Testing: A robotic system loads tensile bars into a universal testing machine, initiates tests, and extracts tensile strength (the optimization target), yield strength, modulus, and elongation.

- Iteration: Results are fed to the AI, which refines its parameter recommendations for the next cycle.

Table 2: Key Parameters & Ranges for Autonomous Processing Optimization

| Parameter | Lower Bound | Upper Bound | Optimization Step |

|---|---|---|---|

| Melt Temperature | 180 °C | 240 °C | 5 °C |

| Mold Temperature | 30 °C | 80 °C | 10 °C |

| Injection Speed | 50 mm/s | 200 mm/s | 25 mm/s |

| Holding Pressure | 400 bar | 800 bar | 50 bar |

| Cooling Time | 15 s | 40 s | 5 s |
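
To see why an AI-guided search is preferred over exhaustive testing, it helps to count the settings implied by Table 2. The sketch below enumerates the grid from the bounds and steps in the table; the dictionary keys are illustrative names, not identifiers from any molding controller.

```python
from itertools import product

# (lower bound, upper bound, step) copied from Table 2.
PARAMETERS = {
    "melt_temp_c": (180, 240, 5),
    "mold_temp_c": (30, 80, 10),
    "injection_speed_mm_s": (50, 200, 25),
    "holding_pressure_bar": (400, 800, 50),
    "cooling_time_s": (15, 40, 5),
}


def levels(low, high, step):
    """Inclusive list of grid values from low to high."""
    return list(range(low, high + 1, step))


grid = {name: levels(*spec) for name, spec in PARAMETERS.items()}

n_combinations = 1
for values in grid.values():
    n_combinations *= len(values)

print({name: len(values) for name, values in grid.items()})
print("full-factorial size:", n_combinations)  # 13 * 6 * 7 * 9 * 6 = 29484

# Iterate lazily rather than materialising every combination.
first_setting = dict(zip(grid, next(product(*grid.values()))))
print(first_setting)
```

At 29,484 possible settings, a full factorial sweep is impractical even for an automated cell, which is the motivation for letting the model choose each batch.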

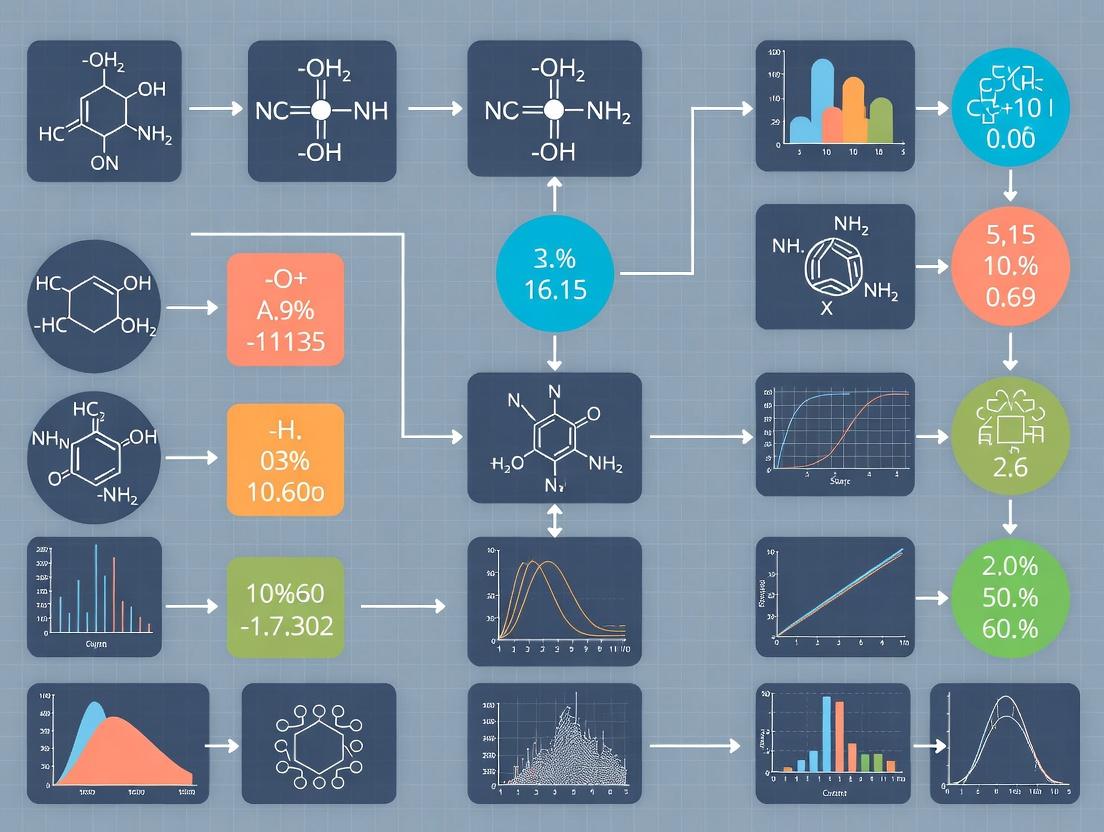

Diagrams

Diagram Title: AI Robotics HTE Closed Loop

Diagram Title: Polymer HTE Experimental Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials for AI-Driven Polymer HTE

| Item | Function/Description |

|---|---|

| Multi-Component Epoxy Resin Kits | Pre-formulated libraries of resins (diglycidyl ethers) and curing agents (amines, anhydrides) with varied chain lengths/reactivities for combinatorial formulation. |

| Functionalized Nanoparticle Dispersions | Stable colloidal suspensions of nanoparticles (SiO₂, CNC, graphene) in solvents or monomers, compatible with automated liquid handling. |

| Microplate-Based Reactor Arrays | Chemically resistant, 96- or 384-well plates capable of withstanding high temperatures and pressures for parallel synthesis and curing. |

| Positive Displacement Liquid Handler | Robotic liquid handling system with high precision (nL-μL) for dispensing viscous polymers and nanoparticle suspensions. |

| Automated Thermal Curing Station | Programmable oven or heating block capable of running multiple temperature profiles in parallel for microplate formats. |

| Integrated Rheometer/DMA Autosampler | Enables automated measurement of viscosity, gel time, and thermomechanical properties (Tg, modulus) from micro-samples. |

| High-Throughput Nanoindenter | Automated system for measuring localized mechanical properties (hardness, modulus, fracture toughness) across hundreds of sample spots. |

| Centralized Lab Information Management System (LIMS) | Software to track sample identity, experimental parameters, and analytical results, linking physical experiments to digital data. |

The development of advanced polymer composites is critically bottlenecked by serial, labor-intensive testing methods. Within the broader thesis on AI-driven high-throughput testing, these bottlenecks—physical specimen fabrication, slow mechanical testing, and manual data analysis—are the primary constraints. This document provides Application Notes and Protocols for implementing an integrated, automated workflow to overcome these barriers, enabling rapid property prediction and material optimization.

The following table summarizes the time differentials between traditional and AI-enhanced high-throughput (HT) methods for composite development.

Table 1: Time Comparison of Traditional vs. AI-Enhanced High-Throughput (HT) Methods

| Development Phase | Traditional Method Duration | AI-HT Method Duration | Speed Factor | Primary Enabling Technology |

|---|---|---|---|---|

| Formulation Screening | 2-4 weeks (manual batch mixing) | 24-48 hours | ~10-15x | Automated robotic dispensing, DoE-driven libraries |

| Specimen Fabrication | 1-2 weeks (hand lay-up, curing) | 1-2 days | ~5-7x | High-throughput curing ovens, automated tape laying (ATL) |

| Mechanical Testing | 1-2 weeks (serial ASTM tests) | 6-12 hours | ~10-20x | Automated testing systems (e.g., Instron AT), coupled with DIC |

| Data Analysis & Model Building | 3-6 months (manual correlation) | 1-2 weeks | ~8-12x | Machine Learning (ML) regression models (Random Forest, GPR) |

| Overall Cycle Time (Concept to Model) | 6-12 months | 3-6 weeks | ~8-15x | Integrated AI/ML & robotic automation platform |

Application Notes & Experimental Protocols

Protocol 3.1: High-Throughput Formulation & Miniaturized Specimen Fabrication

Objective: To rapidly produce a diverse library of composite formulations with varying resin chemistries, filler loading, and fiber orientations for downstream testing.

Materials: See "The Scientist's Toolkit" (Section 5).

Procedure:

- DoE Setup: Define the experimental space using a fractional factorial design (e.g., 3 factors: resin-to-hardener ratio, nanoclay wt%, short carbon fiber length). Use software (JMP, Modde) to generate 50-100 unique formulation recipes.

- Automated Dispensing:

- Load constituent materials into syringes on a robotic liquid handler.

- Program the handler to dispense precise masses/volumes according to the DoE matrix into individual wells of a high-temperature-resistant silicone mold (e.g., 10x10 array of dog-bone cavities).

- Execute dispensing under inert atmosphere if necessary.

- In-situ Mixing & Degassing:

- Employ a dual asymmetric centrifugal (DAC) mixer integrated into the platform to mix each cavity for 60 seconds.

- Apply a uniform vacuum cycle (5 mbar for 90 seconds) to the entire mold array to remove entrapped air.

- High-Throughput Curing:

- Transfer the filled mold to a programmable, multi-zone curing oven.

- Execute a thermal profile (e.g., 80°C for 2h, 120°C for 1h) monitored by an array of embedded micro-thermocouples.

- Automated Demolding & Labeling:

- After cooling, use a robotic gripper to extract each miniaturized dog-bone specimen.

- Apply a QR code label via automated printer for full traceability.
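
The dispensing step needs each DoE recipe converted into per-component masses. A minimal sketch follows, assuming the hardener ratio is expressed as grams of hardener per gram of resin and the filler loading as a weight percent of the total cavity mass. Both conventions and the function name are assumptions for illustration; a real platform may define them differently.

```python
def component_masses(total_mass_g, hardener_per_resin, filler_wt_pct):
    """Split a target cavity mass into resin, hardener, and filler masses.

    hardener_per_resin: mass ratio (g hardener per g resin), an assumed convention.
    filler_wt_pct: filler share of the total mass, in percent.
    """
    if not 0.0 <= filler_wt_pct < 100.0:
        raise ValueError("filler_wt_pct must be in [0, 100)")
    if hardener_per_resin < 0.0:
        raise ValueError("hardener_per_resin must be non-negative")
    filler = total_mass_g * filler_wt_pct / 100.0
    matrix = total_mass_g - filler                 # resin + hardener
    resin = matrix / (1.0 + hardener_per_resin)
    hardener = matrix - resin
    return {"resin_g": resin, "hardener_g": hardener, "filler_g": filler}


recipe = component_masses(total_mass_g=2.0, hardener_per_resin=0.25, filler_wt_pct=5.0)
print(recipe)  # roughly 1.52 g resin, 0.38 g hardener, 0.10 g filler
```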

Protocol 3.2: Automated Mechanical Testing Coupled with In-line Diagnostics

Objective: To perform rapid, parallel mechanical testing while capturing rich, multi-modal data for ML model training.

Procedure:

- Specimen Queueing: A conveyor or robotic arm presents specimens from Protocol 3.1 to the testing station in sequence.

- Automated Tensile Test:

- A vision system guides a gripper to mount the specimen into motorized wedge grips.

- Execute a standardized tensile test (ASTM D638-14 adapted for miniaturized specimens) at a constant crosshead speed (1 mm/min).

- Key Data Streams: Load (from load cell), displacement (from actuator encoder), and full-field strain (from 2D Digital Image Correlation - DIC).

- In-line Diagnostic Imaging:

- A synchronized macro-imaging system captures high-resolution images of the failure zone post-fracture.

- An optional inline portable spectrophotometer captures a color spectrum for visual property correlation.

- Data Aggregation: All data streams (load-displacement, DIC strain maps, failure images) are automatically tagged with the specimen's QR code and uploaded to a centralized database.

Protocol 3.3: AI-Driven Data Fusion & Predictive Model Building

Objective: To fuse multi-modal data and train ML models that predict composite properties from formulation and processing parameters.

Procedure:

- Feature Engineering:

- From Formulation: Resin type, hardener ratio, filler wt%, fiber length/orientation.

- From Processing: Cure temperature gradient, degassing efficiency (estimated from bubble count in pre-cure image).

- From Test Results: Ultimate tensile strength, Young's modulus, strain at break, fracture surface texture features (from image analysis).

- Model Training:

- Use a supervised learning framework (e.g., Scikit-learn, PyTorch).

- Input: Engineered features from formulation & processing.

- Output: Target mechanical properties (strength, modulus).

- Algorithm: Train a Gradient Boosting Regressor (e.g., XGBoost) or a Gaussian Process Regressor (GPR) on 70% of the data.

- Validation & Deployment:

- Validate model performance on the held-out 30% test set. Target R² > 0.85.

- Deploy the trained model as a web service to predict properties for new, virtual formulations, guiding the next design iteration.
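
The 70/30 hold-out and the R² acceptance check can be illustrated without any ML library. The sketch below uses only the standard library; the measured and predicted values are made-up numbers for illustration, and `holdout_split` is a hypothetical helper rather than part of any framework named above.

```python
import random


def holdout_split(rows, test_fraction=0.3, seed=0):
    """Shuffle a copy of rows and return (train, test)."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


train, test = holdout_split(range(10))       # 7 training rows, 3 test rows

# Illustrative hold-out results (MPa); not data from the article.
measured = [40.0, 50.0, 60.0, 70.0]
predicted = [42.0, 49.0, 61.0, 68.0]
score = r_squared(measured, predicted)
print(f"R^2 = {score:.3f}, meets target: {score > 0.85}")
```

R² must be computed on the held-out rows only; a score computed on training data says little about how the model will behave on new formulations.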

Workflow & Pathway Visualizations

Diagram Title: Traditional vs AI-HT Composite Development Workflow

Diagram Title: AI-Driven Data Fusion for Property Prediction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Equipment for AI-HT Composite Research

| Item Name | Category | Function in AI-HT Workflow |

|---|---|---|

| Robotic Liquid Handler (e.g., Hamilton MICROLAB STAR) | Automation | Precisely dispenses resin, hardener, and nano-fillers into multi-well molds according to DoE, enabling high-throughput formulation. |

| High-Temp Silicone Mold (Multi-cavity) | Consumable | Allows simultaneous curing of dozens of miniaturized specimens (dog-bone, puck) for parallel testing. |

| Programmable Multi-zone Curing Oven | Processing | Provides precise, uniform thermal profiles across a large batch of specimens, ensuring consistent cure kinetics. |

| Automated Testing System (e.g., Instron AutoX 750) | Testing | A robot performs sequential tensile/compression tests on mini-specimens around the clock, outputting structured data. |

| 2D Digital Image Correlation (DIC) System | Diagnostics | Captures full-field strain maps during mechanical testing, providing rich data for model training beyond standard metrics. |

| Graphite Nanoplatelets (xGnP) | Nanomaterial | A common conductive nanofiller used to modify electrical/thermal properties; a variable in formulation DoE. |

| Epoxy Resin System (e.g., Hexion EPIKOTE/EPIKURE) | Matrix Material | A benchmark thermoset polymer for composite research; its ratio with hardener is a key experimental variable. |

| Machine Learning Software Suite (e.g., Python with Scikit-learn, PyTorch) | Data Analysis | The core platform for fusing data, engineering features, and training predictive property models. |

Within the context of AI-driven high-throughput testing (HTT) for polymer composites research, the workflow integrates computational design, automated synthesis, robotic testing, and data analytics into a closed-loop system. This accelerates the discovery and optimization of composite materials for applications ranging from structural components to drug delivery systems.

Foundational Components of the Workflow

Digital Design & In-Silico Screening

This phase uses AI models to predict composite properties before physical synthesis.

Protocol 2.1.1: ML-Driven Virtual Screening of Composite Formulations

- Objective: To down-select promising polymer/filler/nanoparticle formulations from a vast virtual library.

- Data Curation: Assemble a historical dataset of composite formulations and their key properties (e.g., tensile strength, modulus, toughness, glass transition temperature).

- Model Training: Employ a gradient-boosting regression algorithm (e.g., XGBoost) or a graph neural network (GNN) to learn the structure-property relationships. GNNs are particularly effective for representing polymer chain interactions and filler dispersion.

- Virtual Screening: Use the trained model to predict properties for a vast combinatorial space of new formulations (e.g., 10,000+ candidates).

- Selection: Apply multi-objective optimization (e.g., Pareto front analysis) to identify the top 100-200 candidates that balance target properties for physical testing.
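
The Pareto front analysis in the selection step can be sketched as a simple non-dominated filter. The example assumes every objective is to be maximized and uses invented predictions; the field names are illustrative.

```python
def pareto_front(candidates, objectives):
    """Return the candidates not dominated by any other candidate.

    All objectives are maximised. A dominates B when A is at least as good
    on every objective and strictly better on at least one.
    """
    def dominates(a, b):
        return (all(a[k] >= b[k] for k in objectives)
                and any(a[k] > b[k] for k in objectives))

    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


# Illustrative model predictions; not data from the article.
predictions = [
    {"id": "F1", "strength_mpa": 80, "toughness_mj_m3": 20},
    {"id": "F2", "strength_mpa": 70, "toughness_mj_m3": 35},
    {"id": "F3", "strength_mpa": 65, "toughness_mj_m3": 30},  # dominated by F2
    {"id": "F4", "strength_mpa": 60, "toughness_mj_m3": 45},
]

front = pareto_front(predictions, ["strength_mpa", "toughness_mj_m3"])
print([c["id"] for c in front])  # ['F1', 'F2', 'F4']
```

This pairwise filter scales quadratically with the number of candidates, which is acceptable for a sketch; for libraries in the 10,000+ range a sort-based or library implementation would likely be preferable.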

Table 1: Performance Metrics of a Representative Virtual Screening Model

| Model Type | Training Set Size | Mean Absolute Error (Tensile Strength, MPa) | R² Score (Modulus Prediction) | Virtual Screening Throughput (Formulations/hr) |

|---|---|---|---|---|

| XGBoost | 5,000 data points | 4.2 | 0.91 | ~50,000 |

| Graph Neural Network | 5,000 data points | 2.8 | 0.96 | ~12,000 |

Automated High-Throughput Synthesis & Processing

Robotic platforms translate digital designs into physical samples.

Protocol 2.2.1: Robotic Dispensing and Film Casting for Microplate-Based Libraries

- Objective: To synthesize a library of 96 distinct composite films on a single microplate.

- Equipment Preparation: Calibrate a liquid-handling robot equipped with dynamic dispense heads. Prepare reservoirs of polymer solutions (e.g., PLA in DMF), filler suspensions (e.g., cellulose nanocrystals, graphene oxide), and crosslinker solutions.

- Formulation Dispensing: The robot follows a pre-defined recipe to dispense variable volumes of each component into individual wells of a silicone-walled polytetrafluoroethylene (PTFE) microplate. Inert atmosphere (N₂) is maintained for oxygen-sensitive reactions.

- In-Well Mixing: A dual-action mixing protocol is used: (a) robotic tip mixing (10 cycles), followed by (b) microplate agitation on an orbital shaker (500 rpm, 5 minutes).

- Solvent Evaporation/Curing: The microplate is transferred to a controlled environment chamber (e.g., 60°C, <10% RH, with exhaust) for 12 hours to form solid films.

Robotic Physical Testing and Characterization

Automated systems perform mechanical and functional tests on synthesized libraries.

Protocol 2.3.1: Automated Tensile Testing of Microplate-Synthesized Films

- Objective: To perform miniaturized tensile tests on all 96 composite films in an unattended sequence.

- Sample Transfer: A 6-axis articulated robot arm, equipped with a vacuum gripper, lifts individual films from the microplate and transfers them to a micro-tensile stage.

- Gripping & Alignment: The stage features self-tightening, pneumatic grips. A machine vision system confirms sample alignment and measures the exact gauge length and cross-sectional area.

- Testing: The stage executes a standardized tensile protocol (e.g., 0.1 mm/s displacement rate until failure).

- Data Capture: Force and displacement are recorded at 100 Hz. The system automatically calculates Young's modulus, ultimate tensile strength, and strain at break.
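
The automatic calculation in the data capture step might look like the sketch below. It assumes engineering stress and strain, strain derived from stage displacement over the measured gauge length, and a modulus fitted by least squares over an assumed elastic window (`elastic_strain_limit`, a hypothetical parameter). The force and displacement values are synthetic.

```python
def tensile_metrics(force_n, displacement_mm, gauge_length_mm, area_mm2,
                    elastic_strain_limit=0.005):
    """Return Young's modulus (MPa), ultimate tensile strength (MPa),
    and strain at break from a force-displacement record."""
    stress = [f / area_mm2 for f in force_n]               # N/mm^2 equals MPa
    strain = [d / gauge_length_mm for d in displacement_mm]

    # Least-squares slope through the points inside the assumed elastic window.
    window = [(e, s) for e, s in zip(strain, stress) if e <= elastic_strain_limit]
    if len(window) < 2:
        raise ValueError("not enough points in the elastic window")
    mean_e = sum(e for e, _ in window) / len(window)
    mean_s = sum(s for _, s in window) / len(window)
    sxx = sum((e - mean_e) ** 2 for e, _ in window)
    if sxx == 0.0:
        raise ValueError("elastic window has no strain variation")
    modulus = sum((e - mean_e) * (s - mean_s) for e, s in window) / sxx

    return {
        "modulus_mpa": modulus,
        "uts_mpa": max(stress),
        "strain_at_break": strain[-1],   # last recorded point before failure
    }


# Synthetic record: linear up to 0.5% strain, then yielding and failure.
displacement = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05, 0.10, 0.20, 0.30]
force = [0.0, 6.0, 12.0, 18.0, 24.0, 30.0, 45.0, 50.0, 48.0]
result = tensile_metrics(force, displacement, gauge_length_mm=10.0, area_mm2=2.0)
print(result)
```

Displacement-based strain includes machine and grip compliance, so a modulus obtained this way is best treated as a relative ranking metric unless it is calibrated against reference films.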

Table 2: Output from a Single AI-HTT Campaign on Polymer-Clay Nanocomposites

| Formulation ID | Clay Loading (wt%) | Predicted Modulus (GPa) | Measured Modulus (GPa) | Deviation (%) | Measured Toughness (MJ/m³) |

|---|---|---|---|---|---|

| NC_23 | 2.5 | 3.45 | 3.51 | +1.7 | 45.2 |

| NC_67 | 5.0 | 4.20 | 3.98 | -5.2 | 38.7 |

| NC_89 | 7.5 | 5.10 | 4.22 | -17.3 | 22.1 |
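
The Deviation column above appears consistent with the signed relative error (measured − predicted) / predicted. Under that assumption, the values can be recomputed as follows.

```python
# (formulation ID, predicted modulus in GPa, measured modulus in GPa) from Table 2.
ROWS = [
    ("NC_23", 3.45, 3.51),
    ("NC_67", 4.20, 3.98),
    ("NC_89", 5.10, 4.22),
]


def percent_deviation(predicted, measured):
    """Signed deviation of the measurement from the prediction, in percent."""
    return (measured - predicted) / predicted * 100.0


deviations = [f"{percent_deviation(pred, meas):+.1f}" for _, pred, meas in ROWS]
for (formulation_id, _, _), dev in zip(ROWS, deviations):
    print(f"{formulation_id}: {dev}%")
```

The gap widens from about 2% at 2.5 wt% clay to about 17% at 7.5 wt%, which suggests the model is less reliable at high loading and that this region is a natural target for further experiments.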

Data Integration, AI Retraining, and Closed-Loop Optimization

Experimental results feed back to improve the digital models.

Protocol 2.4.1: Closing the AI-HTT Loop with Bayesian Optimization

- Objective: To refine the formulation search space using data from the last physical HTT campaign.

- Data Fusion: Append the new experimental results (Table 2) to the master training dataset.

- Model Retraining: Fine-tune the primary property prediction model with the expanded dataset.

- Acquisition Function: Employ a Bayesian optimization loop. The model's prediction uncertainty and predicted property value are combined via an acquisition function (e.g., Expected Improvement) to propose the next set of 96 formulations.

- Proposal: The system outputs a new set of formulations that either exploit known high-performance regions or explore high-uncertainty regions of the design space.
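
For a property that is being maximized, the Expected Improvement acquisition function has a closed form when the model's prediction is Gaussian. A minimal sketch is shown below; the exploration margin `xi` and the example numbers are assumptions for illustration.

```python
import math


def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Expected Improvement for maximisation under a Gaussian prediction.

    mean, std: predicted value and its uncertainty for one candidate.
    best_so_far: best measured value in the current dataset.
    xi: optional margin that pushes the search toward exploration.
    """
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        return max(improvement, 0.0)
    z = improvement / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + std * pdf


best = 4.0  # GPa, best modulus measured so far (illustrative)
ei_confident = expected_improvement(mean=4.0, std=0.1, best_so_far=best)
ei_uncertain = expected_improvement(mean=3.8, std=0.6, best_so_far=best)
print(round(ei_confident, 4), round(ei_uncertain, 4))
```

In this example the candidate with the lower predicted modulus but larger uncertainty scores higher, which is how the acquisition function trades exploitation against exploration. Ranking all candidates by this score and taking the top 96 would give one plate's worth of proposals.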

Visualizing the Integrated AI-HTT Workflow

Diagram 1: AI-HTT Closed-Loop for Composites

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials & Reagents for AI-HTT Polymer Composites Research

| Item | Function/Application in AI-HTT Workflow | Example (Supplier Specifics Excluded) |

|---|---|---|

| Functionalized Nanoparticle Suspensions | Provide uniform, stable dispersions of fillers (e.g., SiO₂, clay, CNT) for reliable robotic dispensing. | Aminosilane-coated silica nanoparticles (10% w/v in ethanol). |

| Polymer Resin Libraries | A curated set of base polymers with varied backbone chemistry (e.g., epoxies, acrylates, PLGA) for combinatorial formulation. | Photocurable acrylate oligomer kit (4 viscosities, 6 functionalities). |

| High-Throughput Screening Additives | Pre-formulated master stocks of plasticizers, initiators, catalysts, or drugs for controlled release studies. | Thermal initiator (AIBN) solutions in DMSO at 5 concentrations. |

| Surface-Treated Microplates | Specialized substrates for synthesis and testing. Non-stick coatings ensure sample recovery. | 96-well PTFE/Silicone composite deep-well plates. |

| Calibration Standards Kit | Materials with certified mechanical/thermal properties for validating robotic testing platforms. | Polyurethane film array with traceable modulus (0.1-3.0 GPa). |

Application Notes

In AI-driven high-throughput testing (HTT) for polymer composites, the integration of multi-faceted property datasets is critical for predictive modeling and accelerated discovery. These primary data types form the foundational layers for training robust machine learning algorithms.

1. Mechanical Property Datasets: These quantify the response of composite materials to applied forces. In HTT, automated systems like combinatorial robotics perform micro-scale tensile, flexural, and hardness tests on thousands of discrete formulation patches. AI models correlate these data with processing parameters and compositional gradients to predict bulk performance and identify failure envelopes.

2. Thermal Property Datasets: Essential for applications in extreme environments, these datasets include Glass Transition Temperature (Tg), Thermal Decomposition Onset (Td), and Coefficient of Thermal Expansion (CTE). High-throughput Differential Scanning Calorimetry (DSC) and Thermogravimetric Analysis (TGA) modules, integrated into automated workflows, generate data that AI uses to infer structural stability and cure kinetics.

3. Chemical Property Datasets: This encompasses degradation resistance (e.g., to solvents, acids, bases), sorption kinetics, and catalytic activity. Spectroscopic (FTIR, Raman) and chromatographic (GC-MS) endpoints from parallelized exposure experiments feed AI models to predict long-term chemical stability and reactivity.

4. Biological Property Datasets (for Biocomposites & Drug Delivery Systems): For composites in biomedical applications, datasets include protein adsorption profiles, cytotoxicity (IC50), hemocompatibility, and drug release kinetics. Automated cell culture handlers and plate readers generate high-dimensional biological response data. AI integrates this with material properties to design composites with tailored bio-interfacial characteristics.

Table 1: Core Primary Data Types and High-Throughput Measurement Techniques

| Property Type | Key Parameters | Exemplary HTT Technique | Typical Output Range | AI Model Utility |

|---|---|---|---|---|

| Mechanical | Tensile Modulus, Ultimate Strength, Elongation at Break | Automated Micro-tensile Testing | Modulus: 0.1 GPa - 300 GPa | Structure-Property Prediction |

| Thermal | Tg, Td, CTE | High-Throughput DSC/TGA | Tg: -50°C to 400°C | Stability & Processing Optimization |

| Chemical | Degradation Rate, Equilibrium Swelling | Parallelized Spectroscopic Analysis | Degradation %: 0-100% over time | Lifetime Prediction |

| Biological | IC50, Hemolysis %, Drug Release Half-life (t½) | Automated Live/Dead Assays, HPLC | IC50: 0.1 - 1000 µg/mL | Biocompatibility Screening |

Experimental Protocols

Protocol 1: High-Throughput Mechanical Characterization of Polymer Composite Libraries

Objective: To determine tensile modulus and yield strength for 96 distinct composite formulations in a single automated run.

- Library Fabrication: Using an automated dispenser, prepare a gradient library of resin and filler (e.g., carbon fiber, silica) onto a patterned silicone substrate with 96 independent wells. Cure using a UV-photopolymerization station.

- Sample Transfer: A robotic arm transfers each cured puck to a miniaturized tensile stage (e.g., equipped with a 500 N load cell).

- Automated Testing: The stage engages and extends the sample at a constant crosshead speed of 1 mm/min. Force and displacement are recorded until fracture.

- Data Processing: Software calculates stress-strain curves for each sample, extracting modulus, yield strength, and elongation. Data is compiled into a structured CSV file for AI ingestion.

Protocol 2: High-Throughput Thermal Stability Screening

Objective: To determine the decomposition temperature (Td at 5% weight loss) for 48 composite variants.

- Sample Loading: Aliquot ~5 mg of each powdered composite sample into wells of a specialized TGA autosampler carousel.

- Programmed Run: The autosampler sequentially introduces samples into the TGA furnace under a nitrogen atmosphere (50 mL/min). A temperature ramp from 30°C to 800°C at 20°C/min is executed for each.

- Automated Analysis: Software identifies the temperature at 5% mass loss for each thermogram. The data matrix (Formulation ID vs. Td) is exported.
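
The automated analysis step amounts to finding where each thermogram crosses 95% residual mass. A minimal sketch using linear interpolation between adjacent points is given below; it assumes mass is already normalized to percent of the initial sample mass, and the thermogram values are synthetic.

```python
def td_at_mass_loss(temps_c, mass_pct, loss_pct=5.0):
    """Temperature at which residual mass first drops to (100 - loss_pct) %.

    Uses linear interpolation between adjacent thermogram points.
    Returns None if the threshold is never reached in the run.
    """
    target = 100.0 - loss_pct
    for i in range(1, len(temps_c)):
        m_prev, m_curr = mass_pct[i - 1], mass_pct[i]
        if m_prev > target >= m_curr:
            fraction = (m_prev - target) / (m_prev - m_curr)
            return temps_c[i - 1] + fraction * (temps_c[i] - temps_c[i - 1])
    return None


# Synthetic thermogram: temperature (deg C) and residual mass (%).
temps = [30.0, 200.0, 300.0, 350.0, 400.0]
mass = [100.0, 99.5, 98.0, 94.0, 70.0]
td5 = td_at_mass_loss(temps, mass)
print(td5)  # 337.5
```

Real thermograms are sampled far more densely, so the interpolation error is small; early mass loss from moisture or residual solvent can trigger the threshold prematurely and may need to be handled separately.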

Protocol 3: High-Throughput Cytotoxicity Screening (MTT Assay) for Biocomposites

Objective: To measure cell viability (%) of human fibroblast cells after 24-hour exposure to composite leachates.

- Leachate Preparation: In a 96-well plate, immerse sterile composite discs (n=3 per formulation) in cell culture medium (200 µL/well). Incubate (37°C, 5% CO2) for 24 hours.

- Cell Exposure: Seed fibroblasts (10,000 cells/well) in a new 96-well plate and allow them to attach (typically overnight). Aspirate medium and replace with 100 µL of filtered leachate from Step 1. Include control wells (cells + medium only).

- Viability Quantification: After 24h, add 10 µL of MTT reagent (5 mg/mL) to each well. Incubate for 4 hours. Add 100 µL of solubilization solution and incubate overnight.

- Data Acquisition: Measure absorbance at 570 nm using a plate reader. Calculate viability % relative to control. Data is structured for dose-response AI modeling.
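
The viability calculation can be sketched as below. The protocol only specifies normalization to the control wells; the optional blank subtraction (`blank_abs`, absorbance of wells without cells) is a common refinement added here as an assumption, and the absorbance readings are invented.

```python
from statistics import mean


def viability_percent(sample_abs, control_abs, blank_abs=0.0):
    """Viability of each sample well relative to the mean control well.

    blank_abs: absorbance of a cell-free blank; the default of 0.0 reproduces
    plain normalisation to control.
    """
    control_signal = mean(control_abs) - blank_abs
    if control_signal <= 0.0:
        raise ValueError("control signal must exceed the blank")
    return [(a - blank_abs) / control_signal * 100.0 for a in sample_abs]


# Illustrative A570 readings for one formulation (n=3) and the controls.
sample_wells = [0.62, 0.60, 0.64]
control_wells = [0.80, 0.82, 0.78]
viability = viability_percent(sample_wells, control_wells, blank_abs=0.05)
print(f"mean viability: {mean(viability):.1f}%")  # 76.0%
```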

Visualizations

Diagram Title: AI-Driven HTT Workflow Integrating Primary Data Types

Diagram Title: Generic HTT Protocol Flow for Multi-Modal Data Generation

The Scientist's Toolkit: Key Research Reagent Solutions & Materials

Table 2: Essential Materials for AI-Driven HTT in Polymer Composites

| Item Name | Category | Function in HTT Context |

|---|---|---|

| Combinatorial Inkjet Dispenser | Fabrication Robot | Precisely deposits picoliter volumes of resins, fillers, and additives to create gradient composition libraries on a single substrate. |

| Photopolymerizable Resin Library | Chemical Reagent | A suite of acrylate, epoxy, or other monomers with varying backbone chemistries, enabling rapid curing (seconds) for HT sample prep. |

| Functionalized Nanofiller (e.g., SiO2, CNT) | Material | Provides mechanical reinforcement or electrical conductivity; surface functionalization ensures compatibility and creates a tunable variable. |

| High-Throughput TGA/DSC Autosampler | Analytical Hardware | Allows sequential analysis of up to 50+ samples without manual intervention, generating consistent thermal stability datasets. |

| 96-Well Microtensile Tester | Mechanical Tester | Miniaturized mechanical test stage that measures stress-strain of multiple micro-samples in rapid succession. |

| Multi-Parameter Plate Reader | Bio-Analytical Tool | Measures absorbance, fluorescence, and luminescence in 96- or 384-well plates, automating biological endpoint readouts (e.g., MTT, ELISA). |

| Automated Cell Culture System | Biology Tool | Maintains and seeds cell lines for biocompatibility assays with minimal manual handling, ensuring assay consistency. |

| Structured Data Pipeline Software | Software | Automates the extraction, cleaning, and formatting of raw instrument data into AI-ready tables (e.g., CSV files with standardized headers). |

1.0 Introduction & Thesis Context

This document provides foundational knowledge on core Artificial Intelligence (AI) methodologies, namely Machine Learning (ML), Deep Learning (DL), and Active Learning (AL) loops, framed within the critical need for accelerated discovery in materials science. The broader thesis posits that integrating these AI-driven approaches into high-throughput testing (HTT) frameworks is transformative for polymer composites research. By predicting structure-property relationships, optimizing formulations, and intelligently guiding experiments, AI reduces the cost and time of the development cycle, enabling rapid innovation for applications ranging from lightweight automotive components to advanced drug delivery systems.

2.0 Foundational Model Definitions & Quantitative Comparison

Table 1: Comparison of Foundational AI Models

| Model Type | Core Principle | Typical Architecture | Data Requirement | Common Use-Case in Composites Research |

|---|---|---|---|---|

| Machine Learning (ML) | Learns patterns from structured feature data using statistical algorithms. | Random Forest, SVM, Gradient Boosting. | Moderate (100s-1000s of samples). Feature engineering critical. | Predicting tensile strength from formulation ratios (filler %, resin type). |

| Deep Learning (DL) | Learns hierarchical feature representations directly from raw or complex data via neural networks. | Convolutional Neural Networks (CNNs), Graph Neural Networks (GNNs). | Large (1000s-1M+ samples). Computationally intensive. | Analyzing micro-CT scan images for defect detection or predicting properties from molecular graph structures. |

| Active Learning (AL) Loop | An iterative, human-in-the-loop framework where the model selects the most informative data points for labeling. | Query strategy (e.g., uncertainty sampling) + Base model (ML or DL). | Starts small, grows strategically. Maximizes information gain per experiment. | Guiding the next set of HTT synthesis trials to optimally explore the formulation space for a target property. |

3.0 Experimental Protocols

Protocol 3.1: Building a Baseline ML Model for Property Prediction

Objective: To predict a target property (e.g., Young's Modulus) of a polymer composite from curated formulation and processing features.

Materials: Historical experimental dataset, Python environment (scikit-learn, pandas).

- Feature Curation: Compile structured data: matrix polymer ID (encoded), filler type & weight %, curing temperature, mixing speed.

- Data Preprocessing: Split data 80/20 for training/test. Scale numerical features (StandardScaler, fitted on the training split only to avoid leakage). Encode categorical variables.

- Model Training: Train a Random Forest Regressor. Use 5-fold cross-validation on the training set to tune hyperparameters (n_estimators, max_depth).

- Validation: Evaluate on the held-out test set using Mean Absolute Error (MAE) and R² scores.

- Interpretation: Analyze feature importance scores from the trained model to identify key drivers of the target property.
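
The five steps above can be sketched with scikit-learn as follows. This is a minimal illustration, not a reference implementation: the dataset is synthetic (a made-up linear relationship plus noise stands in for historical data), NumPy arrays are used in place of pandas for brevity, and the hyperparameter grid is deliberately small.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, r2_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Synthetic stand-in for a historical dataset (illustrative values only).
rng = np.random.default_rng(0)
n = 300
polymer_id = rng.integers(0, 3, n)        # encoded matrix polymer
filler_wt = rng.uniform(0, 15, n)         # wt%
cure_temp = rng.uniform(80, 180, n)       # deg C
mix_speed = rng.uniform(500, 2000, n)     # rpm
modulus = (2.0 + 0.3 * polymer_id + 0.12 * filler_wt
           + 0.004 * cure_temp + rng.normal(0, 0.1, n))   # GPa

X = np.column_stack([polymer_id, filler_wt, cure_temp, mix_speed])
X_train, X_test, y_train, y_test = train_test_split(
    X, modulus, test_size=0.2, random_state=0)

# Column 0 is categorical; columns 1-3 are numeric.
preprocess = ColumnTransformer([
    ("cat", OneHotEncoder(handle_unknown="ignore"), [0]),
    ("num", StandardScaler(), [1, 2, 3]),
])
pipeline = Pipeline([
    ("prep", preprocess),
    ("rf", RandomForestRegressor(random_state=0)),
])

# 5-fold cross-validation on the training set only.
search = GridSearchCV(
    pipeline,
    param_grid={"rf__n_estimators": [100, 200], "rf__max_depth": [None, 8]},
    cv=5,
    scoring="neg_mean_absolute_error",
)
search.fit(X_train, y_train)

y_pred = search.predict(X_test)
mae = mean_absolute_error(y_test, y_pred)
r2 = r2_score(y_test, y_pred)
importances = search.best_estimator_.named_steps["rf"].feature_importances_
print(search.best_params_, round(mae, 3), round(r2, 3))
```

Placing the scaler and encoder inside the pipeline means they are refitted on each cross-validation fold, so no information from validation or test rows reaches the preprocessing. Tree ensembles do not strictly need scaled inputs; the scaler is kept here only to mirror the protocol.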

Protocol 3.2: Implementing a Convolutional Neural Network (CNN) for Microstructure Analysis

Objective: To classify SEM images of composite fractures as "brittle" or "ductile."

Materials: Labeled SEM image dataset, GPU-enabled Python environment (TensorFlow/PyTorch).

- Data Preparation: Resize all images to uniform dimensions (e.g., 224x224). Apply data augmentation (rotation, flips) to training set only. Normalize pixel values.

- Model Architecture: Implement a sequential CNN: Input -> 2x (Conv2D + ReLU + MaxPooling2D) -> Flatten -> Dense(128, ReLU) -> Dropout(0.5) -> Dense(2, Softmax).

- Training: Use categorical cross-entropy loss and Adam optimizer. Train for 50 epochs with batch size 32, validating on a 15% hold-out set.

- Evaluation: Assess performance using test set accuracy, precision, recall, and generate a confusion matrix.
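Before building the network in TensorFlow or PyTorch, it can help to trace tensor shapes and parameter counts through the architecture listed above. The sketch below does this in plain Python. The protocol does not specify kernel size, filter counts, padding, or channel count, so 3x3 kernels, 32 and 64 filters, no padding, and single-channel (grayscale) input are assumptions chosen for illustration.

```python
def conv2d(shape, filters, kernel=3):
    """Output shape and parameter count of an unpadded, stride-1 convolution."""
    height, width, channels = shape
    params = kernel * kernel * channels * filters + filters  # weights + biases
    return (height - kernel + 1, width - kernel + 1, filters), params


def max_pool(shape, size=2):
    """Output shape of non-overlapping max pooling (no trainable parameters)."""
    height, width, channels = shape
    return (height // size, width // size, channels), 0


def dense(n_inputs, units):
    """Output width and parameter count of a fully connected layer."""
    return units, n_inputs * units + units


def trace_cnn(input_shape=(224, 224, 1), filters=(32, 64), hidden=128, classes=2):
    """List (layer name, output shape, parameter count) for the sequential CNN."""
    shape, layers = input_shape, []
    for n_filters in filters:
        shape, params = conv2d(shape, n_filters)
        layers.append(("Conv2D + ReLU", shape, params))
        shape, params = max_pool(shape)
        layers.append(("MaxPooling2D", shape, params))
    flat = shape[0] * shape[1] * shape[2]
    layers.append(("Flatten", (flat,), 0))
    width, params = dense(flat, hidden)
    layers.append(("Dense + ReLU", (width,), params))
    layers.append(("Dropout(0.5)", (width,), 0))
    out, params = dense(width, classes)
    layers.append(("Dense + Softmax", (out,), params))
    return layers


layers = trace_cnn()
total_params = sum(params for _, _, params in layers)
for name, shape, params in layers:
    print(f"{name:16s} {str(shape):18s} {params:>12,d}")
print("total parameters:", f"{total_params:,d}")
```

Under these assumptions almost all parameters sit in the first dense layer after Flatten, which is why additional pooling or global average pooling is often considered when labeled SEM images are scarce.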

Protocol 3.3: Establishing an Active Learning Loop for Formulation Optimization

Objective: To minimize the number of experiments needed to discover a composite formulation with >90% target performance.

Materials: Initial small dataset (<50 samples), HTT platform capable of preparing and testing formulations based on model requests.

- Initialization: Train a base model (e.g., Gaussian Process Regressor) on the initial small dataset.

- Query & Selection: Use an uncertainty sampling query strategy. The model identifies n (e.g., 5) formulations within the design space where its prediction variance is highest.

- Experiment & Labeling: The HTT platform executes the n proposed experiments, and the resulting properties are measured.

- Model Update: The newly acquired (formulation, property) pairs are added to the training dataset. The base model is retrained.

- Iteration: Steps 2-4 are repeated until a formulation meeting the >90% target performance criterion is identified or the experimental budget is exhausted.
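The loop above can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions: the Gaussian Process posterior is hand-written with a fixed RBF kernel (in practice a library model such as scikit-learn's `GaussianProcessRegressor` would replace it), `run_experiment` is a hypothetical stand-in for the HTT platform, and the two-variable design space, target value, and budget are invented for the example.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between the rows of a and b."""
    sq_dist = (np.sum(a ** 2, axis=1)[:, None]
               + np.sum(b ** 2, axis=1)[None, :]
               - 2.0 * a @ b.T)
    return np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and variance of a zero-mean GP with unit prior variance."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    solved = np.linalg.solve(k_tt, k_tq)
    mean = solved.T @ y_train
    var = 1.0 - np.sum(k_tq * solved, axis=0)
    return mean, np.maximum(var, 0.0)


def run_experiment(x):
    """Hypothetical HTT measurement: returns a property for each formulation."""
    return np.sin(3.0 * x[:, 0]) + 0.5 * x[:, 1]


def active_learning(n_init=5, batch=5, budget=30, target=1.2, seed=0):
    """Uncertainty sampling until the target is met or the budget is spent."""
    rng = np.random.default_rng(seed)
    pool = rng.uniform(0.0, 1.0, size=(200, 2))       # candidate formulations
    labeled = rng.choice(len(pool), n_init, replace=False).tolist()
    y = run_experiment(pool[labeled]).tolist()
    while len(labeled) < budget and max(y) < target:
        unlabeled = np.setdiff1d(np.arange(len(pool)), labeled)
        _, var = gp_posterior(pool[labeled], np.array(y), pool[unlabeled])
        picks = unlabeled[np.argsort(var)[-batch:]]   # highest predictive variance
        labeled.extend(picks.tolist())                # "run" and label the batch
        y.extend(run_experiment(pool[picks]).tolist())
    return pool[labeled], np.array(y)


X_tested, y_measured = active_learning()
print(len(y_measured), "experiments, best value", round(float(y_measured.max()), 3))
```

Pure uncertainty sampling explores the design space but does not steer toward high-performing regions; when the goal is to hit a performance target, an acquisition function that also uses the predicted mean (for example expected improvement) is a common alternative.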

4.0 Visualization: AI-Driven HTT Workflow

Title: Active Learning Loop for Composite Discovery

5.0 The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Computational Tools for AI-Driven Composites Research

| Item / Solution | Function / Role | Example in Protocol |

|---|---|---|

| High-Throughput Robotics Platform | Automates the precise dispensing, mixing, and curing of polymer resin and filler components to generate large, consistent sample libraries. | Protocol 3.3: Executes synthesis of AL-proposed formulations. |

| Automated Mechanical Testers | Integrates with sample libraries to perform rapid, sequential tensile, flexural, or impact tests, generating quantitative property data. | Protocol 3.1 & 3.3: Provides labeled property data (Young's Modulus) for model training. |

| Scikit-learn Library | Provides robust, accessible implementations of classic ML algorithms (Random Forest, SVM, GP) for baseline modeling and AL strategies. | Protocol 3.1 & 3.3: Used for building and training the predictive regression model. |

| PyTorch / TensorFlow Framework | Open-source libraries for building and training complex DL models (CNNs, GNNs) on GPU hardware, enabling image and graph data analysis. | Protocol 3.2: Used to construct, train, and evaluate the CNN for image classification. |

| Graph Neural Network (GNN) Library (e.g., PyTorch Geometric) | Specialized toolkit for building models that operate directly on graph-structured data, such as molecular representations of polymers. | Predicting properties from the chemical graph of a monomer or filler. |

| ALiPy Python Toolkit | Provides standardized implementations of various Active Learning query strategies (uncertainty, diversity, query-by-committee). | Protocol 3.3: Facilitates the selection of the most informative samples from the pool. |

Application Notes

The integration of AI-driven high-throughput testing (HTT) into polymer composites research represents a paradigm shift, accelerating the design-to-deployment cycle. This convergence addresses critical challenges in material discovery, property prediction, and lifecycle assessment.

1. AI-Augmented Material Discovery: Generative models and deep learning are used to propose novel polymer formulations and composite architectures. High-throughput robotic synthesis and characterization platforms generate the necessary training data, creating a closed-loop discovery system. This approach is pivotal in developing sustainable composites and materials for extreme environments.

2. Predictive Performance Modeling: Machine learning (ML) models, trained on HTT data from techniques like dynamic mechanical analysis (DMA), nanoindentation, and ultrasonic testing, accurately predict non-linear mechanical properties (e.g., fatigue, fracture toughness) without full-scale physical testing. This reduces reliance on costly and time-consuming traditional methods.

3. Industrial Adoption Drivers: In sectors like aerospace, automotive, and biomedical devices, adoption is driven by the need for lightweighting, part consolidation, and certified material performance. AI/HTT enables rapid qualification of new composites, formulation optimization for specific processing conditions (e.g., injection molding, additive manufacturing), and predictive maintenance models based on composite degradation.

4. Key Challenges: Barriers include the "data scarcity" problem for novel material classes, the high capital cost of automated platforms, and the need for standardized data formats to enable model sharing and reproducibility. Bridging the gap between nanoscale simulation data and macroscale HTT results remains an active research focus.

Protocols

Protocol 1: AI-Driven High-Throughput Formulation Screening for Epoxy-Carbon Fiber Composites

Objective: To rapidly identify optimal curing agent and modifier concentrations for maximizing tensile strength and glass transition temperature (Tg).

Materials: See "Research Reagent Solutions" table.

Equipment: Automated liquid handling robot, high-throughput mechanical tester (e.g., array of micro-tensile bars), Differential Scanning Calorimetry (DSC) autosampler, robotic composite layup system, cloud-based data platform.

Procedure:

- Design of Experiment (DoE): Use an AI-based active learning algorithm to define the first set of 50 formulations within a defined chemical space (e.g., amine equivalent weight, modifier %wt).

- Automated Synthesis: Execute formulations using a liquid handler to dispense epoxy resin, curing agents (diamines), and toughening modifiers (CTBN) into coded vials.

- High-Throughput Curing & Preparation: Transfer mixtures to a micro-cavity mold. Cure in a gradient thermal oven that applies a temperature range (e.g., 100°C-180°C) across different cells. Robotically prepare micro-tensile specimens.

- Automated Characterization:

- Tensile Testing: Perform automated micro-tensile tests, recording Young's modulus, ultimate tensile strength (UTS), and elongation at break.

- Thermal Analysis: Use an autosampler DSC to determine Tg for each formulation.

- Data Integration & Model Training: Stream all quantitative data (formulation ratios, UTS, Tg) to a centralized database. Train a Gaussian Process Regression model to map the formulation-property landscape.

- Iterative Loop: The AI model selects the next 20 most informative formulations to test, refining its predictions. Repeat steps 2-5 for 4 cycles.
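Before any measurements exist, a model has nothing to learn from, so the first batch of formulations is commonly seeded with a space-filling design. The sketch below uses Latin hypercube sampling for that purpose; this is a swapped-in, generic technique rather than the protocol's stated algorithm, and the variable bounds (amine equivalent weight 30-120 g/eq, modifier 0-20 wt%) are hypothetical.

```python
import numpy as np


def latin_hypercube(n_samples, bounds, seed=0):
    """Return an (n_samples, n_dims) design with one point per stratum per dimension."""
    rng = np.random.default_rng(seed)
    lows = np.array([low for low, _ in bounds], dtype=float)
    highs = np.array([high for _, high in bounds], dtype=float)
    unit = np.empty((n_samples, len(bounds)))
    for dim in range(len(bounds)):
        strata = rng.permutation(n_samples)           # shuffle strata independently
        unit[:, dim] = (strata + rng.uniform(size=n_samples)) / n_samples
    return lows + unit * (highs - lows)


# Hypothetical chemical space: (amine equivalent weight in g/eq, modifier in wt%).
design = latin_hypercube(50, [(30.0, 120.0), (0.0, 20.0)])
print(design[:3].round(2))
```

Each of the 50 rows is one formulation to dispense; later cycles would replace this design with model-guided selection.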

Protocol 2: Predictive Fatigue Life Modeling from High-Throughput DMA Data

Objective: To predict the full S-N (stress-life) curve for a composite laminate using a minimal set of high-throughput dynamic mechanical analysis measurements.

Materials: Carbon fiber reinforced polymer (CFRP) laminate coupons (varying fiber orientations, e.g., [0]₈, [90]₁₆, [±45]₄s).

Equipment: High-throughput DMA system with autoloader, servo-hydraulic testing frame for validation, computing cluster.

Procedure:

- High-Throughput Viscoelastic Profiling: Using an autoloader DMA, perform frequency sweep tests (0.1-100 Hz) at multiple isotherms (e.g., -50°C to 200°C) on a library of 100+ small coupon samples representing material and process variations.

- Data Reduction: Extract key viscoelastic parameters: storage modulus (E') at a reference temperature/frequency, loss factor (tan δ) peak temperature (approximate Tg), and the breadth of the tan δ peak.

- Feature Engineering: Create a feature vector for each sample including: E'(25°C, 1Hz), Tg, tan δ peak width, fiber orientation code, and void content percentage (from inline ultrasonic inspection).

- Model Training: Train a multi-output neural network on a historical dataset where the targets are the parameters (A, B, C) of a Basquin-type equation with an added constant term: σ = A * Nf^B + C, where σ is stress amplitude and Nf is cycles to failure. The classical Basquin law is the special case C = 0.

- Prediction & Validation: For new laminate variants, run the high-throughput DMA protocol (Step 1), generate the feature vector, and input it into the trained model to predict the full S-N curve. Validate predictions with traditional fatigue tests on 3-5 selected samples per variant.
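Once the network has predicted (A, B, C) for a laminate, the S-N curve follows directly from the equation in the Model Training step. The sketch below evaluates the curve and inverts it for cycles to failure; the parameter values are invented for illustration and do not come from any dataset.

```python
def stress_amplitude(n_cycles, a, b, c=0.0):
    """Stress amplitude from sigma = A * Nf**B + C."""
    return a * n_cycles ** b + c


def cycles_to_failure(stress, a, b, c=0.0):
    """Invert the S-N relation: Nf = ((sigma - C) / A) ** (1 / B)."""
    if (stress - c) / a <= 0.0:
        raise ValueError("stress must lie above the asymptote C for a finite life")
    return ((stress - c) / a) ** (1.0 / b)


# Hypothetical model output for one laminate variant (stress in MPa).
A, B, C = 900.0, -0.08, 50.0
sn_curve = [(n, stress_amplitude(n, A, B, C)) for n in (1e3, 1e4, 1e5, 1e6, 1e7)]
for n, sigma in sn_curve:
    print(f"Nf = {n:.0e}  sigma = {sigma:7.1f} MPa")
```

Because B is negative, small errors in the predicted exponent translate into large errors in predicted life, which is one reason the validation tests in the final step remain necessary.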

Data Tables

Table 1: Representative Performance of AI/HTT vs. Traditional Methods in Composite Development

| Metric | Traditional Approach (Epoxy Composite) | AI/HTT-Augmented Approach | Improvement Factor |

|---|---|---|---|

| Time for Initial Formulation Screening | 6-12 months | 4-6 weeks | ~4-13x faster |

| Number of Formulations Tested per Cycle | 10-20 | 200-500 | ~25x more |

| Cost per Data Point (Mechanical Test) | ~$500 (standard coupon) | ~$50 (micro-sample) | 90% reduction |

| Predictive Model Accuracy (Tensile Strength) | ±15% (Empirical) | ±7% (ML on HTT data) | ~2x more accurate |

Table 2: Industrial Adoption of AI/HTT for Polymer Composites (2023-2024)

| Industry Sector | Primary Application | Key Technology Used | Reported Outcome |

|---|---|---|---|

| Aerospace | Qualification of new CFRP for interior components | Robotic fiber placement + in-process sensing + ML | Reduced certification timeline by 30% |

| Automotive (EV) | Battery enclosure material development | Generative design + HTT flame retardancy testing | Identified 3 candidate materials meeting targets 60% faster |

| Biomedical | Resorbable polymer scaffold optimization | High-throughput polymer synthesis & degradation testing | Optimized degradation profile to match bone growth rate |

| Sporting Goods | Next-gen thermoplastic composite design | Active learning for impact resistance | Achieved 20% improvement in impact strength over legacy material |

Diagrams

AI-HTT Closed-Loop Research Workflow

Neural Network for Fatigue Prediction

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for AI-Driven HTT in Polymer Composites

| Item/Reagent | Function in AI/HTT Workflow | Key Consideration |

|---|---|---|

| Epoxy Resin (e.g., DGEBA) | Base polymer for formulation screening. | High purity and consistent viscosity are critical for robotic dispensing accuracy. |

| Amine-Based Curing Agents | Crosslinker for epoxy systems; varied structures alter properties. | Automated handling requires low volatility and good stability at room temperature. |

| Carboxyl-Terminated Butadiene Acrylonitrile (CTBN) | Rubber toughening modifier for epoxies. | Pre-dispersed masterbatches or low-viscosity variants enable reliable automated mixing. |

| Surface-Treated Nanofillers (e.g., SiO₂, CNT) | Additives for enhancing mechanical/thermal properties. | Functionalization level and dispersion quality must be standardized for reproducible HTT. |

| Automated Calorimetry Sample Pans | Containers for high-throughput DSC/TGA analysis. | Must be compatible with robotic autosamplers and have consistent thermal mass. |

| Micro-Tensile Bar Molds (Array Format) | For creating many small, standardized mechanical test specimens. | Fabricated from high-release materials (e.g., PTFE) to allow for robotic demolding. |

| Data Standardization Software (e.g., OLK) | Converts raw instrument data into a unified, searchable format. | Essential for creating the clean, structured databases required for effective AI training. |

From Code to Composite: A Step-by-Step Guide to Implementing AI-HTT Workflows

Application Notes

The integration of AI-driven robotics for automated polymer formulation and synthesis is revolutionizing high-throughput research in polymer composites and drug delivery systems. This phase is foundational for generating large, consistent, and well-defined sample libraries required for training predictive AI models. Robotic systems enable precise, reproducible dispensing of monomers, cross-linkers, nano-fillers (e.g., graphene oxide, cellulose nanocrystals), and active pharmaceutical ingredients (APIs). Automated mixing ensures homogeneous composite blends, while programmable curing stages (UV, thermal) control network formation. This automation directly addresses historical bottlenecks in materials research, allowing for the exploration of vast compositional and processing parameter spaces—such as stoichiometry, filler loading, and cure kinetics—at a pace and precision unattainable manually. The resulting datasets, linking formulation parameters to material properties, are critical for inverse design and accelerating the development of next-generation biocompatible scaffolds, conductive composites, and controlled-release matrices.

Protocols

Protocol 1: High-Throughput Formulation of Photocurable Polymer Composite Libraries

Objective: To robotically prepare an array of polymer composite samples with systematic variation in composition for subsequent mechanical and rheological testing.

Materials:

- Robotic liquid handling system (e.g., Hamilton Microlab STAR)

- Programmable UV curing station (e.g., DYMAX BlueWave LED)

- 96-well polypropylene deep-well plates

- Base monomer: Poly(ethylene glycol) diacrylate (PEGDA, Mn 700)

- Comonomer: 2-Hydroxyethyl methacrylate (HEMA)

- Photoinitiator: Irgacure 2959

- Nanofiller: Functionalized graphene oxide (GO) dispersion in N,N-Dimethylformamide (DMF)

- Inert diluent: Dimethyl sulfoxide (DMSO)

Method:

- System Preparation: Prime robotic fluid lines with appropriate solvents. Load reagents into designated source containers. Define a 96-well plate as the target.

- Dispensing: a. The robot dispenses PEGDA and HEMA across the plate according to a pre-defined gradient pattern, creating a binary compositional spread (e.g., 70/30 to 95/5 PEGDA/HEMA ratio). b. A constant volume of photoinitiator stock solution (2% w/v in DMSO) is added to each well. c. A gradient of GO dispersion (0 to 2% w/w) is dispensed orthogonally to the monomer gradient. d. DMSO is added as a compensator to ensure equal final volume (500 µL ± 5 µL) in all wells.

- Automated Mixing: The plate is transferred to an integrated plate shaker. Mixing is performed at 1500 rpm for 180 seconds.

- Curing: The plate is transferred under a UV LED array (λ=365 nm, Intensity=15 mW/cm²). Each well is irradiated for 120 seconds. Plate position and exposure time are controlled via software.
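The dispensing pattern in this method can be expressed as a per-well volume map that a liquid handler worklist would be generated from. The sketch below is illustrative only: the protocol fixes the 500 µL final volume and the gradient ranges, while the monomer volume (400 µL), initiator volume (25 µL), the GO dispersion volume at the highest loading (50 µL), and the reading of the PEGDA/HEMA ratio as a volume ratio are assumptions. Converting GO volume to an actual % w/w depends on dispersion concentration and densities, which are not modeled here.

```python
import numpy as np

ROWS, COLS = 8, 12            # 96-well plate
TOTAL_UL = 500.0              # final volume per well (from the protocol)
MONOMER_UL = 400.0            # assumed combined PEGDA + HEMA volume
INITIATOR_UL = 25.0           # assumed photoinitiator stock volume
GO_MAX_UL = 50.0              # assumed GO dispersion volume at the highest loading

# PEGDA fraction varies along columns (70/30 to 95/5 PEGDA/HEMA).
pegda_fraction = np.linspace(0.70, 0.95, COLS)
# GO level varies along rows, orthogonal to the monomer gradient.
go_level = np.linspace(0.0, 1.0, ROWS)

pegda = np.tile(MONOMER_UL * pegda_fraction, (ROWS, 1))
hema = MONOMER_UL - pegda
go = np.tile((GO_MAX_UL * go_level)[:, None], (1, COLS))
initiator = np.full((ROWS, COLS), INITIATOR_UL)
# DMSO compensates so that every well reaches the same final volume.
dmso = TOTAL_UL - (pegda + hema + go + initiator)

row_names = "ABCDEFGH"
worklist = [
    {"well": f"{row_names[r]}{c + 1}", "pegda_ul": pegda[r, c], "hema_ul": hema[r, c],
     "go_ul": go[r, c], "initiator_ul": initiator[r, c], "dmso_ul": dmso[r, c]}
    for r in range(ROWS) for c in range(COLS)
]
print(worklist[0])
```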

Protocol 2: Automated Synthesis of Thermoset Epoxy Composites for DMA

Objective: To synthesize a series of epoxy-anhydride composites with varied cross-link density for dynamic mechanical analysis (DMA).

Materials:

- Automated dispensing and mixing robot (e.g., Festo Didactic Motion Terminal, Chemspeed Technologies SWING)

- Programmable thermal curing oven

- Glass vials (8 mL) in custom racks

- Resin: Diglycidyl ether of bisphenol A (DGEBA)

- Curing Agent: Methyl tetrahydrophthalic anhydride (MTHPA)

- Catalyst: 2,4,6-Tris(dimethylaminomethyl)phenol (DMAMP)

- Modifier: Poly(propylene glycol) bis(2-aminopropyl ether) (Jeffamine D-400)

Method:

- Dispensing: The robotic arm dispenses DGEBA into each vial. A gradient of Jeffamine D-400 (0 to 20 phr) is co-dispensed as a toughener.

- Catalyst Addition: A fixed amount of DMAMP catalyst (1 phr) is added to each vial.

- Stoichiometric Curing Agent Addition: The robot calculates and dispenses the required mass of MTHPA to maintain a 1:1 epoxy:anhydride equivalent ratio, accounting for the Jeffamine content.

- High-Shear Mixing: Each vial is sealed with a cap. The vial rack is agitated by the robotic system in a three-dimensional figure-eight pattern for 300 seconds at 60°C.

- Two-Stage Thermal Cure: The rack is transferred to a forced-air convection oven. The cure cycle is: Stage 1: 100°C for 2 hours; Stage 2: 150°C for 3 hours. Ramp rates are controlled at 2°C/min.
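The stoichiometric calculation in the curing agent step can be sketched as below. It takes one plausible reading of "accounting for the Jeffamine content": each amine hydrogen of the modifier consumes one epoxide group, and the anhydride is dosed 1:1 against the epoxide that remains. The equivalent weights are typical or assumed values for illustration (EEW 187 g/eq for DGEBA, AHEW 115 g/eq for Jeffamine D-400, 166.2 g/eq for MTHPA from its molar mass) and should be replaced with supplier datasheet values.

```python
EEW_DGEBA = 187.0     # g per epoxide equivalent (assumed typical value)
AHEW_D400 = 115.0     # g per amine-hydrogen equivalent (assumed value)
AEW_MTHPA = 166.2     # g per anhydride equivalent (one anhydride group per molecule)


def mthpa_phr(jeffamine_phr, anhydride_to_epoxy=1.0):
    """MTHPA loading (parts per hundred resin) for a given Jeffamine loading."""
    epoxy_eq = 100.0 / EEW_DGEBA                  # equivalents in 100 g of resin
    amine_eq = jeffamine_phr / AHEW_D400          # epoxide consumed by the modifier
    remaining_eq = epoxy_eq - amine_eq
    if remaining_eq < 0.0:
        raise ValueError("modifier loading exceeds the available epoxide")
    return anhydride_to_epoxy * remaining_eq * AEW_MTHPA


def vial_masses(resin_g, jeffamine_phr, catalyst_phr=1.0):
    """Component masses in grams for one vial, scaled from phr to the resin mass."""
    scale = resin_g / 100.0
    return {
        "dgeba_g": resin_g,
        "jeffamine_g": jeffamine_phr * scale,
        "catalyst_g": catalyst_phr * scale,
        "mthpa_g": mthpa_phr(jeffamine_phr) * scale,
    }


for phr in (0.0, 10.0, 20.0):
    print(phr, {k: round(v, 3) for k, v in vial_masses(3.0, phr).items()})
```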

Table 1: Robotic Dispensing Precision for Common Polymer Precursors

| Reagent | Viscosity (cP) | Target Volume (µL) | Mean Delivered Volume (µL) | Coefficient of Variation (%) |

|---|---|---|---|---|

| PEGDA (Mn 700) | 90 | 250 | 249.8 | 0.32 |

| DGEBA | 12,000 | 1000 | 998.5 | 0.45 |

| MTHPA | 75 | 850 | 851.2 | 0.28 |

| GO/DMF Disp. (1%) | 25 | 50 | 50.1 | 0.65 |

Table 2: Effect of Automated Mixing Parameters on Composite Homogeneity

| Mixing Mode | Duration (s) | Speed | Resulting GO Agglomerate Size (µm) | Homogeneity Index (CV% of Tensile Strength) |

|---|---|---|---|---|

| Orbital Shaking | 180 | 1500 rpm | 12.5 ± 3.2 | 18.7% |

| Dual-Axis Gyration | 120 | 20° tilt, 2 Hz | 5.1 ± 1.8 | 8.2% |

| Ultrasonic Probe* | 60 | 50 J/mL | 2.3 ± 0.9 | 4.5% |

*Conducted in an integrated sonication station.

Visualizations

Title: AI-Driven Automated Synthesis Workflow

Title: Photocuring Reaction Pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Automated Synthesis |

|---|---|

| Poly(ethylene glycol) diacrylate (PEGDA) | A biocompatible, photocurable telechelic monomer used as a base resin for hydrogels and composite networks. |

| Diglycidyl ether of bisphenol A (DGEBA) | A standard high-viscosity epoxy resin for thermoset composites; requires precise heated dispensing. |

| Irgacure 2959 | A water-compatible, UV photoinitiator that generates free radicals upon 365 nm exposure to initiate polymerization. |

| Methyl tetrahydrophthalic anhydride (MTHPA) | A common curing agent for epoxy resins, enabling thermal cure for high-performance thermosets. |

| Graphene Oxide (GO) Dispersion | A nano-reinforcement filler; its dispersion quality critically impacts composite electrical/mechanical properties. |

| Dimethyl sulfoxide (DMSO) | A versatile polar aprotic solvent used to dilute viscous precursors and ensure robotic dispensing accuracy. |

| Functional Silanes (e.g., GPTMS) | Coupling agents used to modify filler surfaces, improving interfacial adhesion within the polymer matrix. |

Application Notes

This phase details the integration of automated analytical techniques within an AI-driven high-throughput (HT) framework for polymer composites research. The goal is to rapidly generate multi-dimensional datasets that inform structure-property-processing relationships, accelerating the discovery and optimization of materials for applications ranging from drug delivery systems to structural components.

Automated Dynamic Mechanical Analysis (DMA) provides rapid viscoelastic property mapping (storage/loss moduli, tan δ) across temperature and frequency, crucial for understanding thermomechanical performance. Automated Thermogravimetric Analysis (TGA) enables unattended, sequential measurement of thermal stability and compositional analysis (e.g., filler content, polymer degradation profiles). Automated Fourier-Transform Infrared (FTIR) Spectroscopy offers high-speed chemical fingerprinting, monitoring curing reactions, degradation, or component distribution in composites. Automated Imaging (e.g., optical, SEM) integrated with automated sample handling provides morphological data essential for correlating structure to properties.

These techniques feed standardized data into a central AI/ML platform, where predictive models guide subsequent experimental iterations.

Experimental Protocols

Protocol 1: High-Throughput Automated DMA Screening of Polymer Composite Libraries

Objective: To autonomously characterize the thermomechanical properties of a 96-member polymer composite library.

Materials: Automated DMA (e.g., TA Instruments DMA 850 with AutoLoader), 96-composite sample library (prepared via automated dispensing), calibration standards.

Procedure:

- Sample Loading: Load up to 48 samples into the autoloader carousel. Ensure consistent sample geometry (tension film, dual cantilever).

- Method Programming: In the instrument software, create a sequence method. For each sample, define:

- Temperature ramp: -50°C to 200°C at 3°C/min.

- Frequency: 1 Hz.

- Strain amplitude: 0.1%.

- Automated Run: Initiate the sequence. The system automatically:

- Loads a sample.

- Performs the temperature sweep.

- Unloads the sample.

- Proceeds to the next sample.

- Data Extraction: Post-run, software automatically exports key data (Glass Transition Temperature (Tg) from tan δ peak, Storage Modulus at 25°C) to a structured .csv file for AI pipeline ingestion.
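The data extraction step can be illustrated with a short NumPy routine that reduces one temperature sweep to the scalar features named above. It is a sketch on a synthetic curve, not the instrument software's algorithm; the function name, the dictionary keys, and the simple half-maximum width estimate (which assumes a single tan δ peak on a low baseline) are choices made for this example.

```python
import numpy as np


def extract_dma_features(temp_c, storage_mpa, loss_mpa, ref_temp_c=25.0):
    """Reduce one DMA temperature sweep to Tg, E' at a reference temperature, and peak width."""
    temp_c = np.asarray(temp_c, dtype=float)
    storage_mpa = np.asarray(storage_mpa, dtype=float)
    tan_delta = np.asarray(loss_mpa, dtype=float) / storage_mpa
    peak = int(np.argmax(tan_delta))
    # Width at half of the peak height; assumes one contiguous region above half maximum.
    above = np.where(tan_delta >= tan_delta[peak] / 2.0)[0]
    return {
        "tg_c": float(temp_c[peak]),
        "storage_ref_mpa": float(np.interp(ref_temp_c, temp_c, storage_mpa)),
        "tan_delta_fwhm_c": float(temp_c[above[-1]] - temp_c[above[0]]),
    }


# Synthetic sweep from -50 to 200 degC (values invented for illustration).
temperature = np.linspace(-50.0, 200.0, 501)
storage = 50.0 + 2800.0 / (1.0 + np.exp((temperature - 120.0) / 6.0))
true_tan_delta = 0.02 + 0.8 * np.exp(-0.5 * ((temperature - 125.0) / 10.0) ** 2)
loss = storage * true_tan_delta

features = extract_dma_features(temperature, storage, loss)
print(features)
```

The resulting dictionary maps one-to-one onto a row of the structured .csv file handed to the AI pipeline.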

Protocol 2: Sequential TGA Analysis for Compositional Determination

Objective: To determine the inorganic filler content and thermal stability of composite series.

Materials: Automated TGA (e.g., PerkinElmer TGA 8000 with Autosampler), nitrogen and air gas, alumina crucibles.

Procedure:

- Autosampler Setup: Load up to 19 samples into the autosampler rack alongside a reference standard.

- Method Definition: Create a sequence with the following universal method:

- Equilibrate at 30°C.

- Ramp at 20°C/min to 900°C under N₂ (50 mL/min).

- Isotherm for 2 min.

- Change gas to air (50 mL/min) and isotherm for 10 min (to burn off carbonaceous residue).

- Automated Execution: Start sequence. The autosampler sequentially places each crucible into the furnace.

- Data Processing: Software calculates and reports:

- % Weight Loss at 500°C (polymer degradation).

- Residual Weight % at 900°C under Air (inorganic filler content).
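The two reported quantities, plus the 5% mass-loss temperature used elsewhere in this article, can be computed from a mass trace as sketched below. The trace is synthetic, mass is assumed to be normalized to 100% at the start, and the function name and keys are illustrative rather than taken from any instrument software.

```python
import numpy as np


def tga_metrics(temp_c, mass_pct):
    """Weight loss at 500 degC, 5 % mass-loss temperature, and final residue."""
    temp_c = np.asarray(temp_c, dtype=float)
    mass_pct = np.asarray(mass_pct, dtype=float)
    ramp_end = int(np.argmax(temp_c))             # first index at maximum temperature
    ramp_t, ramp_m = temp_c[:ramp_end + 1], mass_pct[:ramp_end + 1]
    loss_500 = 100.0 - float(np.interp(500.0, ramp_t, ramp_m))
    idx = int(np.argmax(ramp_m <= 95.0))          # first point at or below 95 % mass
    if idx == 0:
        raise ValueError("no 5 % mass-loss crossing found within the ramp")
    t0, t1, m0, m1 = ramp_t[idx - 1], ramp_t[idx], ramp_m[idx - 1], ramp_m[idx]
    t5 = t0 + (95.0 - m0) * (t1 - t0) / (m1 - m0)  # linear interpolation
    return {
        "loss_at_500c_pct": loss_500,
        "t5_c": float(t5),
        "residual_pct": float(mass_pct[-1]),       # after the air isotherm
    }


# Synthetic run: ramp to 900 degC under N2, then an isothermal hold in air.
ramp_temp = np.arange(30.0, 901.0, 1.0)
ramp_mass = 25.0 + 75.0 / (1.0 + np.exp((ramp_temp - 400.0) / 15.0))
hold_temp = np.full(60, 900.0)
hold_mass = np.concatenate([np.linspace(25.0, 20.0, 30), np.full(30, 20.0)])

metrics = tga_metrics(np.concatenate([ramp_temp, hold_temp]),
                      np.concatenate([ramp_mass, hold_mass]))
print({k: round(v, 2) for k, v in metrics.items()})
```

In this invented example the 5-point drop during the air hold represents char burn-off, so the final residue, not the mass at the end of the nitrogen ramp, is taken as the inorganic filler content.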

Protocol 3: High-Throughput FTIR Mapping of Composite Films

Objective: To assess chemical homogeneity and curing conversion in composite film libraries.

Materials: Automated FTIR with XY mapping stage and autoloader (e.g., Thermo Scientific Nicolet iN10 MX), 24-well composite film plate.

Procedure:

- Plate Registration: In the mapping software, define the coordinates for each well center.

- Measurement Parameters: Set acquisition to:

- Spectral range: 4000-650 cm⁻¹.

- Resolution: 4 cm⁻¹.

- Number of scans per spectrum: 16.

- Aperture: 100 µm.

- Automated Mapping: For each well, execute a 3x3 point map (9 spectra per sample).

- Batch Processing: Use chemometric software (e.g., ISys) to automatically:

- Generate average spectrum per well.

- Calculate degree of cure via peak height ratio (e.g., 915 cm⁻¹ / 1600 cm⁻¹ for epoxy).

- Flag outliers based on spectral correlation.
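The batch-processing calculations can be sketched as follows. The degree of cure is taken here as α = 1 − (A₉₁₅/A₁₆₀₀)cured / (A₉₁₅/A₁₆₀₀)uncured, which requires a spectrum of the uncured mixture as a reference; that reference, the absence of baseline correction, and the synthetic Gaussian bands are assumptions made to keep the example short.

```python
import numpy as np


def peak_height(wavenumber, absorbance, center, half_window=10.0):
    """Maximum absorbance within +/- half_window cm^-1 of the band center."""
    mask = np.abs(wavenumber - center) <= half_window
    return float(absorbance[mask].max())


def degree_of_cure(wavenumber, cured, uncured, epoxide=915.0, reference=1600.0):
    """Conversion from the epoxide band normalized to an invariant reference band."""
    ratio_cured = (peak_height(wavenumber, cured, epoxide)
                   / peak_height(wavenumber, cured, reference))
    ratio_uncured = (peak_height(wavenumber, uncured, epoxide)
                     / peak_height(wavenumber, uncured, reference))
    return 1.0 - ratio_cured / ratio_uncured


def band(wavenumber, center, amplitude, width=8.0):
    """Synthetic Gaussian absorption band used only to build example spectra."""
    return amplitude * np.exp(-0.5 * ((wavenumber - center) / width) ** 2)


wavenumber = np.arange(650.0, 4001.0, 1.0)
uncured = band(wavenumber, 915.0, 0.8) + band(wavenumber, 1600.0, 1.0)
# A 3x3 map gives nine spectra per well; they are averaged before analysis.
well_map = [band(wavenumber, 915.0, 0.2) + band(wavenumber, 1600.0, 1.0) for _ in range(9)]
well_average = np.mean(np.asarray(well_map), axis=0)

alpha = degree_of_cure(wavenumber, well_average, uncured)
print(f"degree of cure = {alpha:.2f}")
```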

Data Presentation

Table 1: Summary of High-Throughput Characterization Techniques & Outputs

| Technique | Primary Metrics Measured | Sample Throughput (Unattended) | Key AI-Ready Data Output |

|---|---|---|---|

| Automated DMA | Storage/Loss Modulus, Tan δ, Tg | ~48 samples/24h | Tg, E' at 25°C, FWHM of Tan δ peak |

| Automated TGA | Weight Loss %, Decomposition Onset, Residual Mass | ~20 samples/24h | Onset Temp. T₅%, Residual % at 900°C |

| Automated FTIR | Absorbance Peaks, Functional Group Maps, Conversion | ~100s spectra/24h | Peak Height Ratios, Spectral Correlation Coefficients |

| Automated Imaging | Particle Size, Dispersion Index, Void Content | Dependent on modality | Mean Particle Size (µm), Area % Filler, Porosity % |

Table 2: Exemplar HT-DMA Data from a 16-Sample Epoxy Composite Screen (excerpt; 7 samples shown)

| Sample ID | Filler Type | Filler wt.% | Tg from Tan δ (°C) | Storage Modulus at 25°C (MPa) |

|---|---|---|---|---|

| EPX_01 | None | 0 | 125.2 | 2850 |

| EPX_02 | Silica | 5 | 127.5 | 3100 |

| EPX_03 | Silica | 10 | 128.1 | 3350 |

| EPX_04 | Silica | 20 | 129.8 | 3800 |

| EPX_05 | Alumina | 5 | 124.8 | 3050 |

| EPX_06 | Alumina | 10 | 123.5 | 3200 |

| EPX_07 | Alumina | 20 | 121.0 | 3650 |

Visualizations

HT Characterization & AI Feedback Loop

Automated Instrument Sequence Workflow

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Materials for High-Throughput Polymer Composite Characterization

| Item | Function & Importance |

|---|---|

| Automated DMA Film/Tension Clamps | Enable consistent, repeatable loading of film samples in autoloader sequences, crucial for data reproducibility. |

| TGA Alumina Crucibles (with Autosampler Mates) | Inert, high-temperature resistant pans compatible with robotic arms; uniformity is key for consistent thermal contact. |

| 96-Well Polymer Composite Plates (IR-Transparent) | Standardized sample format for FTIR mapping and imaging; allows direct correlation between chemical and morphological data. |

| Calibration Reference Materials (e.g., Indium, Alumel, Polystyrene) | Essential for daily validation of DMA, TGA, and FTIR instruments within an automated queue, ensuring data integrity. |

| Automated Liquid Handling System | Prepares composite precursor libraries (resin, hardener, filler dispersions) with precise stoichiometry, feeding the characterization pipeline. |

| Conductive Adhesive Tabs & SEM Stubs (Cartridge) | Allows automated preparation of samples for SEM imaging, integrating morphological data into the multi-technique dataset. |

Within the broader thesis on AI-driven high-throughput testing for polymer composites research, Phase 3 focuses on the systematic engineering of data pipelines. The objective is to transform raw, heterogeneous experimental data from high-throughput mechanical, thermal, and spectroscopic characterizations into curated, machine-readable datasets. This phase is critical for enabling predictive modeling, materials discovery, and the elucidation of structure-property relationships.

Foundational Data Pipeline Architecture

A robust data pipeline for composite research must handle multi-modal data streams. The architecture ensures data integrity, traceability, and FAIR (Findable, Accessible, Interoperable, Reusable) principles.

Title: Polymer Composite Data Pipeline Flow

Key Quantitative Data Standards & Benchmarks

To ensure dataset quality, specific quantitative benchmarks must be established for data ingestion and validation.

Table 1: Data Quality Benchmarks for Pipeline Ingestion

| Data Type | Required Precision | Acceptable Null Rate | Metadata Completeness | Format Standard |

|---|---|---|---|---|

| Dynamic Mechanical Analysis (DMA) | Storage Modulus (E'): ±0.5% | < 2% | ≥ 95% (Temp, Freq, Strain) | ASTM D4065-12 |

| Fourier-Transform Infrared (FTIR) | Wavenumber: ±1 cm⁻¹ | < 1% | ≥ 98% (Resolution, Scans) | ASTM E1252-98 |

| Thermogravimetric Analysis (TGA) | Mass Loss: ±0.2% | < 1% | ≥ 95% (Atmosphere, Rate) | ASTM E1131-20 |

| Tensile Properties | Ultimate Strength: ±1% | < 3% | ≥ 90% (Gauge, Speed) | ASTM D3039-14 |

| Scanning Electron Microscopy (SEM) | Pixel Resolution: ≤ 5 nm | < 5% | ≥ 95% (kV, Mag, Detector) | DICONDE Standard |
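The null-rate and metadata-completeness benchmarks in Table 1 lend themselves to simple automated checks. The sketch below shows one way to encode them; the record layout (`values`, `metadata`), the metadata key names, and the function name are hypothetical, and the precision column is not checked because it requires replicate or calibration measurements.

```python
BENCHMARKS = {
    "DMA": {"max_null_rate": 0.02, "min_metadata": 0.95,
            "metadata_keys": ("temp", "freq", "strain")},
    "FTIR": {"max_null_rate": 0.01, "min_metadata": 0.98,
             "metadata_keys": ("resolution", "scans")},
    "TGA": {"max_null_rate": 0.01, "min_metadata": 0.95,
            "metadata_keys": ("atmosphere", "rate")},
}


def validate_batch(technique, records):
    """Compare a batch of parsed records against the Table 1 thresholds."""
    rule = BENCHMARKS[technique]
    values = [v for record in records for v in record["values"]]
    null_rate = sum(v is None for v in values) / len(values)
    required = rule["metadata_keys"]
    present = sum(record["metadata"].get(key) is not None
                  for record in records for key in required)
    completeness = present / (len(records) * len(required))
    passed = null_rate < rule["max_null_rate"] and completeness >= rule["min_metadata"]
    return {"null_rate": null_rate, "metadata_completeness": completeness, "passed": passed}


good_batch = [
    {"values": [2850.0, 2790.0, 2710.0], "metadata": {"temp": 25, "freq": 1.0, "strain": 0.1}},
    {"values": [3100.0, 3040.0, 2960.0], "metadata": {"temp": 25, "freq": 1.0, "strain": 0.1}},
]
bad_batch = [
    {"values": [2850.0, None, 2710.0], "metadata": {"temp": 25, "freq": 1.0}},
    {"values": [3100.0, 3040.0, 2960.0], "metadata": {"temp": 25, "freq": 1.0, "strain": 0.1}},
]
print(validate_batch("DMA", good_batch))
print(validate_batch("DMA", bad_batch))
```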

Table 2: Feature Engineering Outputs for ML Readiness

| Extracted Feature | Source Technique | Engineering Operation | ML-Ready Data Type | Typical Dimensionality |

|---|---|---|---|---|

| Glass Transition (Tg) | DMA (Tan δ peak) | Peak Analysis → Single Value | Float (Scalar) | 1 |

| Thermal Decomposition Onset | TGA (Derivative) | Onset Temp. Calculation | Float (Scalar) | 1 |

| Functional Group Presence | FTIR Spectra | Peak Area Integration → Vector | Array (Float) | 1500-4000 cm⁻¹ |

| Microstructural Texture | SEM Image | Gray-Level Co-occurrence Matrix | 2D Matrix (Float) | 256x256 |

| Stress-Strain Curve | Tensile Test | Piecewise Polynomial Spline | Time-Series Array | ~1000 points |

Experimental Protocol: End-to-End Data Curation for a Composite Formulation Study

Protocol 4.1: Automated Curation of a Multi-Technique Dataset

This protocol details the steps to generate a single, unified dataset from parallel high-throughput testing of 50 polymer composite variants.

Objective: To create a machine-readable dataset linking formulation variables (e.g., filler type, loading %, coupling agent) to measured properties from DMA, TGA, and FTIR.

Materials & Software:

- High-Throughput Testing Rack (e.g., for parallel DMA/TGA).

- FTIR Spectrometer with automated sample stage.

- Laboratory Information Management System (LIMS) ID for each sample.

- Pipeline Orchestration Tool: Apache Airflow or Nextflow.

- Data Processing: Python scripts (Pandas, NumPy, SciPy).

- Storage: SQL database (for metadata) & Parquet files (for spectral/curve data).

Procedure:

- Pre-experiment Metadata Registration:

- For each composite variant (n=50), register a unique Sample ID in the LIMS.

- Log all formulation parameters (polymer matrix, filler ID, wt%, processing conditions) as structured key-value pairs linked to the Sample ID.

- Instrument Data Acquisition with Traceability:

- Perform DMA frequency sweep, TGA, and FTIR analysis according to ASTM standards cited in Table 1.

- Configure all instruments to embed the registered Sample ID in the output file header and filename.

- Automated Data Ingestion & Validation (Daily Batch):

- Run a scheduled pipeline job (e.g., via Airflow DAG) that: a. Scans instrument output directories for new files. b. Parses files, extracts Sample ID, and matches it to LIMS metadata. c. Executes validation rules from Table 1 (precision checks, null checks). d. Flags outliers or failures for manual review; logs all actions.

- Feature Extraction & Transformation:

- For validated data, execute feature extraction scripts:

- DMA: Fit tan δ curve to identify Tg; extract storage modulus (E') at reference temperature.

- TGA: Calculate onset temperature of degradation (Td) at 5% mass loss.

- FTIR: Apply vector normalization to absorbance spectra; integrate peak areas for specific functional groups (e.g., C=O stretch, Si-O bond).

- Output a structured dictionary per sample: {Sample_ID: {metadata: {...}, features: {Tg: value, Td: value, FTIR_vector: array}}}.

- Dataset Assembly & Versioning:

- Merge all sample dictionaries into a master Pandas DataFrame (tabular data).

- Store FTIR vectors and stress-strain curves as separate NumPy arrays, indexed to the master DataFrame.

- Assign a version tag (e.g., v3.1_composites_20231027) and save the DataFrame (as .parquet) and arrays (as .npy) to the versioned data lake.

- Generate and store a data manifest file documenting version, sample count, and extraction parameters.
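The per-sample dictionary, the split into tabular and array data, and the manifest can be sketched in plain Python as follows. The helper names, metadata fields, and feature values are hypothetical; in a real pipeline the rows would typically be loaded into a Pandas DataFrame and written to Parquet, and the vectors saved as NumPy arrays.

```python
import json


def build_record(sample_id, metadata, tg_c, td_c, ftir_vector):
    """Structured dictionary for one sample, mirroring the protocol's layout."""
    return {sample_id: {
        "metadata": dict(metadata),
        "features": {"Tg": tg_c, "Td": td_c, "FTIR_vector": list(ftir_vector)},
    }}


def assemble(records):
    """Split records into scalar rows (tabular) and per-sample vectors (arrays)."""
    rows, arrays = [], {}
    for record in records:
        for sample_id, body in record.items():
            row = {"sample_id": sample_id, **body["metadata"]}
            row.update({name: value for name, value in body["features"].items()
                        if name != "FTIR_vector"})
            rows.append(row)
            arrays[sample_id] = body["features"]["FTIR_vector"]
    return rows, arrays


def make_manifest(version, rows, extraction_params):
    """Manifest documenting version, sample count, and extraction parameters."""
    return {"version": version, "sample_count": len(rows),
            "extraction_parameters": extraction_params}


# Invented example values for two samples.
records = [
    build_record("S001", {"filler": "silica", "wt_pct": 5}, 127.5, 352.0, [0.12, 0.40, 0.08]),
    build_record("S002", {"filler": "alumina", "wt_pct": 10}, 123.5, 348.5, [0.10, 0.37, 0.11]),
]
rows, arrays = assemble(records)
manifest = make_manifest("v3.1_composites_20231027", rows,
                         {"td_mass_loss_pct": 5, "ftir_normalization": "vector"})
print(json.dumps(manifest, indent=2))
```

Keeping the sample ID as the join key in both the rows and the arrays preserves the link back to the LIMS registration.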

Title: Multi-Technique Data Curation Protocol

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Tools & Reagents for Pipeline-Driven Composite Research

| Item / Solution | Function in Data Pipeline Context | Example Vendor / Product |

|---|---|---|

| Laboratory Information Management System (LIMS) | Central registry for sample metadata, ensuring traceability from synthesis to analysis. Foundation for data linking. | LabVantage, Benchling |

| High-Throughput DMA/TGA Modules | Automated, parallelized thermal analysis generating consistent, large-volume raw data for pipeline ingestion. | TA Instruments DMA 850/ TGA 5500, Mettler Toledo |

| Automated FTIR with Mapping Stage | Enables rapid, spatially-resolved chemical characterization of composite surfaces, generating high-dimensional spectral data. | Thermo Fisher Scientific Nicolet iN10 |

| Standard Reference Materials (SRMs) | Critical for daily validation of instrument calibration, ensuring data precision meets benchmarks in Table 1. | NIST SRM (e.g., Polystyrene for Tg, Nickel Curie for TGA) |

| Data Pipeline Orchestration Software | Automates, schedules, and monitors the multi-step workflow from ingestion to storage (e.g., Protocol 4.1). | Apache Airflow, Nextflow |

| Structured Data Format Libraries | Enables efficient serialization, storage, and retrieval of large, mixed tabular and array-based datasets. | Apache Parquet (via PyArrow), HDF5 |

| Automated Data Validation Scripts | Custom code to enforce quality rules (Table 1), flag outliers, and ensure only high-quality data proceeds downstream. | Python (Pandas, Pydantic), Great Expectations |

| Containerization Platform | Packages the entire data processing environment (OS, libraries, code) to guarantee reproducibility across research teams. | Docker, Singularity |

AI Model Selection and Training for Property Prediction (e.g., Strength, Degradation Rate)

Application Notes

The integration of AI-driven high-throughput testing (HTT) within polymer composites and drug development research necessitates a systematic approach to model selection and training for predicting material properties. This framework accelerates the discovery and optimization of novel composites and biomaterials by linking high-throughput experimental data with predictive computational models. Key predictive tasks include tensile/compressive strength, Young's modulus, degradation rate, and bioactivity.

Core AI Model Categories for Property Prediction

The selection of an AI model is contingent upon dataset size, feature dimensionality, and the complexity of the structure-property relationship.

Table 1: Model Suitability Analysis for Property Prediction

| Model Category | Best For Data Size | Typical R² Range (Reported) | Key Advantages | Limitations for HTT Data |

|---|---|---|---|---|

| Linear Regression (Ridge/Lasso) | Small (<100 samples) | 0.5 - 0.7 | Interpretable, robust to small samples. | Cannot capture non-linear interactions. |

| Random Forest (RF) | Medium (100-10k samples) | 0.7 - 0.85 | Handles mixed data types, provides feature importance. | May overfit without tuning; extrapolates poorly. |

| Gradient Boosting (XGBoost, LightGBM) | Medium to Large (>500 samples) | 0.75 - 0.9 | High accuracy, efficient handling of missing data. | Computationally intensive; less interpretable. |

| Graph Neural Networks (GNNs) | Variable (depends on graph size) | 0.8 - 0.95 | Directly models molecular/polymer graph structure. | High data hunger; complex training protocol. |

| Multilayer Perceptron (MLP) | Medium to Large (>1000 samples) | 0.65 - 0.9 | Universal function approximator. | Requires careful regularization and scaling. |

Critical Data Considerations

- Feature Engineering: For polymer composites, features include monomer SMILES strings, polymerization degree, crosslink density, filler type/size/distribution (from SEM/TEM), and processing conditions (temperature, pressure).

- Data Source Integration: HTT platforms generate data from robotic synthesis, parallel mechanical testers (e.g., nanoindentation arrays), and automated characterization (FTIR, Raman). AI models must unify these heterogeneous data streams.

Detailed Experimental Protocols

Protocol 1: High-Throughput Dataset Curation for AI Training

Objective: To compile a structured dataset from HTT for AI model training.

Materials: Robotic synthesizer, combinatorial library design software, automated tensile tester, HPLC/UPLC (for degradation studies), data logging middleware.

Procedure:

- Design of Experiments (DoE): Use a fractional factorial design to vary monomer ratios, filler loadings (0-30 wt%), and catalyst concentrations across 256 unique compositions in a 16x16 microarray format.

- Automated Synthesis & Curing: Execute synthesis using the robotic platform. Log precise parameters (time, temperature, shear rate) for each sample.

- Parallel Property Testing:

- Strength/Modulus: Use an array nanoindenter to perform 25 indents per composite spot. Extract Young's modulus and hardness. Calculate average and std. dev.

- Degradation Rate: For biodegradable composites, immerse samples in parallel pH-buffered vessels. Use automated sampling and HPLC analysis to measure monomer release over 14 days. Fit to first-order kinetics to derive degradation rate constant k.

- Data Alignment: Use sample barcodes to link synthesis parameters, characterization spectra, and measured properties into a single database (e.g., SQL or Pandas DataFrame).
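The first-order fit in the degradation step above can be sketched as follows, assuming the HPLC readout has already been normalized to a released fraction F(t) of the total releasable monomer, so that F(t) = 1 - exp(-k t). The function name and the synthetic data are illustrative assumptions.

```python
import numpy as np


def fit_first_order_k(t_days, released_fraction):
    """Estimate k (day^-1) from F(t) = 1 - exp(-k t).

    Linearizes to ln(1 - F) = -k t and fits a line through the origin,
    so k = -sum(t * y) / sum(t * t) with y = ln(1 - F).
    """
    t = np.asarray(t_days, dtype=float)
    f = np.asarray(released_fraction, dtype=float)
    mask = (f >= 0) & (f < 1)  # ln(1 - F) is undefined once F reaches 1
    t, y = t[mask], np.log1p(-f[mask])
    return float(-np.sum(t * y) / np.sum(t * t))


# Synthetic 14-day release curve with a known rate constant (made-up values).
t = np.array([1, 2, 4, 7, 10, 14], dtype=float)
k_true = 0.05
f = 1 - np.exp(-k_true * t)
k_hat = fit_first_order_k(t, f)
```

A through-origin fit is used because F(0) = 0 by construction; with noisy data a nonlinear least-squares fit on the untransformed curve may weight late time points more fairly.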

Protocol 2: Training and Validating a Gradient Boosting Model for Strength Prediction

Objective: To train a LightGBM model predicting tensile strength from composition and processing features.

Pre-requisite: Curated dataset from Protocol 1 (n=5000 samples).

Software: Python (scikit-learn, lightgbm, pandas).

Procedure:

- Feature Encoding & Split:

- Encode categorical fillers (e.g., graphene, CNT, silica) via one-hot encoding.

- Standardize numerical features (filler loading, cure temperature).

- Split data 70/15/15 into training, validation, and hold-out test sets.

- Hyperparameter Tuning:

- Use the validation set for a Bayesian optimization search over num_leaves, learning_rate, feature_fraction, and min_data_in_leaf.

- Optimize for minimal Mean Absolute Error (MAE).

- Model Training:

- Train LightGBM regressor on the training set with early stopping against the validation set.

- Evaluation:

- Predict on the unseen test set.

- Report key metrics: R², MAE, and RMSE.

- Perform permutation importance analysis to identify top 5 predictive features.
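The split, metric, and permutation-importance steps of this protocol can be sketched with NumPy alone. Note the substitution: a plain least-squares fit stands in for the LightGBM regressor so that the example has no dependencies beyond NumPy, and the data are synthetic. Function names are illustrative assumptions.

```python
import numpy as np


def split_70_15_15(n, rng):
    """Shuffle indices and split them 70/15/15 into train, validation, test."""
    idx = rng.permutation(n)
    n_train, n_val = (70 * n) // 100, (15 * n) // 100
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def regression_metrics(y_true, y_pred):
    """Return R^2, MAE, and RMSE for a set of predictions."""
    err = y_true - y_pred
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return {
        "R2": float(1.0 - np.sum(err ** 2) / ss_tot),
        "MAE": float(np.mean(np.abs(err))),
        "RMSE": float(np.sqrt(np.mean(err ** 2))),
    }


def permutation_importance(predict, X, y, rng, n_repeats=10):
    """Mean increase in MAE when a single feature column is shuffled."""
    base = regression_metrics(y, predict(X))["MAE"]
    scores = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        for _ in range(n_repeats):
            Xp = X.copy()
            Xp[:, j] = rng.permutation(Xp[:, j])
            scores[j] += regression_metrics(y, predict(Xp))["MAE"] - base
    return scores / n_repeats


# Synthetic data: feature 0 dominates, feature 2 is pure noise.
rng = np.random.default_rng(42)
X = rng.normal(size=(1000, 3))
y = 5.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(scale=0.1, size=1000)

train, val, test = split_70_15_15(len(X), rng)
# `val` is unused by this stand-in; in the protocol it drives the
# hyperparameter search and early stopping.

# Stand-in model: ordinary least squares with an intercept column.
coef, *_ = np.linalg.lstsq(np.c_[X[train], np.ones(len(train))], y[train], rcond=None)


def predict(M):
    return np.c_[M, np.ones(len(M))] @ coef


metrics = regression_metrics(y[test], predict(X[test]))
importance = permutation_importance(predict, X[test], y[test], rng)
```

Because `permutation_importance` only needs a `predict` callable, the same function would apply unchanged to a trained gradient-boosting model.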

Table 2: Example Model Performance on Hold-Out Test Set

| Target Property | Model | R² | MAE | RMSE | Top Predictive Feature (Importance) |

|---|---|---|---|---|---|

| Tensile Strength (MPa) | LightGBM | 0.89 | 2.1 MPa | 3.4 MPa | Filler Aspect Ratio |

| Degradation Rate k (day⁻¹) | LightGBM | 0.82 | 0.003 day⁻¹ | 0.007 day⁻¹ | Ester Bond Density |

Visualizations

Figure: High-Throughput AI Model Development Workflow

Figure: GNN Architecture for Polymer Property Prediction

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Materials and Reagents for AI-Driven HTT of Composites

| Item / Reagent | Function in HTT/AI Pipeline | Example Product / Specification |

|---|---|---|

| Robotic Liquid Handler | Precise dispensing of monomers, initiators, and fillers for combinatorial library synthesis. | Beckman Coulter Biomek i7, with thermal control. |

| Combinatorial Library Plates | High-density arrays for parallel synthesis and testing. | 96-well or 384-well plates compatible with organic solvents. |

| Array Nanoindenter | High-throughput mechanical property mapping at micro-scale. | Bruker Hysitron TI Premier with 96-tip array. |

| Automated HPLC/UPLC System | Quantitative analysis of degradation products for kinetic rate determination. | Waters Acquity UPLC with autosampler. |

| Chemical Features Database | Provides computed molecular descriptors (e.g., logP, polar surface area) for AI features. | RDKit or Dragon software descriptors. |

| Graph Neural Network Framework | Software for building models that learn directly from molecular graphs. | PyTorch Geometric (PyG) or Deep Graph Library (DGL). |

| Hyperparameter Optimization Tool | Automates the search for optimal model parameters. | Optuna or Ray Tune. |

Within the framework of a thesis on AI-driven high-throughput (HT) testing for polymer composites, this document details application notes and protocols for developing targeted biomedical materials. The integration of AI and HT experimentation accelerates the discovery and optimization of polymers for biocompatible coatings and controlled-release drug delivery matrices. These approaches enable rapid screening of composition-structure-property-performance relationships, fundamentally advancing the design cycle.

AI-Driven HT Screening Framework

AI models, trained on large-scale experimental data, predict key performance indicators (KPIs) for new polymer formulations before synthesis. HT robotic platforms then validate these predictions through parallel synthesis and characterization. This closed-loop system iteratively refines the AI models, creating a powerful discovery engine.

Key Performance Indicators (KPIs) for Targeted Applications

The following quantitative targets guide the AI-driven design process for the two application classes.

Table 1: Target KPIs for Biocompatible Coatings and Controlled-Release Matrices

| Application | Primary KPIs | Target Values | HT Screening Method |

|---|---|---|---|

| Biocompatible Coatings (e.g., for implants) | Protein Adsorption | < 0.5 µg/cm² (Albumin) | Micro-BCA Assay Array |

| | Cell Viability (MTT Assay) | > 90% (vs. control) | 96-well Plate Cytotoxicity |

| | Hydrophilicity (Water Contact Angle) | 40° - 70° | Automated Goniometry |

| | Bacterial Adhesion Reduction | > 80% (vs. uncoated) | Fluorescent Staining & HT Imaging |

| Controlled-Release Matrices (e.g., for drugs) | Drug Loading Capacity | 5 - 30% (w/w) | UV-Vis Spectroscopy Array |

| | Cumulative Release at t* | 20-80% (t*=24h) | HPLC-UV in 96-well Format |

| | Release Exponent (n, Korsmeyer-Peppas Model) | 0.45 < n < 0.89 | Model Fitting to HT Release Data |

| | Matrix Degradation Time | 1 week - 6 months | Automated Mass Loss Tracking |
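The release exponent n in Table 1 is conventionally obtained from the power-law (Korsmeyer-Peppas) form F(t) = k·tⁿ, fitted on a log-log scale to roughly the first 60% of release. The following is a minimal sketch under that assumption; the function name, the 0.6 cutoff default, and the synthetic data are illustrative.

```python
import numpy as np


def fit_release_exponent(t_hours, cumulative_fraction, max_fraction=0.6):
    """Fit log(F) = log(k) + n * log(t) on points with 0 < F <= max_fraction.

    Returns (n, k). Points at t = 0 or F = 0 are dropped because their
    logarithm is undefined.
    """
    t = np.asarray(t_hours, dtype=float)
    f = np.asarray(cumulative_fraction, dtype=float)
    mask = (t > 0) & (f > 0) & (f <= max_fraction)
    if mask.sum() < 2:
        raise ValueError("need at least two points in the fitted range")
    n, log_k = np.polyfit(np.log(t[mask]), np.log(f[mask]), 1)
    return float(n), float(np.exp(log_k))


# Synthetic release curve with n = 0.6 and k = 0.08 (made-up values),
# sampled at the time points used in the release protocol.
t = np.array([1, 2, 4, 8, 24, 48, 72], dtype=float)
f = np.minimum(0.08 * t ** 0.6, 1.0)
n_hat, k_hat = fit_release_exponent(t, f)
in_target = 0.45 < n_hat < 0.89  # KPI window from Table 1
```

In a screening run this function would be applied per well, and `in_target` becomes one boolean column among the KPIs fed back to the AI design module.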

Detailed Experimental Protocols

Protocol 1: HT Synthesis & Characterization of Polymer Composite Library

Objective: To robotically synthesize a library of candidate polymers (e.g., PLGA-PEG blends with varied ratios and molecular weights) and perform initial characterization.

- AI-Guided Formulation: Input desired KPI targets (Table 1) into the AI design module to receive 96 candidate formulations.

- Robotic Dispensing: Using a liquid handling robot, dispense calculated volumes of polymer stock solutions (in DMSO or dioxane) and cross-linker/catalyst into a 96-vial reaction block.

- Parallel Synthesis: Conduct reactions under controlled atmosphere (N₂) with block heating at 70°C for 12 hours.

- HT Precipitation & Washing: Add each reaction mixture to a deep-well plate containing deionized water. Agitate, centrifuge, and aspirate supernatant automatically.

- Primary Characterization: Redissolve polymer precipitates in standard solvent. Use automated systems for:

- Viscosity: Micro-capillary viscometry.

- Molecular Weight Distribution: Rapid GPC with multi-angle light scattering (MALS) detector in an array format.
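The "calculated volumes" in the robotic dispensing step reduce to simple mass-balance arithmetic. The sketch below assumes a two-component PLGA-PEG blend specified by total polymer mass and PEG weight fraction; the function name, stock concentrations, and target masses are hypothetical.

```python
def dispense_volumes_ul(total_polymer_mg, peg_weight_fraction,
                        plga_stock_mg_per_ml, peg_stock_mg_per_ml):
    """Return stock volumes (in µL) for one vial of a PLGA-PEG blend."""
    if not 0.0 <= peg_weight_fraction <= 1.0:
        raise ValueError("peg_weight_fraction must lie in [0, 1]")
    peg_mg = total_polymer_mg * peg_weight_fraction
    plga_mg = total_polymer_mg - peg_mg
    return {
        "PLGA_uL": 1000.0 * plga_mg / plga_stock_mg_per_ml,  # mL -> µL
        "PEG_uL": 1000.0 * peg_mg / peg_stock_mg_per_ml,
    }


# Example: 50 mg polymer at 20 wt% PEG, from 100 mg/mL PLGA and
# 50 mg/mL PEG stocks (all values made up for illustration).
volumes = dispense_volumes_ul(50.0, 0.2, 100.0, 50.0)
```

Here 40 mg of PLGA and 10 mg of PEG are required, which works out to 400 µL and 200 µL of the respective stocks.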

Protocol 2: HT Biocompatibility & Protein Adsorption Screening

Objective: To assess coating biocompatibility by quantifying cell viability and protein adsorption in a 96-well format.

- Coating Deposition: Spin-coat or dip-coat polymer solutions from Protocol 1 onto 96-well cell culture plates with a robotic arm. Sterilize under UV light for 30 min.

- Protein Adsorption (Micro-BCA Assay): a. Add 200 µL of 1 mg/mL Bovine Serum Albumin (BSA) in PBS to each well. Incubate (37°C, 1h). b. Aspirate and wash 3x with PBS. c. Add 150 µL of Micro-BCA working reagent. Incubate (60°C, 1h). d. Measure absorbance at 562 nm. Quantify adsorbed protein against a standard curve.

- Cell Viability (MTT Assay): a. Seed NIH/3T3 fibroblasts at 10,000 cells/well in complete media. Incubate (37°C, 5% CO₂, 48h). b. Add 20 µL of MTT reagent (5 mg/mL) per well. Incubate (4h). c. Aspirate media, add 150 µL DMSO to solubilize formazan crystals. d. Measure absorbance at 570 nm. Viability (%) = (Abs_sample / Abs_control) × 100.
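The two plate-reader calculations in this protocol can be sketched as follows. The assumptions are labeled: absorbances are taken as already blank-corrected, the standard curve is treated as linear over the working range, and the 0.32 cm² well area is an assumed value for a typical 96-well plate. Function names and all numbers are illustrative.

```python
import numpy as np


def adsorbed_protein_ug_per_cm2(abs_sample, std_ug, std_abs, well_area_cm2):
    """Convert A562 readings to adsorbed protein density via a linear standard curve."""
    slope, intercept = np.polyfit(std_ug, std_abs, 1)  # A = slope * mass + intercept
    mass_ug = (np.asarray(abs_sample, dtype=float) - intercept) / slope
    # Readings below the curve intercept are reported as zero, not negative mass.
    return np.clip(mass_ug, 0.0, None) / well_area_cm2


def viability_percent(abs_sample, abs_control):
    """Viability (%) = (Abs_sample / Abs_control) * 100, from A570 readings."""
    return 100.0 * np.asarray(abs_sample, dtype=float) / float(abs_control)


# Made-up BSA standards (µg per well) and their absorbances.
std_ug = np.array([0.0, 0.5, 1.0, 2.0])
std_abs = 0.05 + 0.10 * std_ug

density = adsorbed_protein_ug_per_cm2([0.066], std_ug, std_abs, well_area_cm2=0.32)
viability = viability_percent([0.90, 0.45], abs_control=1.00)
```

The resulting `density` and `viability` arrays map directly onto the first two coating KPIs, so each well can be compared against its target in one vectorized step.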

Protocol 3: HT Drug Release Profiling for Matrix Formulations

Objective: To characterize the controlled-release kinetics of a model drug (e.g., Doxorubicin) from polymer matrices.

- Drug-Loaded Matrix Fabrication: Mix polymer solutions with doxorubicin-HCl (10% w/w relative to polymer). Dispense 50 µL aliquots into 96-well filter plates. Lyophilize to form solid matrices.

- Release Study Setup: Place the filter plate atop a 96-well collection plate. Add 150 µL of phosphate-buffered saline (PBS, pH 7.4) release medium to each well of the filter plate.

- Automated Sampling: At predetermined time points (1, 2, 4, 8, 24, 48, 72h), apply vacuum to filter the entire release medium into the collection plate. Immediately replenish with 150 µL fresh PBS.

- Quantification: Analyze drug concentration in each collected sample using a plate-reader compatible HPLC-UV system or direct UV-Vis measurement at 480 nm. Calculate cumulative release.
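Because the entire release medium is collected and replaced at every time point, the cumulative release is the running sum of the drug mass found in each collected sample, divided by the loaded dose. A minimal sketch follows, assuming concentrations in µg/mL; the function name, the 50 µg loaded dose, and the concentration values are hypothetical.

```python
import numpy as np


def cumulative_release_percent(conc_ug_per_ml, sample_volume_ml, drug_loaded_ug):
    """Cumulative release (%) for a full-medium-replacement sampling scheme."""
    released_ug = np.asarray(conc_ug_per_ml, dtype=float) * sample_volume_ml
    return 100.0 * np.cumsum(released_ug) / drug_loaded_ug


# Time points from the protocol and made-up concentrations for one well.
time_h = [1, 2, 4, 8, 24, 48, 72]
conc = [20.0, 15.0, 18.0, 22.0, 40.0, 25.0, 10.0]
release = cumulative_release_percent(conc, sample_volume_ml=0.150, drug_loaded_ug=50.0)
```

With partial sampling instead of full replacement, a correction for drug remaining in the well would be needed; the running sum here is valid only because each sample removes all of the medium.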

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for HT Development of Biomedical Polymers

| Item | Function | Example Product / Specification |

|---|---|---|

| PLGA (Poly(lactic-co-glycolic acid)) | Biodegradable polymer backbone for coatings/matrices. | Lactel, 50:50, MW 30,000-60,000 Da |

| PEG (Polyethylene glycol) | Hydrophilic modifier to reduce protein adhesion & modulate release. | Sigma-Aldrich, MW 5,000 Da, bifunctional |

| Doxorubicin Hydrochloride | Model chemotherapeutic drug for release studies. | TOKU-E, >98% purity |

| Micro-BCA Protein Assay Kit | Sensitive, plate-based quantitation of adsorbed protein. | Thermo Scientific, 23235 |